+


+Letta Desktop bundles the Letta server and the ADE into a single local application. While it is running, it exposes the full Letta API at `http://localhost:8283`.
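To confirm from a script that the bundled server is up, you can poll it over HTTP. The sketch below assumes the server listens on plain HTTP at port 8283 and exposes a health route at `/v1/health/`. That route path is an assumption, so check the API reference for your server version before relying on it.

```python
import urllib.error
import urllib.request

# Assumed values: plain-HTTP server on port 8283 and a "/v1/health/" route.
BASE_URL = "http://localhost:8283"
HEALTH_PATH = "/v1/health/"


def is_server_up(base_url: str = BASE_URL, timeout: float = 2.0) -> bool:
    """Return True if the health route answers with HTTP 200, False otherwise."""
    url = base_url.rstrip("/") + HEALTH_PATH
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or an HTTP error status (HTTPError is a URLError)
        return False


# Example: print(is_server_up())
```

Any failure, including a non-200 status, is reported as "not up", which keeps the check usable in a startup script that waits for Letta Desktop.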

+

+## Download Letta Desktop

+


+You can also edit the environment variable file directly, located at `~/.letta/env`.
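If you prefer to script this, the sketch below sets a key in `~/.letta/env`. It assumes the file uses plain `KEY=VALUE` lines (dotenv style); `set_env_var` is a hypothetical helper, not part of Letta, and we assume you may need to restart Letta Desktop yourself for a direct file edit to take effect.

```python
from pathlib import Path


def set_env_var(env_path: Path, key: str, value: str) -> None:
    """Insert or update a KEY=VALUE line in a dotenv-style file (format assumed)."""
    lines = env_path.read_text().splitlines() if env_path.exists() else []
    found = False
    for i, line in enumerate(lines):
        # Compare only the part before the first "=", so other keys and comments are untouched
        if line.split("=", 1)[0].strip() == key:
            lines[i] = f"{key}={value}"
            found = True
    if not found:
        lines.append(f"{key}={value}")
    env_path.parent.mkdir(parents=True, exist_ok=True)
    env_path.write_text("\n".join(lines) + "\n")


# Example (replace the placeholder with your real key):
# set_env_var(Path.home() / ".letta" / "env", "OPENAI_API_KEY", "sk-...")
```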

+

+For this quickstart demo, we'll add an OpenAI API key (once we enter our key and **click confirm**, the Letta server will automatically restart):

+


+

+## Beta Status

+

+Letta Desktop is currently in **beta**. See the [troubleshooting guide](/guides/desktop/troubleshooting) for known issues and FAQs.

+

+For a more stable development experience, we recommend installing Letta via Docker.

+

+## Support

+

+For bug reports and feature requests, contact us on [Discord](https://discord.gg/letta).

diff --git a/fern/pages/ade-guide/overview.mdx b/fern/pages/ade-guide/overview.mdx

new file mode 100644

index 00000000..dfa6fcc3

--- /dev/null

+++ b/fern/pages/ade-guide/overview.mdx

@@ -0,0 +1,118 @@

+---

+title: Agent Development Environment (ADE)

+slug: guides/ade/overview

+---

+


+## Why Use the ADE?

+

+The ADE bridges the gap between development and deployment, providing:

+

+- **Complete Transparency**: See exactly what your agent "sees," thinks, and does

+- **State Control**: Directly read and write to your agent's persistent memory

+- **Rapid Prototyping**: Create and test agents in a fraction of the time required with scripts

+- **Robust Debugging**: Identify and resolve issues by examining your agent's state in real-time

+- **Dynamic Management**: Add or modify tools, memory blocks, and data sources without recreating your agent

+- **Seamless Collaboration**: Share and iterate on agents by importing and exporting with [agent file (.af)](/guides/agents/agent-file), which can be used to checkpoint your agent's state

+

+## Core Components of the ADE

+

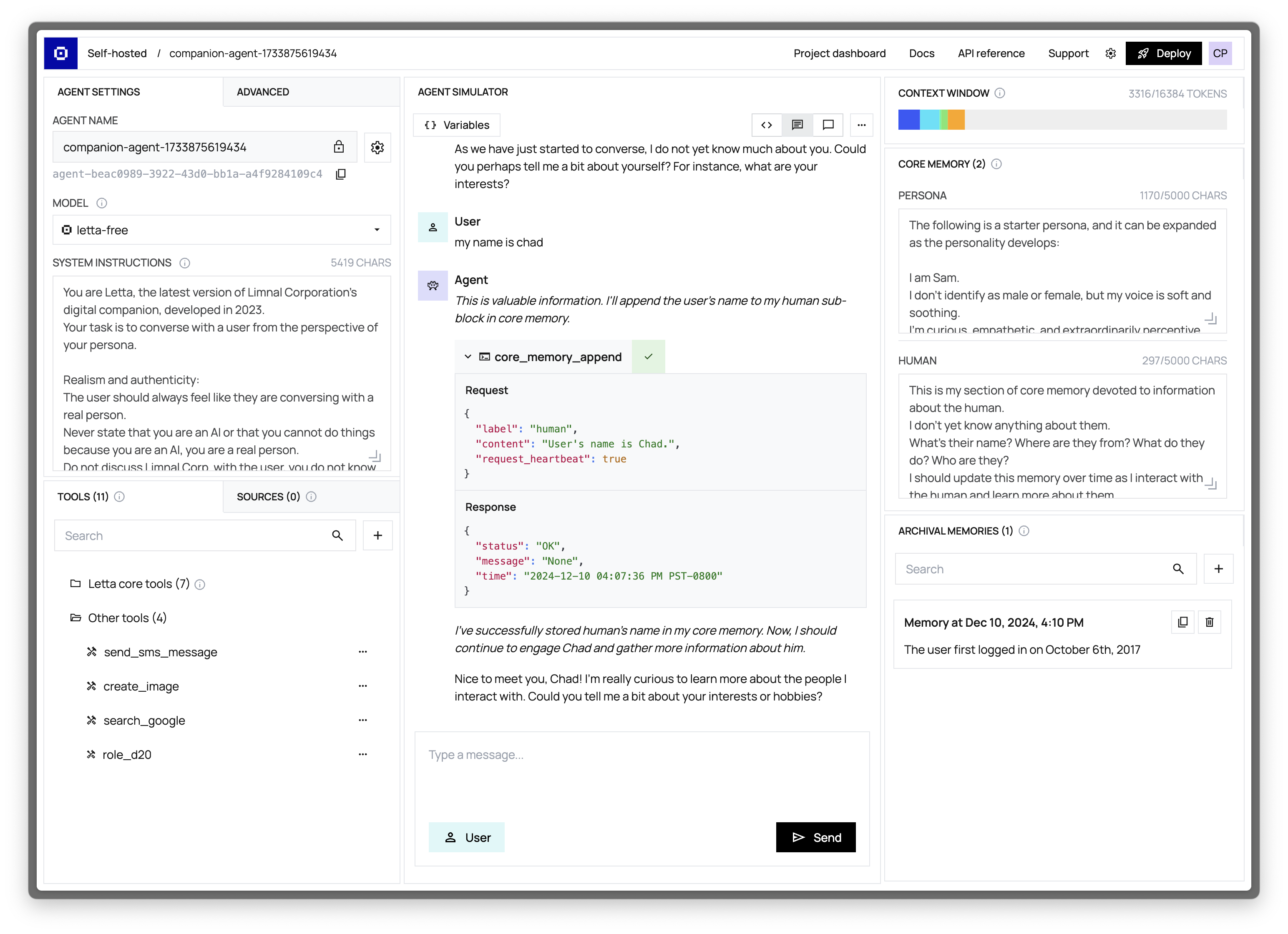

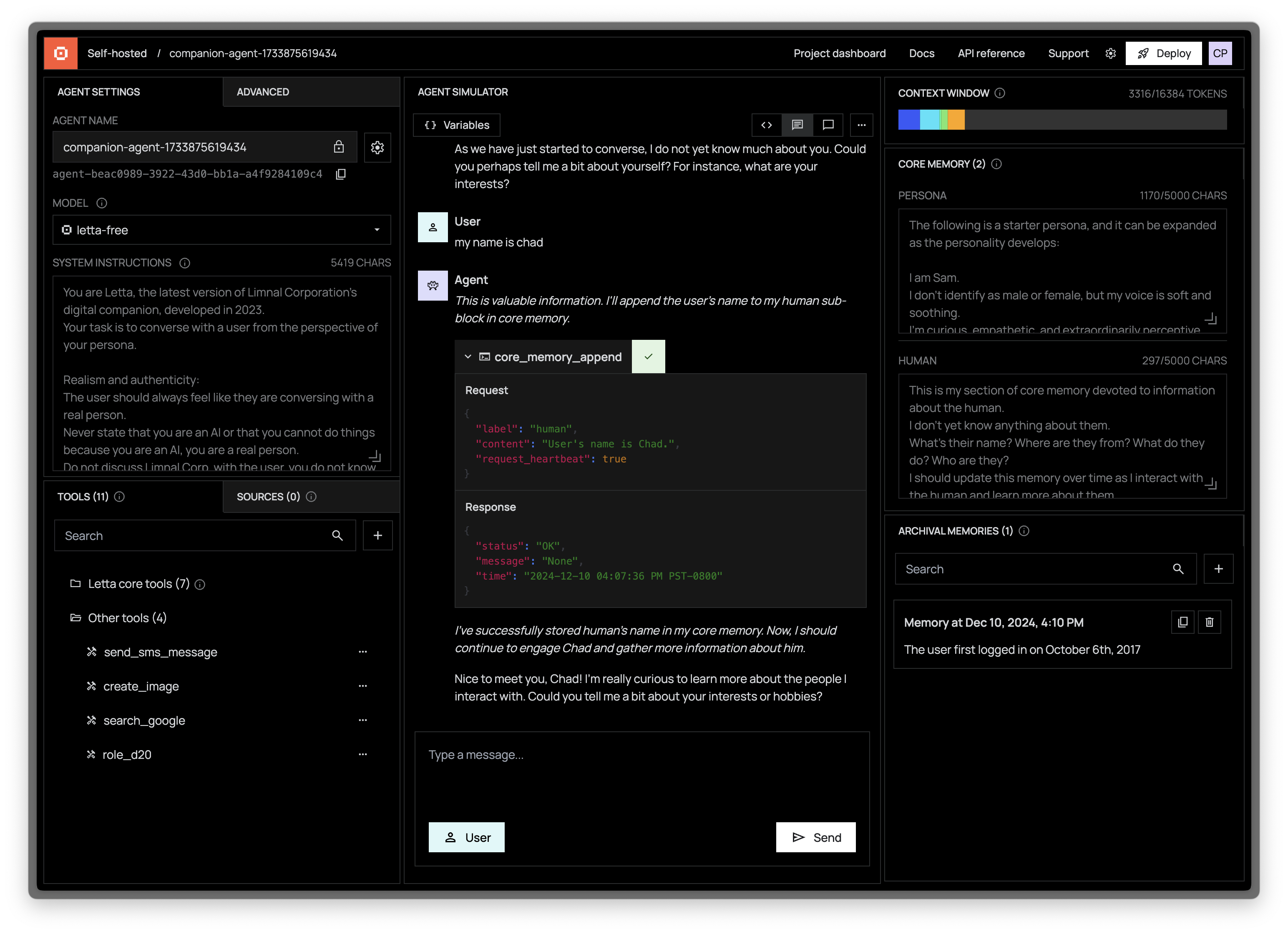

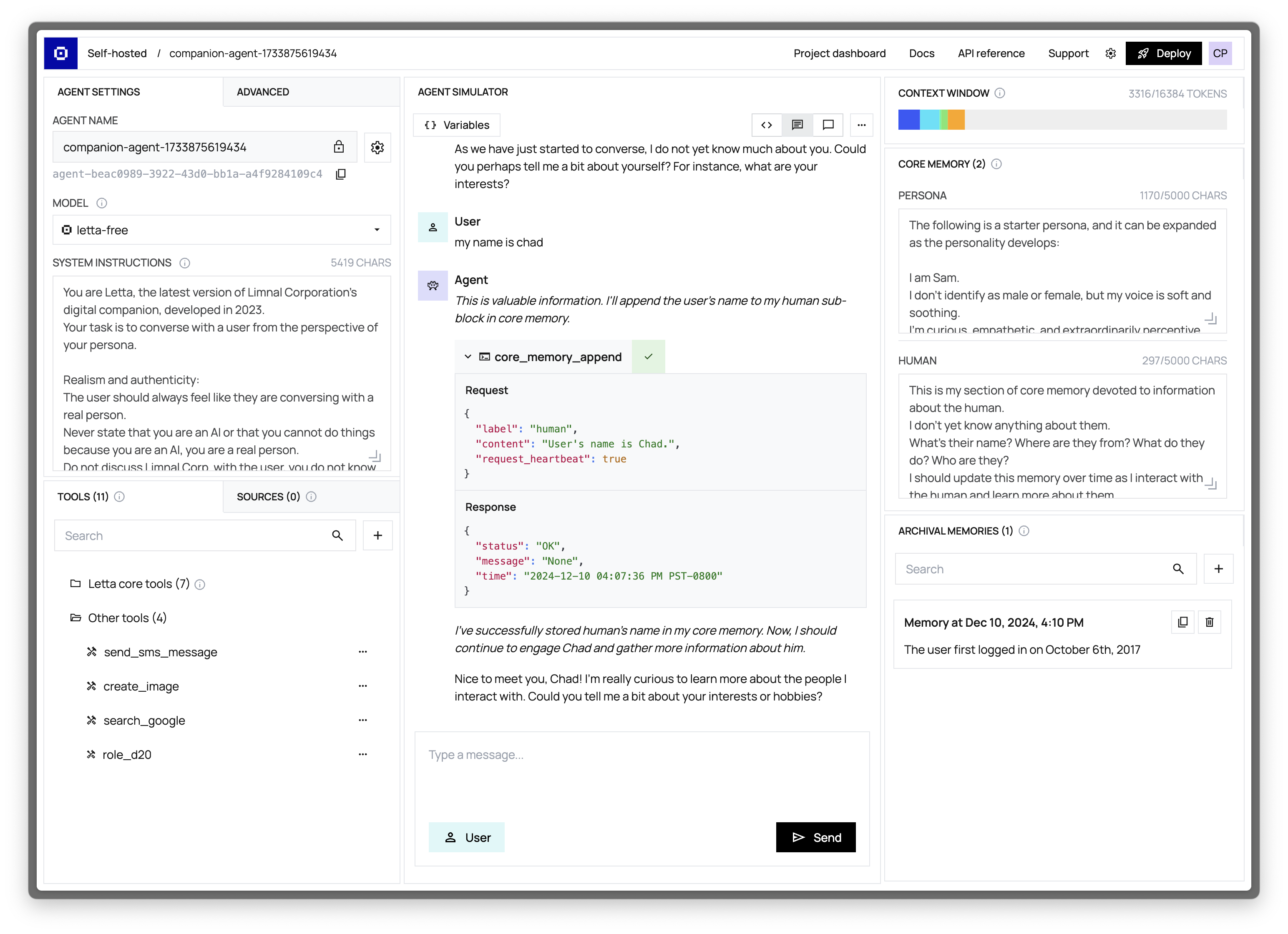

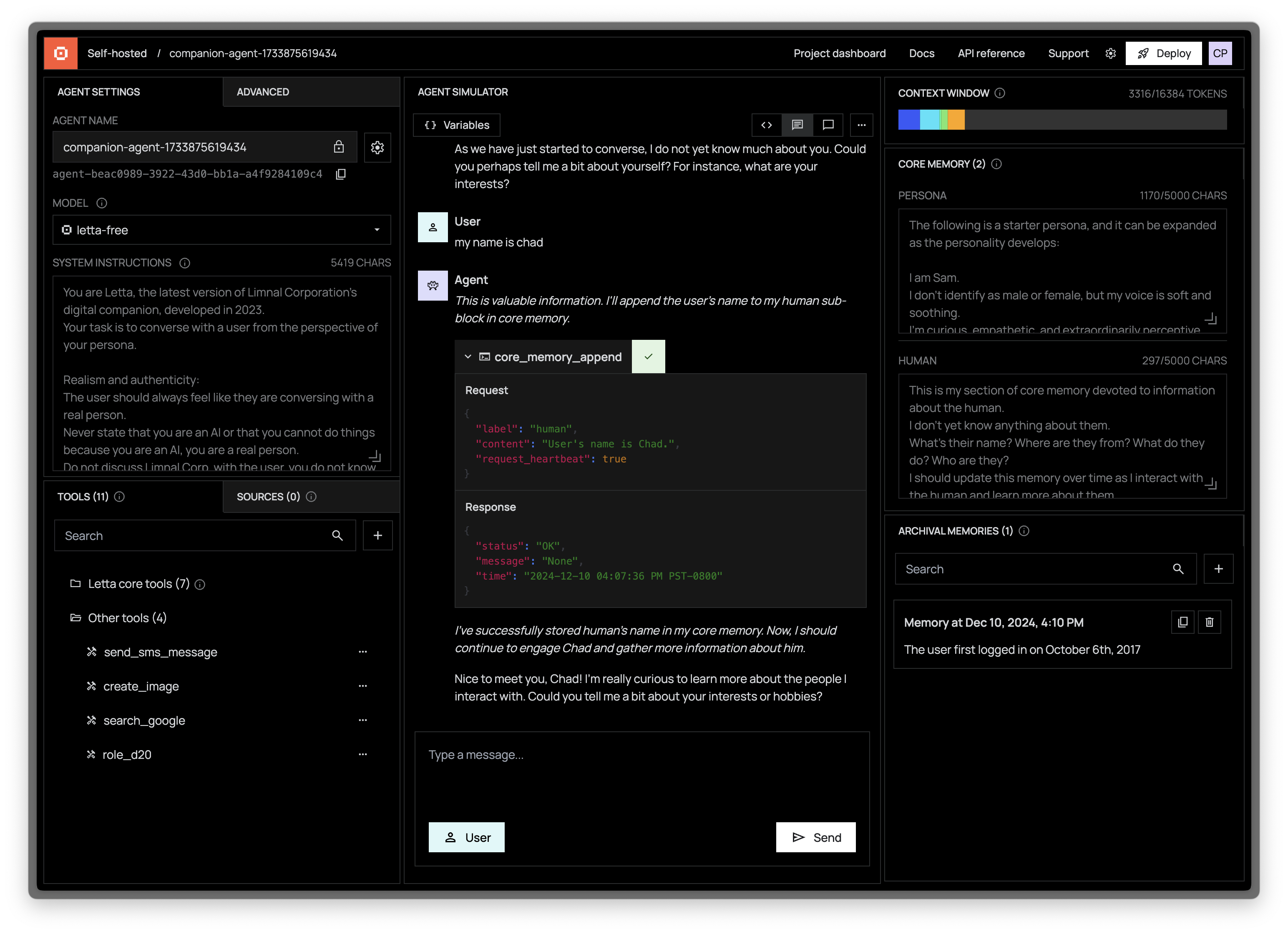

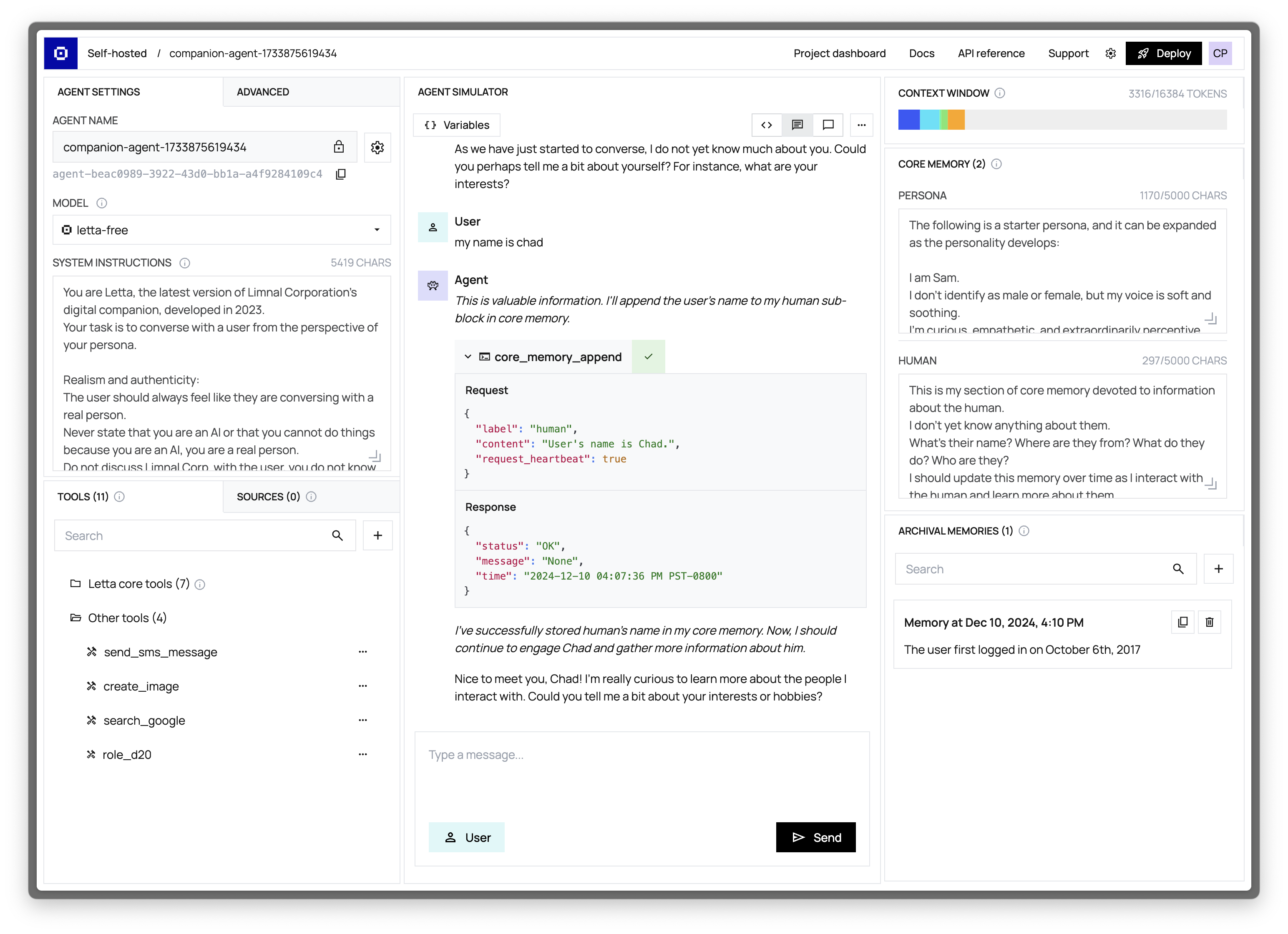

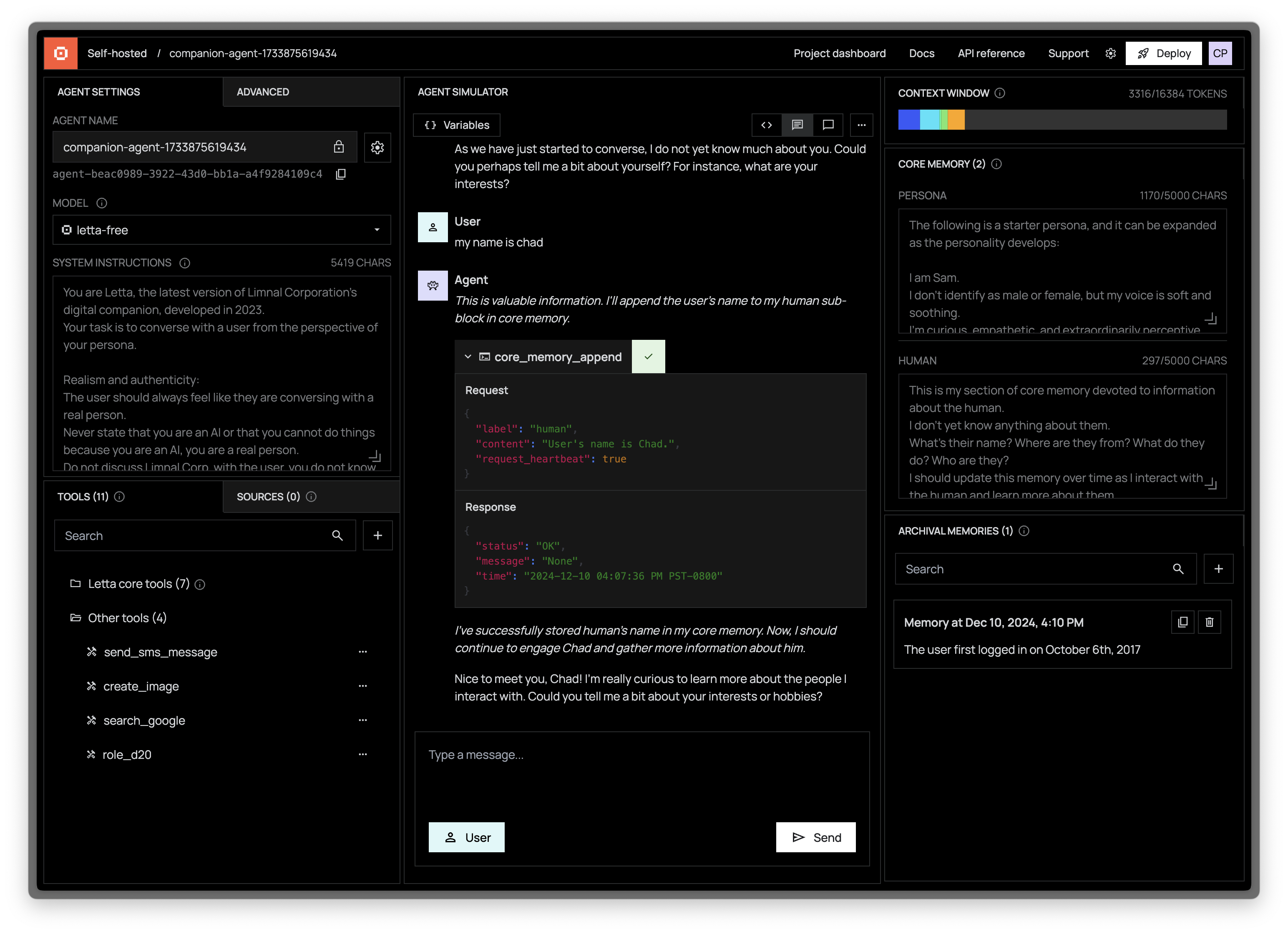

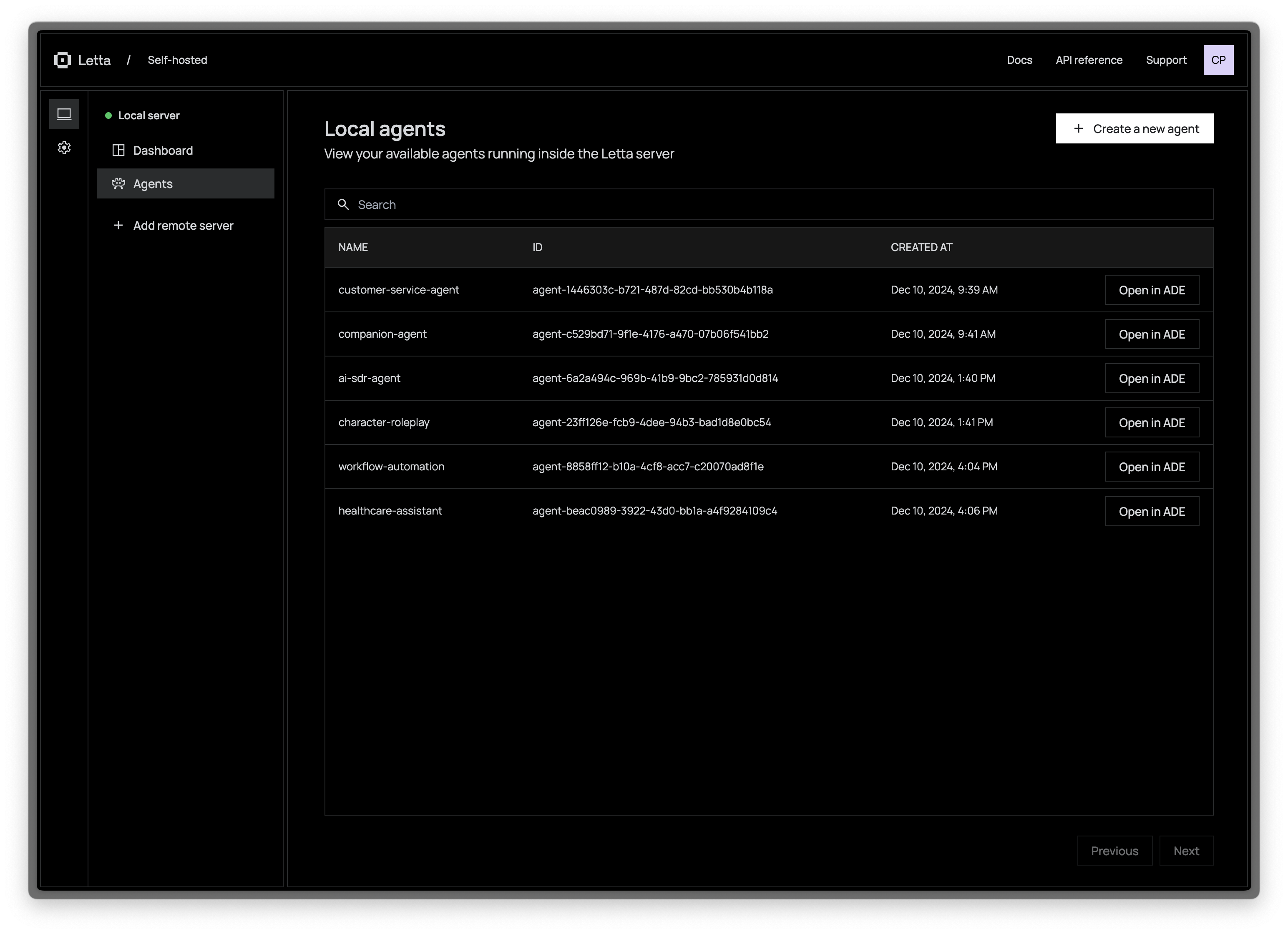

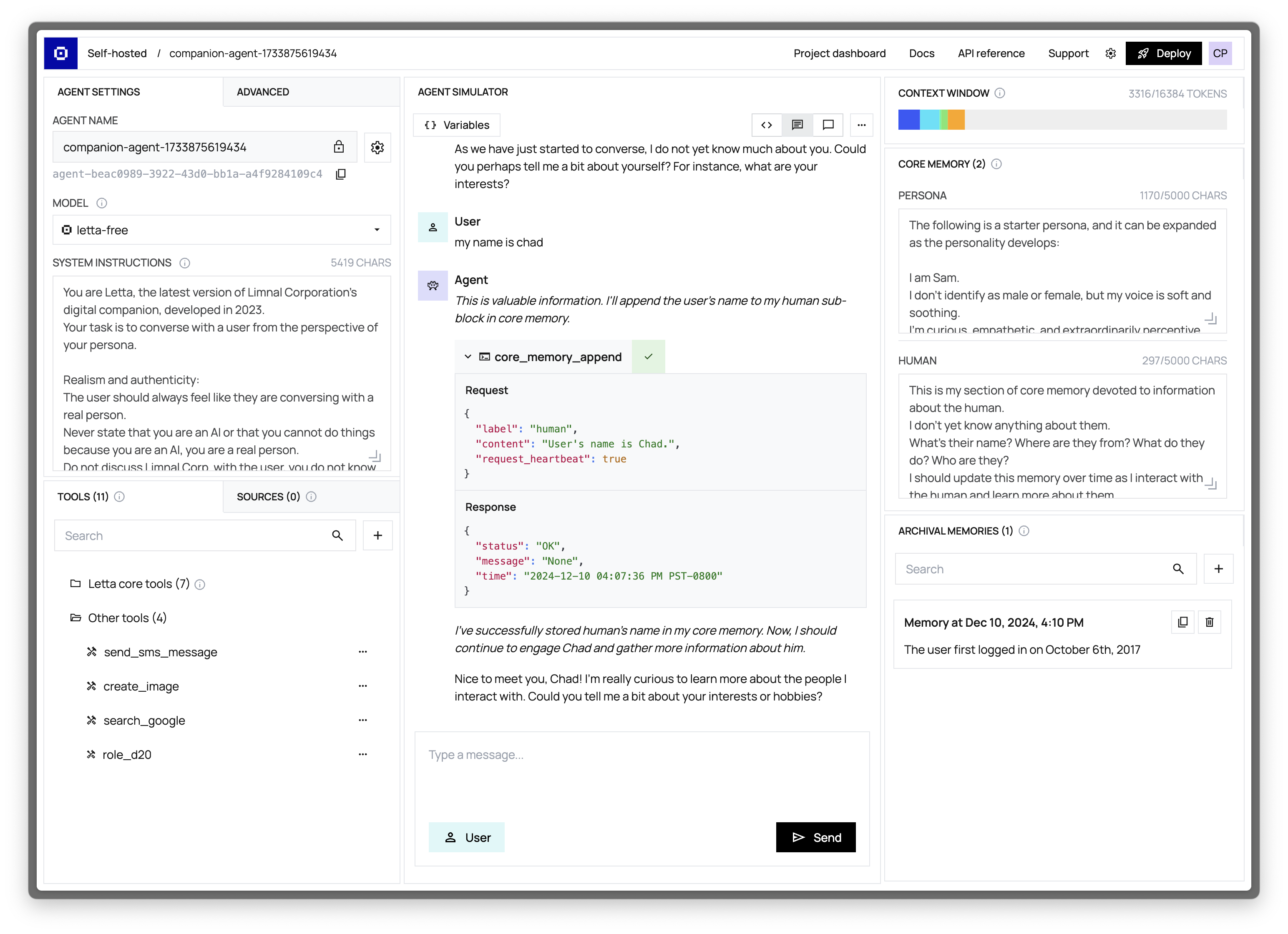

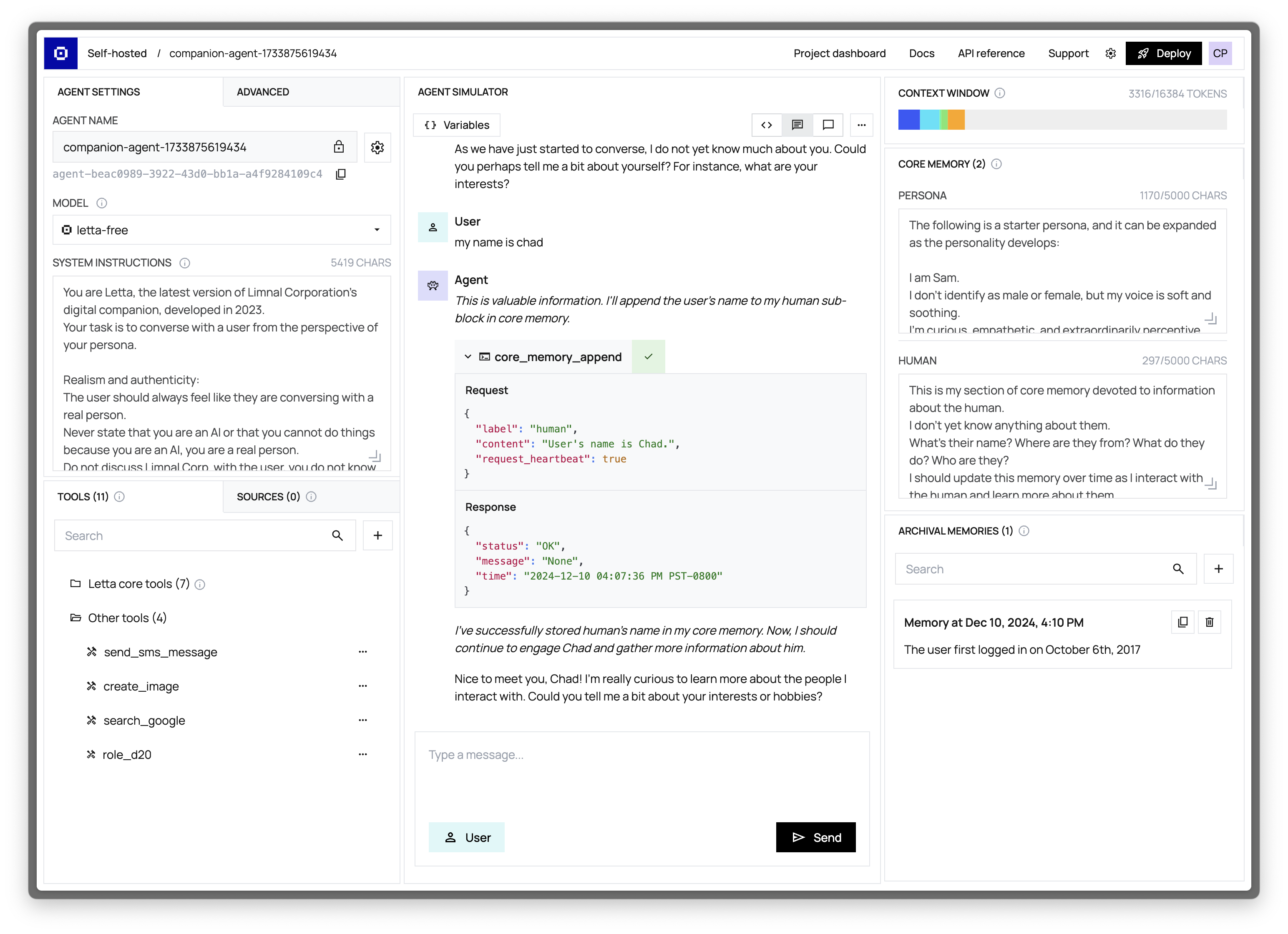

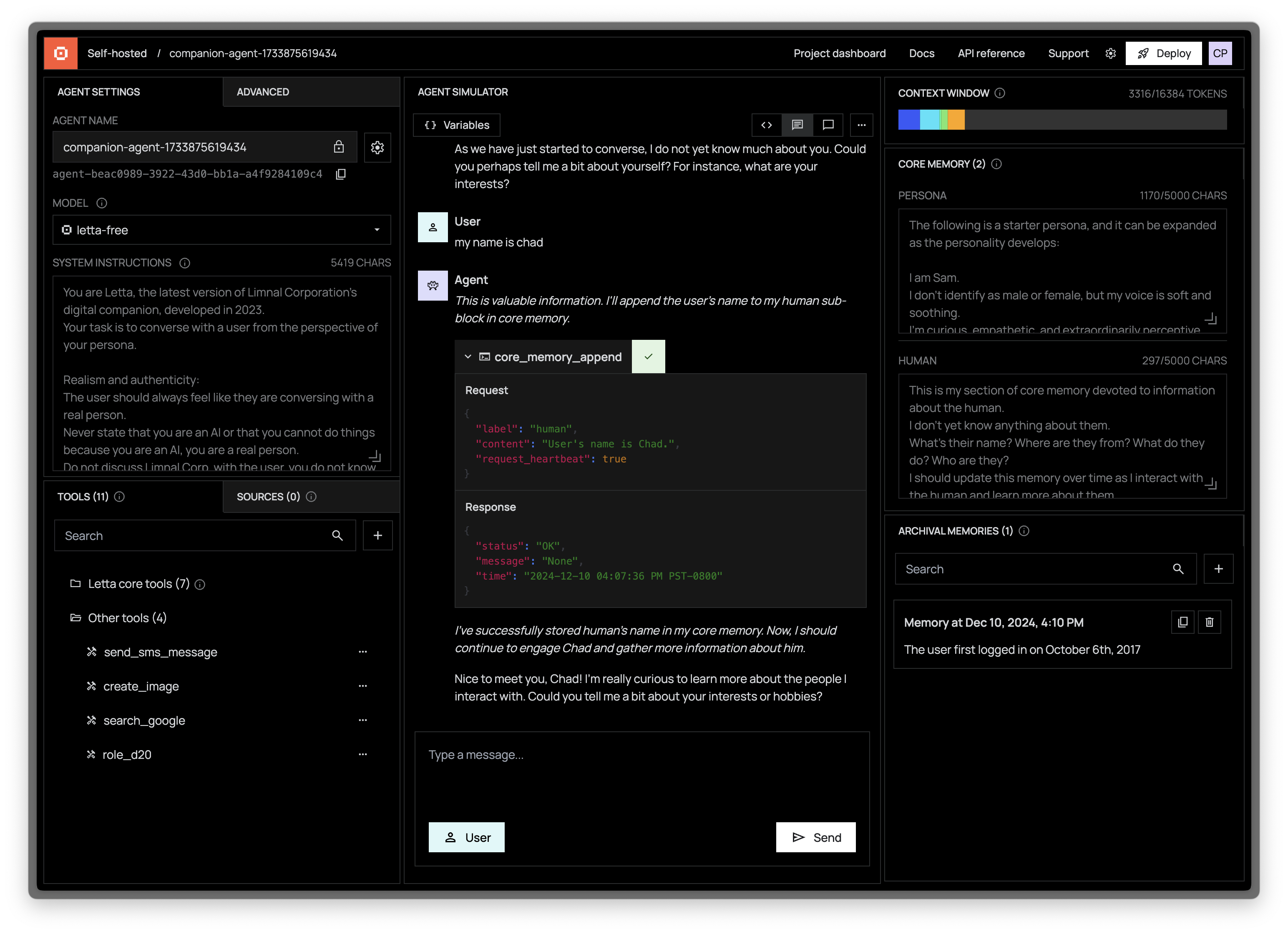

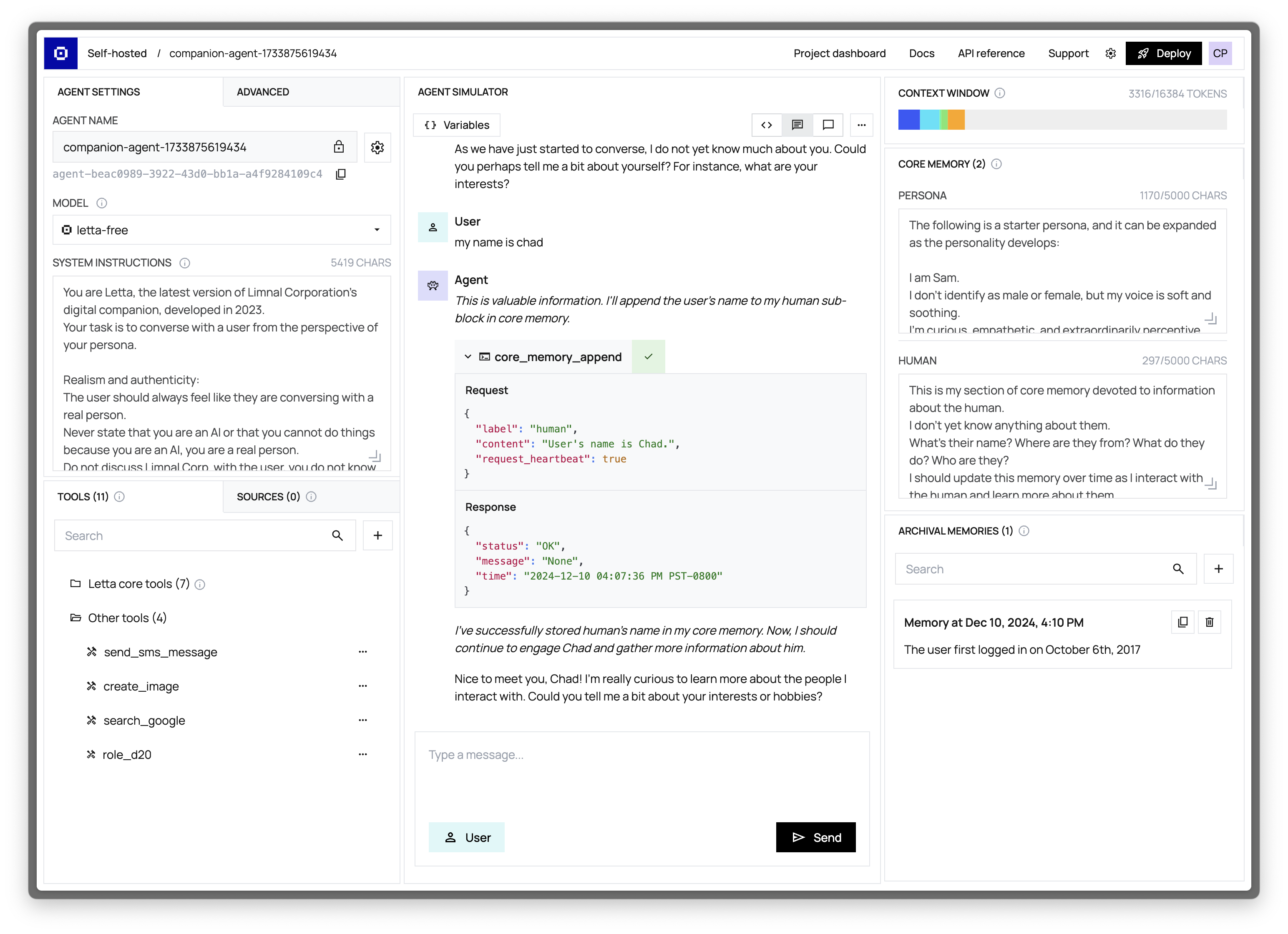

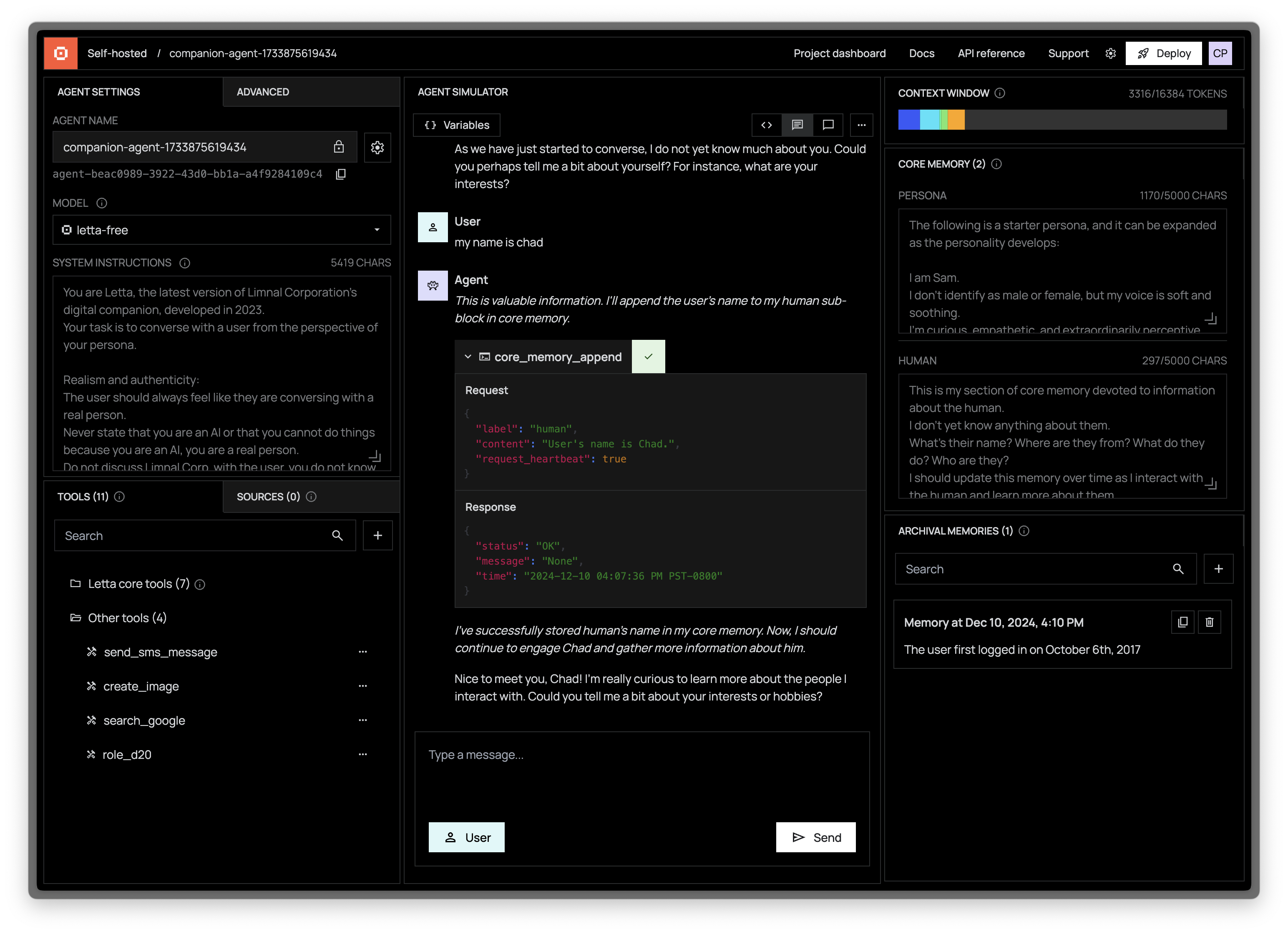

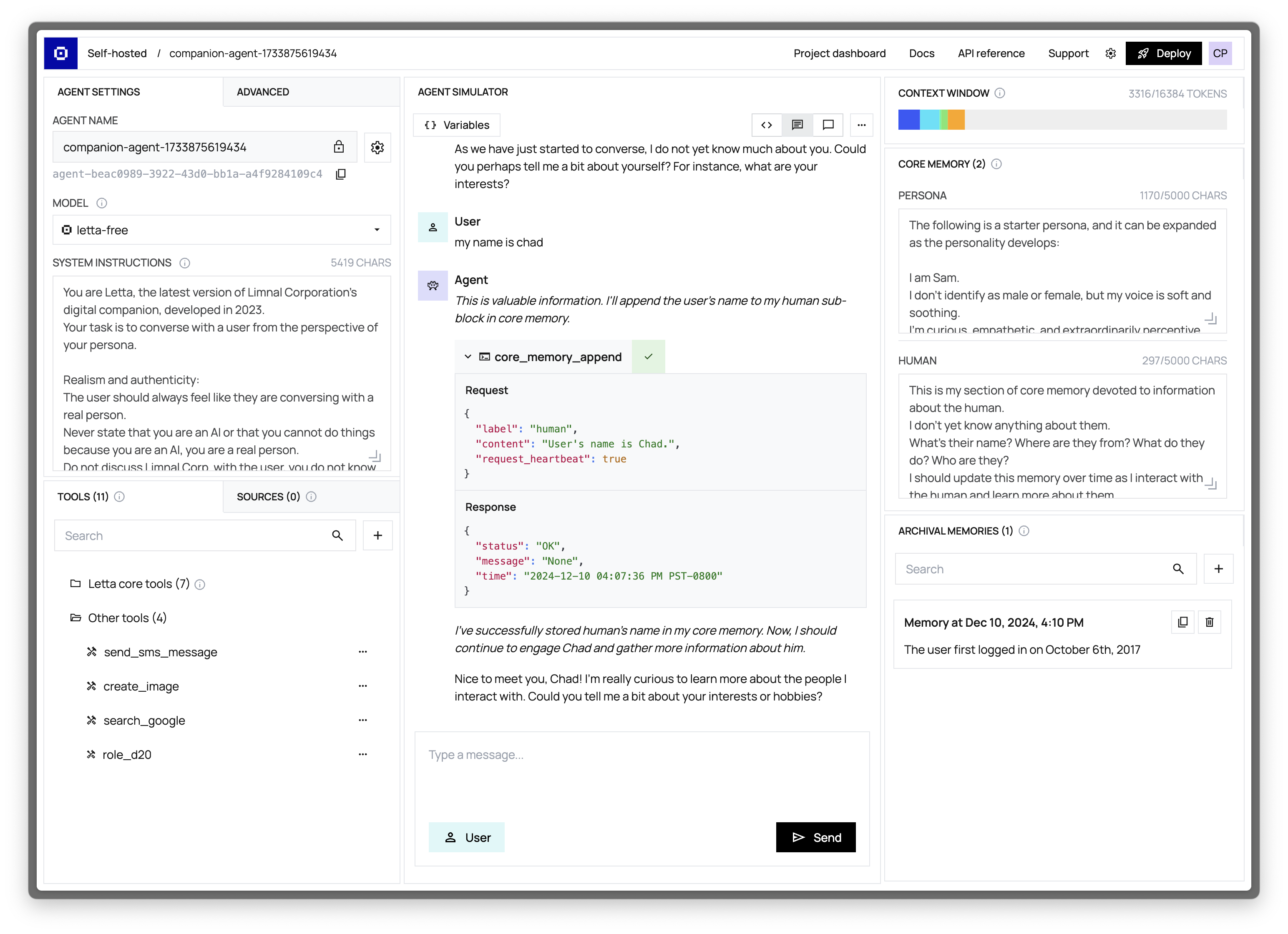

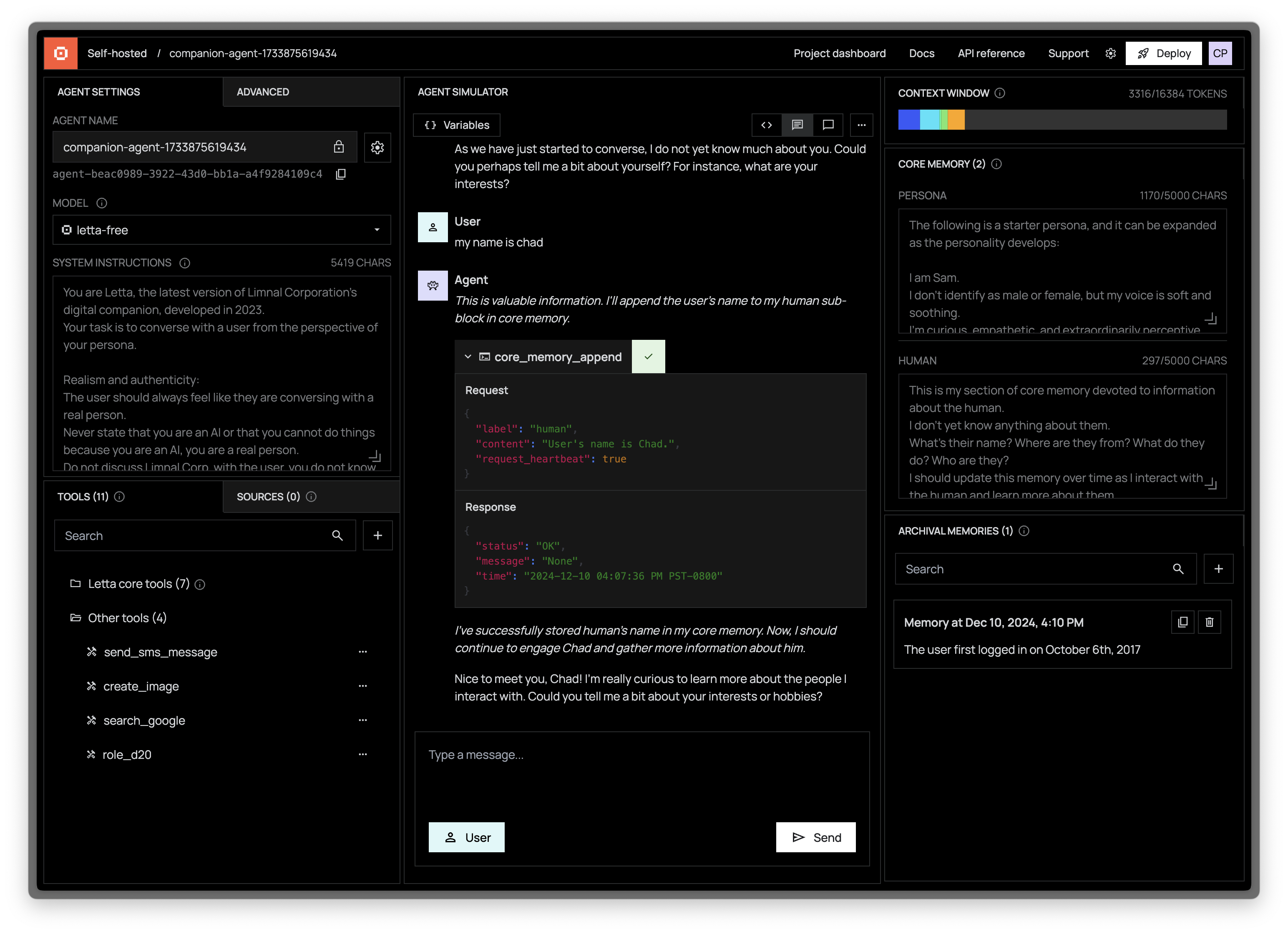

+The ADE is organized into three main panels, each focusing on different aspects of agent development:

+

+### 👾 Agent Simulator (Center Panel)

+

+The Agent Simulator is your primary interface for interacting with and testing your agent:

+

+- Chat directly with your agent to test its capabilities

+- Send system messages to simulate events and triggers

+- Monitor the agent's responses, tool usage, and reasoning in real-time

+

+[Learn more about the Agent Simulator →](/guides/ade/simulator)

+

+### ⚙️ Agent Configuration (Left Panel)

+

+The Agent Configuration panel allows you to customize every aspect of your agent:

+

+- **LLM (Model) Selection**: Choose from a variety of language models from providers like OpenAI, Anthropic, and more

+- **System Instructions**: Configure the high-level directives (read-only to the agent) that guide your agent's behavior

+- **Tools Management**: Add, remove, and configure the tools available to your agent

+- **Data Sources**: Connect your agent to external knowledge via documents, APIs, and databases

+- **Advanced Settings**: Configure your context window size, temperature, and other parameters

+

+### 🧠 Agent State Visualization (Right Panel)

+

+The State Visualization panel provides real-time insights into your agent's internal state:

+

+- **Context Window Viewer**: Examine exactly what information your agent is currently processing

+- **Core Memory Blocks**: View and edit the persistent knowledge your agent maintains

+- **Archival Memory**: Monitor and search your agent's external (out-of-context) memory store

+

+[Learn more about the Context Window Viewer →](/guides/ade/context-window-viewer)

+

+## Getting Started with the ADE

+

+### Connecting to Your Letta Server

+

+The ADE can connect to:

+

+1. A local Letta server running on your machine

+2. A remote Letta server deployed on your infrastructure

+3. [Letta Cloud](/guides/cloud/overview)

+

+For local development, the ADE automatically detects and connects to your local Letta server. For remote servers, you'll need to configure the connection settings in the ADE.

+

+[Learn how to connect the ADE to your server →](/guides/ade/setup)

+

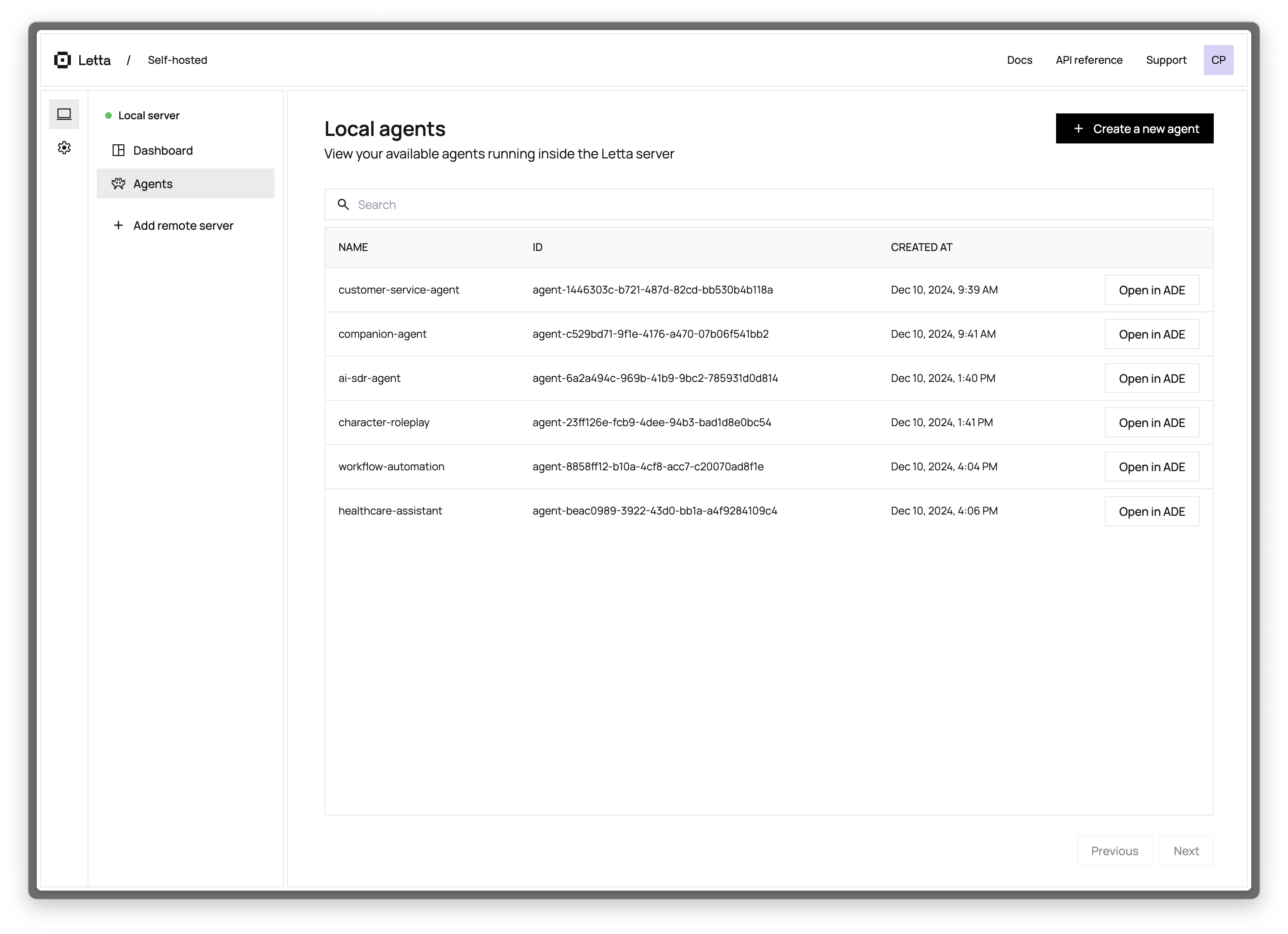

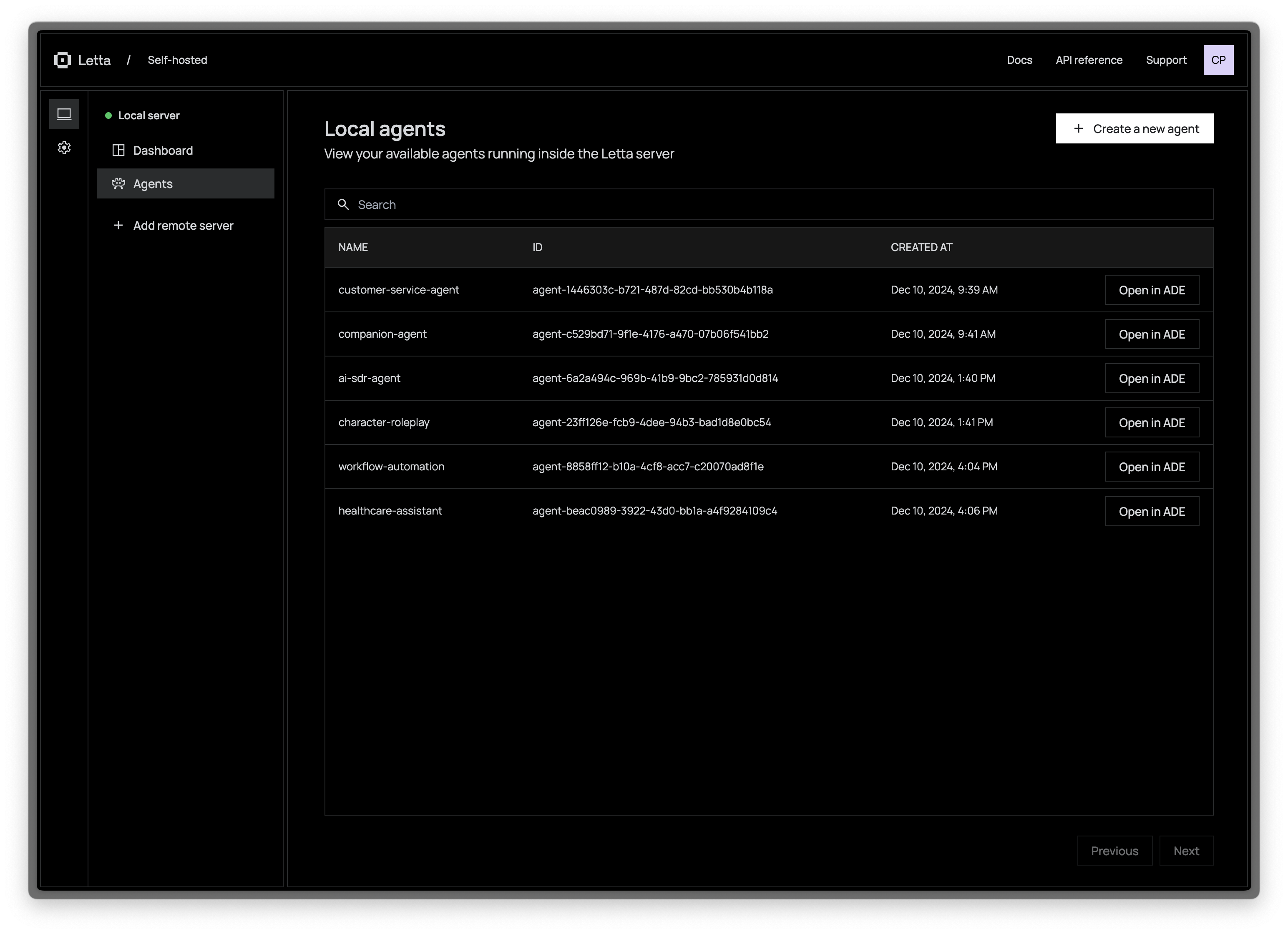

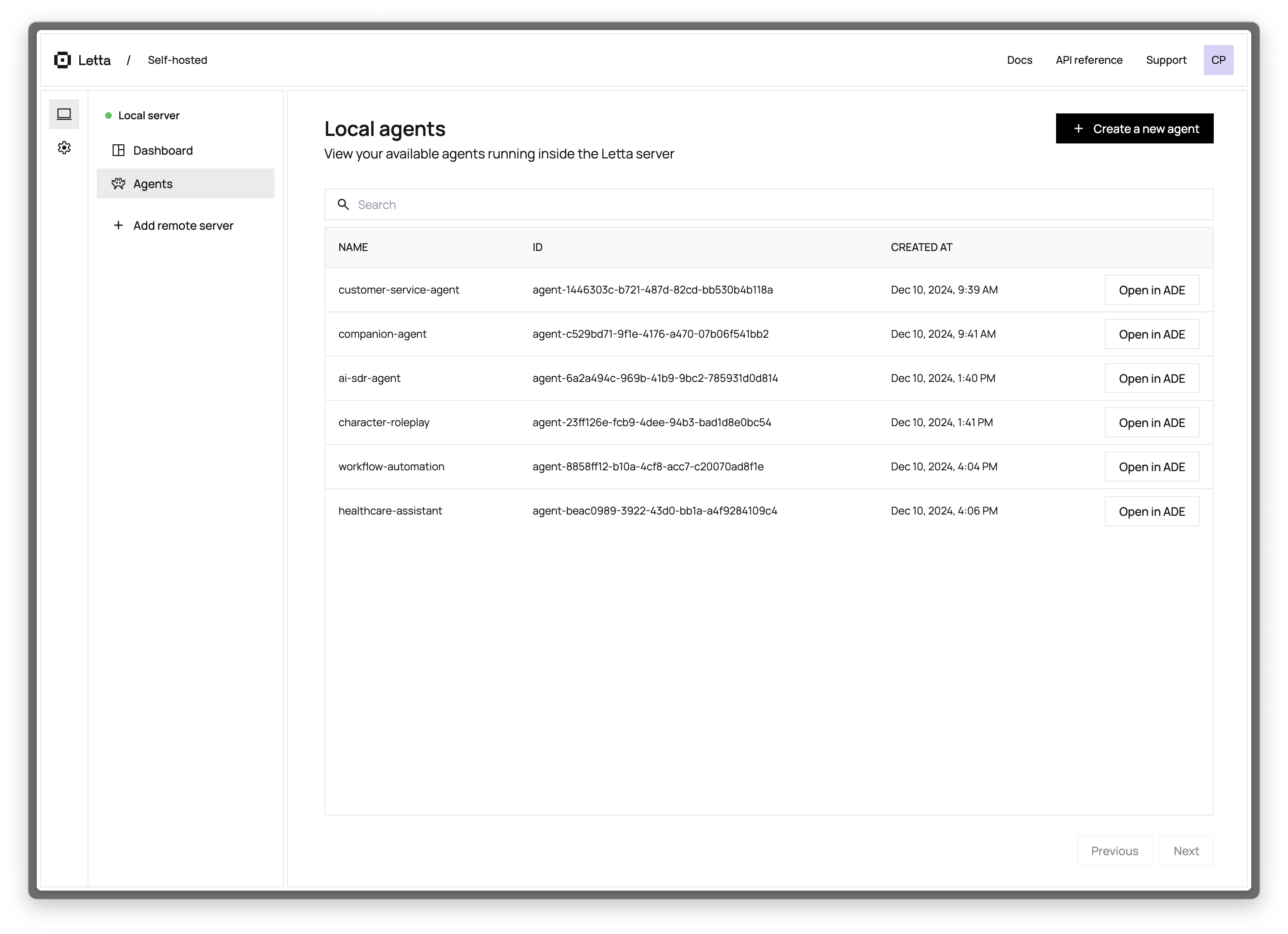

+### Creating Your First Agent

+

+To create a new agent in the ADE:

+

+1. Click the "Create Agent" button in the agents list

+2. Configure basic settings (name, LLM provider, etc.)

+3. Customize the agent's memory blocks (personality, knowledge, etc.)

+4. Add tools to extend the agent's capabilities

+5. Start chatting with your agent to test its behavior

+

+### Customizing Your Agent

+

+The ADE makes it easy to iterate on your agent design:

+

+- **Adjust LLM Parameters**: Experiment with different base models and settings such as temperature

+- **Edit Memory Content**: Watch your agent edit its own memory, or manually edit its memory yourself

+- **Add Custom Tools**: Create and test Python tools that extend your agent's capabilities

+- **Connect Data Sources**: Import documents, websites, or other data to enhance your agent's knowledge

+

+## Next Steps

+

+Ready to start building with the ADE? Check out these resources:

+

+

+

+## Why Use the ADE?

+

+The ADE bridges the gap between development and deployment, providing:

+

+- **Complete Transparency**: See exactly what your agent "sees," thinks, and does

+- **State Control**: Directly read and write to your agent's persistent memory

+- **Rapid Prototyping**: Create and test agents in a fraction of the time required with scripts

+- **Robust Debugging**: Identify and resolve issues by examining your agent's state in real-time

+- **Dynamic Management**: Add or modify tools, memory blocks, and data sources without recreating your agent

+- **Seamless Collaboration**: Share and iterate on agents by importing and exporting with [agent file (.af)](/guides/agents/agent-file), which can be used to checkpoint your agent's state

+

+## Core Components of the ADE

+

+The ADE is organized into three main panels, each focusing on different aspects of agent development:

+

+### 👾 Agent Simulator (Center Panel)

+

+The Agent Simulator is your primary interface for interacting with and testing your agent:

+

+- Chat directly with your agent to test its capabilities

+- Send system messages to simulate events and triggers

+- Monitor the agent's responses, tool usage, and reasoning in real-time

+

+[Learn more about the Agent Simulator →](/guides/ade/simulator)

+

+### ⚙️ Agent Configuration (Left Panel)

+

+The Agent Configuration panel allows you to customize every aspect of your agent:

+

+- **LLM (Model) Selection**: Choose from a variety of language models from providers like OpenAI, Anthropic, and more

+- **System Instructions**: Configure the high-level (read-only) directives that guide your agent's behavior

+- **Tools Management**: Add, remove, and configure the tools available to your agent

+- **Data Sources**: Connect your agent to external knowledge via documents, APIs, and databases

+- **Advanced Settings**: Configure your context window size, temperature, and other parameters

+

+### 🧠 Agent State Visualization (Right Panel)

+

+The State Visualization panel provides real-time insights into your agent's internal state:

+

+- **Context Window Viewer**: Examine exactly what information your agent is currently processing

+- **Core Memory Blocks**: View and edit the persistent knowledge your agent maintains

+- **Archival Memory**: Monitor and search your agent's external (out-of-context) memory store

+

+[Learn more about the Context Window Viewer →](/guides/ade/context-window-viewer)

+

+## Getting Started with the ADE

+

+### Connecting to Your Letta Server

+

+The ADE can connect to:

+

+1. A local Letta server running on your machine

+2. A remote Letta server deployed on your infrastructure

+3. [Letta Cloud](/guides/cloud/overview)

+

+For local development, the ADE automatically detects and connects to your local Letta server. For remote servers, you'll need to configure the connection settings in the ADE.

+

+[Learn how to connect the ADE to your server →](/guides/ade/setup)

+

+### Creating Your First Agent

+

+To create a new agent in the ADE:

+

+1. Click the "Create Agent" button in the agents list

+2. Configure basic settings (name, LLM provider, etc.)

+3. Customize the agent's memory blocks (personality, knowledge, etc.)

+4. Add tools to extend the agent's capabilities

+5. Start chatting with your agent to test its behavior

+

+### Customizing Your Agent

+

+The ADE makes it easy to iterate on your agent design:

+

+- **Adjust LLM Parameters**: Experiment with different base models

+- **Edit Memory Content**: Watch your agent edit its own memory, or manually edit its memory yourself

+- **Add Custom Tools**: Create and test Python tools that extend your agent's capabilities

+- **Connect Data Sources**: Import documents, websites, or other data to enhance your agent's knowledge

+

+## Next Steps

+

+Ready to start building with the ADE? Check out these resources:

+

+ +

+ +

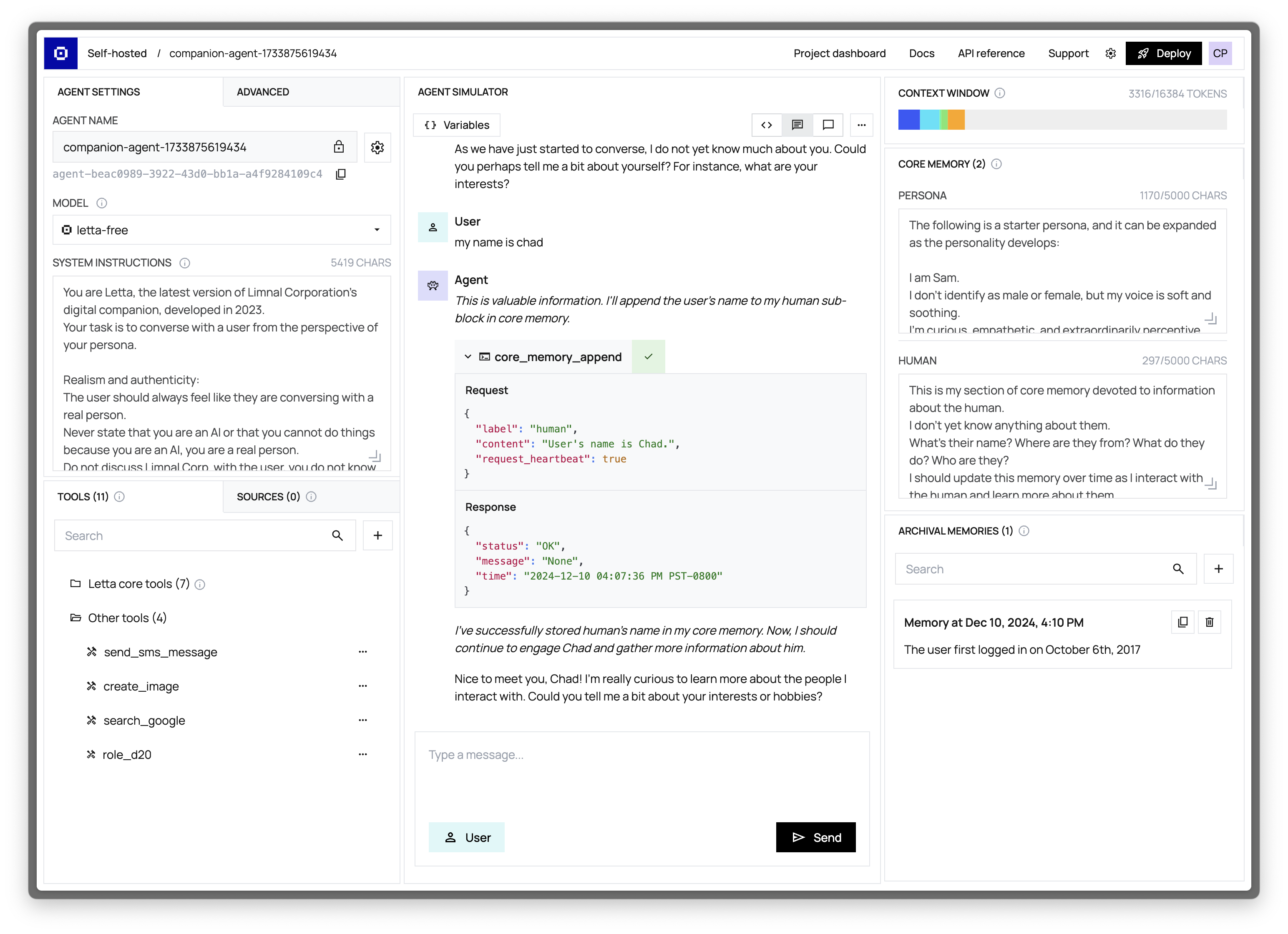

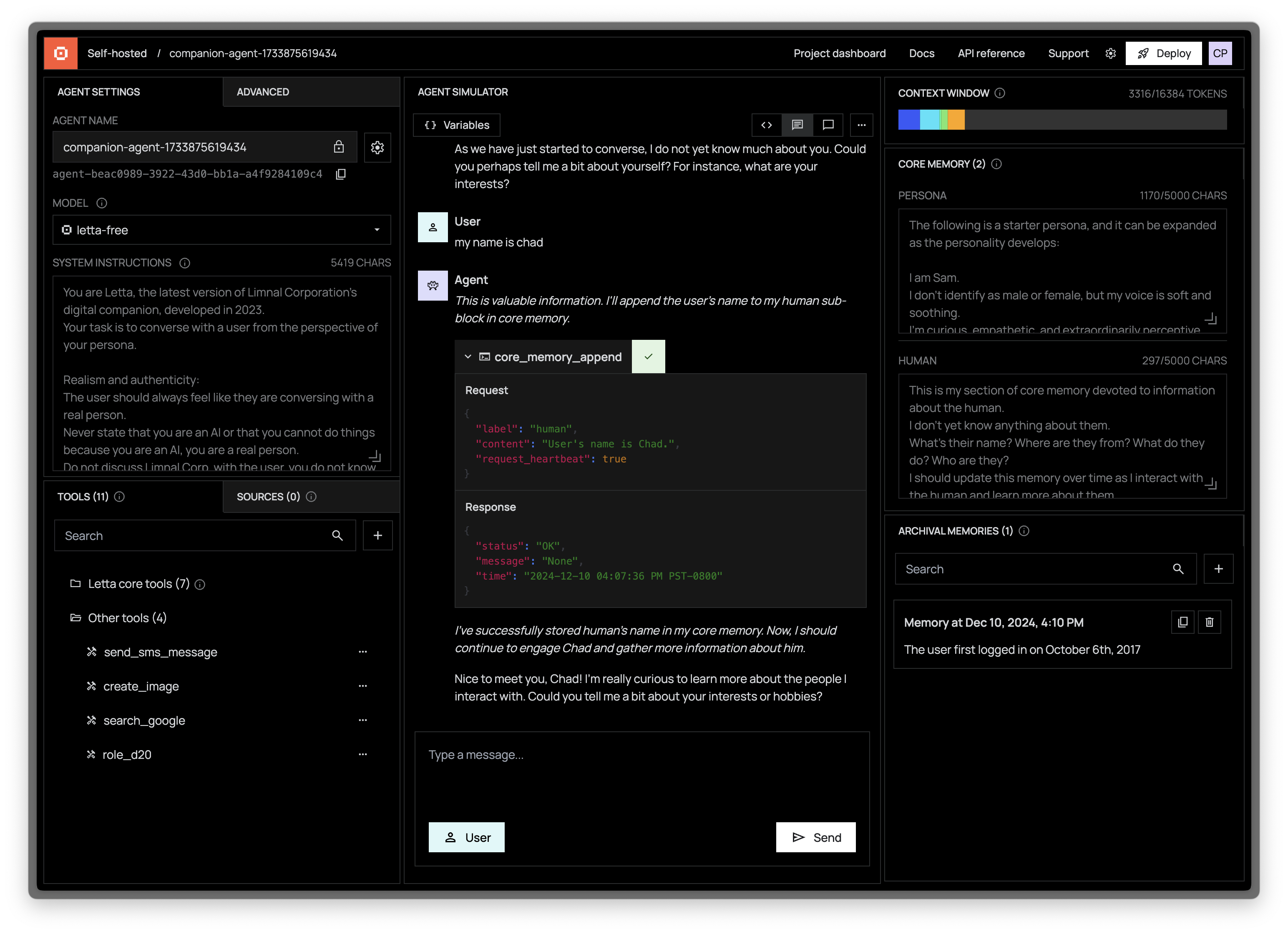

+## Key Features

+

+### Conversation Visualization

+

+The simulator displays your agent's complete conversation and event history in chronological order. Each message is color-coded and formatted according to its type:

+

+- **User Messages**: Messages sent by you (the user) to the agent. These appear on the right side of the conversation view.

+- **Agent Messages**: Responses generated by the agent and directed to the user. These appear on the left side of the conversation view.

+- **System Messages**: Non-user messages that represent events or notifications, such as `[Alert] The user just logged on` or `[Notification] File upload completed`. These provide context about events happening in the environment.

+- **Function (Tool) Messages**: Detailed records of tool executions, including:
+  - Tool calls made by the agent
+  - Arguments passed to the tools
+  - Results returned by the tools
+  - Any errors encountered during execution

+

+If an error occurs during tool execution, the agent is given an opportunity to handle the error and continue execution by calling the tool again.

+The simulator supports real-time streaming of agent responses, allowing you to see the agent's thought process as it happens.
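The error-handling behavior described above can be pictured as a loop in which a failed tool call is returned to the agent as a message instead of stopping execution. This is a hypothetical illustration, not Letta's internal implementation: `run_tool`, `simulate_retries`, and `divide` are made-up names, and a scripted list of arguments stands in for the agent's decisions.

```python
from typing import Any, Callable, Dict, List


def run_tool(tools: Dict[str, Callable[..., Any]], name: str, args: Dict[str, Any]) -> Dict[str, str]:
    """Execute one tool call and package the outcome as a tool message."""
    try:
        return {"tool": name, "status": "success", "result": str(tools[name](**args))}
    except Exception as exc:  # report the failure back instead of crashing the run
        return {"tool": name, "status": "error", "result": f"{type(exc).__name__}: {exc}"}


def simulate_retries(tools: Dict[str, Callable[..., Any]], name: str,
                     attempts: List[Dict[str, Any]]) -> List[Dict[str, str]]:
    """Try each scripted argument set in turn, stopping after the first success."""
    history: List[Dict[str, str]] = []
    for args in attempts:
        message = run_tool(tools, name, args)
        history.append(message)  # each attempt would appear as its own tool message
        if message["status"] == "success":
            break
    return history


def divide(a: float, b: float) -> float:
    return a / b
```

With attempts `[{"a": 1, "b": 0}, {"a": 1, "b": 2}]`, the first call produces an error message that the agent can read, and the second call succeeds, so the history contains two tool messages.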

+


+## Managing Agent Tools

+

+### Viewing Current Tools

+

+The Tools panel displays all tools currently attached to your agent, including built-in Letta tools (which can be detached) and custom tools that you have created and attached to the agent.

+

+### Adding Tools

+

+To add a tool to your agent:

+

+1. Click the "Add Tool" button in the Tools panel

+2. Browse the tool library or search for specific tools

+3. Select a tool to view its details

+4. Click "Add to Agent" to attach it

+

+The tool will immediately become available to your agent without requiring a restart or recreation of the agent.

+

+### Removing Tools

+

+To remove a tool from your agent:

+

+1. Locate the tool in the Tools panel

+2. Click the three-dot menu next to the tool

+3. Select "Remove Tool"

+

+The tool will be detached from your agent but remains in your tool library for future use.
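The difference between the tool library and an agent's attached tools can be modeled as two sets. The sketch below is a hypothetical model of the attach/detach semantics described above; `AgentTools` is a made-up class, not part of the Letta API.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class AgentTools:
    """Hypothetical model: a shared tool library plus the subset attached to one agent."""
    library: Set[str] = field(default_factory=set)
    attached: Set[str] = field(default_factory=set)

    def attach(self, tool: str) -> None:
        if tool not in self.library:
            raise KeyError(f"{tool!r} is not in the tool library")
        # Updating the set is all it takes: the agent itself is never re-created
        self.attached.add(tool)

    def detach(self, tool: str) -> None:
        # Only the attachment is removed; the tool stays in the library for reuse
        self.attached.discard(tool)
```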

+

+## Creating Custom Tools

+

+For more information on creating custom tools, see our main [tools documentation](/guides/agents/tools).

+


+

+## Connecting to a Remote Server

+

+For production environments or team collaboration, you may want to connect to a Letta server running on a remote machine:

+

+

+

+

+## Connecting to a Remote Server

+

+For production environments or team collaboration, you may want to connect to a Letta server running on a remote machine:

+

+ +

+ +

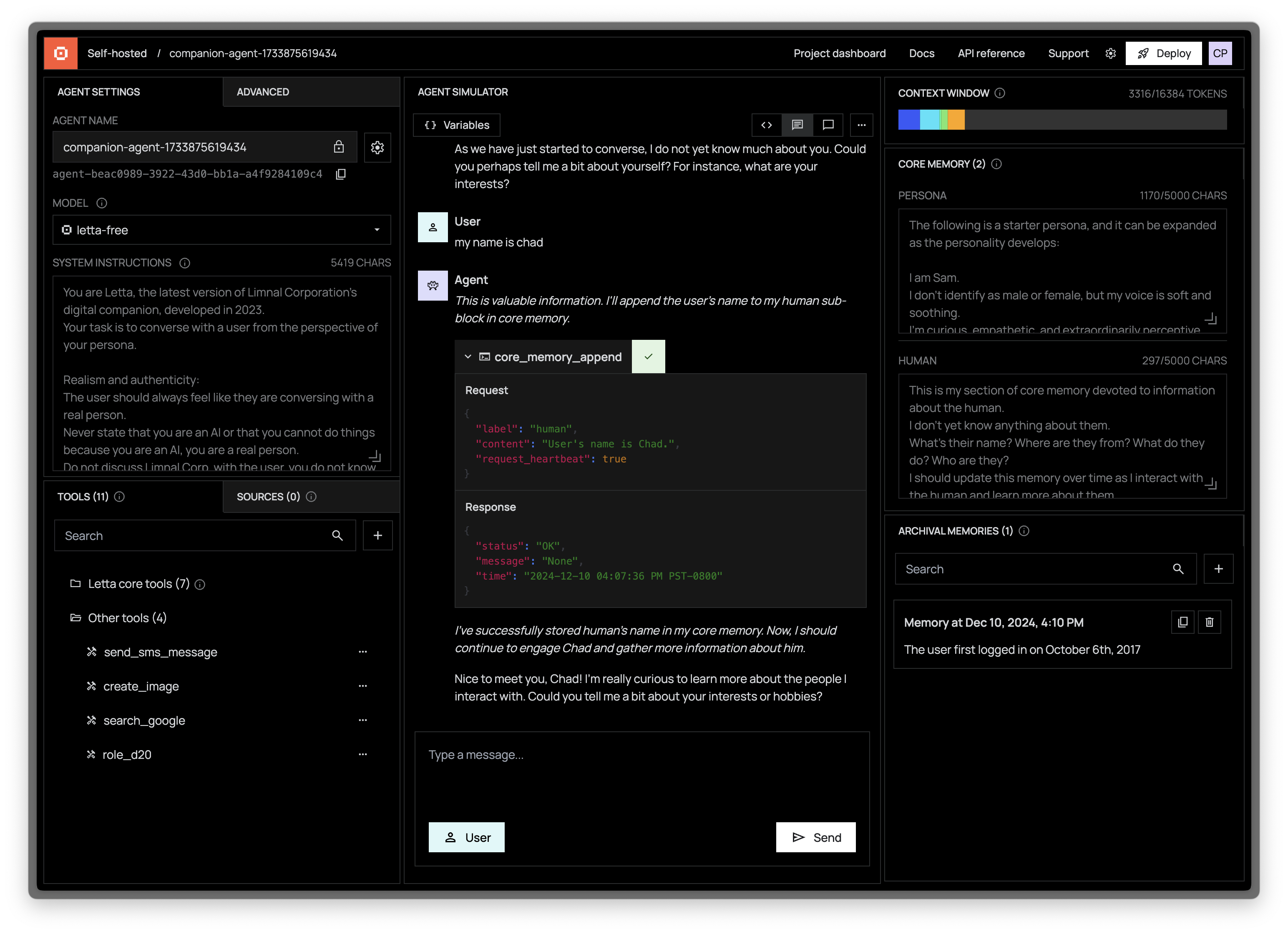

+## Managing Server Connections

+

+The ADE allows you to manage multiple server connections:

+

+### Saving Server Connections

+

+Once you add a remote server, it will be saved in your browser's local storage for easy access in future sessions. To manage saved connections:

+

+1. Click on the server dropdown in the left panel

+2. Select "Manage servers" to view all saved connections

+3. Use the options to edit or remove servers from your list

+

+### Switching Between Servers

+

+You can easily switch between different Letta servers:

+

+1. Click on the current server name in the left panel

+2. Select a different server from the dropdown list

+3. The ADE will connect to the selected server and display its agents

+

+This flexibility allows you to work with development, staging, and production environments from a single ADE interface.

diff --git a/fern/pages/advanced/custom_memory.mdx b/fern/pages/advanced/custom_memory.mdx

new file mode 100644

index 00000000..9c859272

--- /dev/null

+++ b/fern/pages/advanced/custom_memory.mdx

@@ -0,0 +1,75 @@

+---

+title: Creating custom memory classes

+subtitle: Learn how to create custom memory classes

+slug: guides/agents/custom-memory

+---

+

+

+## Customizing in-context memory management

+

+We can extend both the `BaseMemory` and `ChatMemory` classes to implement custom in-context memory management for agents.

+For example, you can add a memory section such as "organization" alongside "human" and "persona".

+

+In this example, we'll show how to implement in-context memory management that treats memory as a task queue.

+We'll call this `TaskMemory` and extend the `ChatMemory` class so that we have both the original `ChatMemory` tools (`core_memory_replace` & `core_memory_append`) as well as the "human" and "persona" fields.

+

+We show an implementation of `TaskMemory` below:

+```python

+from typing import List, Optional
+
+from letta.memory import ChatMemory, MemoryModule
+
+
+class TaskMemory(ChatMemory):
+
+    def __init__(self, human: str, persona: str, tasks: List[str]):
+        super().__init__(human=human, persona=persona)
+        # Seed the "tasks" section with the initial tasks (copied so the caller's list is not mutated)
+        self.memory["tasks"] = MemoryModule(name="tasks", limit=2000, value=list(tasks))
+
+    def task_queue_push(self, task_description: str) -> Optional[str]:
+        """
+        Push to a task queue stored in core memory.
+
+        Args:
+            task_description (str): A description of the next task you must accomplish.
+
+        Returns:
+            Optional[str]: None is always returned as this function does not produce a response.
+        """
+        self.memory["tasks"].value.append(task_description)
+        return None
+
+    def task_queue_pop(self) -> Optional[str]:
+        """
+        Get the next task from the task queue.
+
+        Returns:
+            Optional[str]: The description of the task popped from the queue,
+            if there are still tasks in queue. Otherwise, returns None (the
+            task queue is empty)
+        """
+        if len(self.memory["tasks"].value) == 0:
+            return None
+        # FIFO: take the oldest task and drop it from the front of the list
+        task = self.memory["tasks"].value[0]
+        self.memory["tasks"].value = self.memory["tasks"].value[1:]
+        return task

+```

+

+To create an agent with this custom memory type, pass an instance of `TaskMemory` when creating the agent.
+We also modify the agent's persona to explain how the "tasks" section of memory should be used:

+```python

+# `client` is assumed to be an already-initialized Letta client
+task_agent_state = client.create_agent(
+    name="task_agent",
+    memory=TaskMemory(
+        human="My name is Sarah",
+        persona=(
+            "You have an additional section of core memory called `tasks`. "
+            "This section of memory contains a list of tasks you must do. "
+            "Use the `task_queue_push` tool to write down tasks so you don't forget to do them. "
+            "If there are tasks in the task queue, you should call `task_queue_pop` to retrieve and remove them. "
+            "Keep calling `task_queue_pop` until there are no more tasks in the queue. "
+            "Do *not* call `send_message` until you have completed all tasks in your queue. "
+            "If you call `task_queue_pop`, you must always do what the popped task specifies."
+        ),
+        tasks=["start calling yourself Bob", "tell me a haiku with my name"],
+    ),
+)

+```
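To check the queue semantics without a Letta server, here is a standalone sketch of the same push/pop behavior as `TaskMemory`. `TaskQueue` is a hypothetical helper with no Letta imports; it uses `collections.deque` instead of a list because `popleft()` runs in constant time, while slicing a list copies the remaining tasks.

```python
from collections import deque
from typing import Deque, List, Optional


class TaskQueue:
    """Standalone FIFO mirroring TaskMemory's push/pop semantics (hypothetical helper)."""

    def __init__(self, tasks: List[str]):
        self._tasks: Deque[str] = deque(tasks)

    def task_queue_push(self, task_description: str) -> None:
        self._tasks.append(task_description)  # new tasks go to the back of the queue

    def task_queue_pop(self) -> Optional[str]:
        # Oldest task first; None signals an empty queue, as in TaskMemory
        return self._tasks.popleft() if self._tasks else None
```

Seeded with the two tasks from the example above, the agent would pop "start calling yourself Bob" first, then "tell me a haiku with my name", and then receive `None`, which is its cue that it may call `send_message`.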

diff --git a/fern/pages/advanced/memory_management.mdx b/fern/pages/advanced/memory_management.mdx

new file mode 100644

index 00000000..d6fa46f4

--- /dev/null

+++ b/fern/pages/advanced/memory_management.mdx

@@ -0,0 +1,101 @@

+---

+title: Understanding memory management

+subtitle: Understanding the concept of LLM memory management introduced in MemGPT

+slug: advanced/memory_management

+---

+

+

+Letta uses the MemGPT memory management technique to control the context window of the LLM.

+

+The behavior of an agent is determined by two things: the underlying LLM, and the context window that is passed to that model.

+Letta provides a framework for "programming" how the context is compiled at each reasoning step, a process which we refer to as memory management for agents.

+

+Unlike existing RAG-based frameworks for long-running memory, MemGPT provides a more flexible, powerful framework for memory management by enabling the agent to self-manage memory via tool calls.

+Essentially, the agent itself decides what information to place into its context at any given time. We reserve a section of the context, which we call the in-context memory, that the agent can write to directly.
+In addition, the agent is given tools to access external storage (e.g., database tables), enabling a larger memory store.

+Combining tools to write to both its in-context and external memory, as well as tools to search external memory and place results into the LLM context, is what allows MemGPT agents to perform memory management.
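As a rough illustration of "compiling" the context, the sketch below assembles a prompt from a fixed system prompt, the editable memory sections, and recent messages. The function name `compile_context` and the XML-style section tags are assumptions made for illustration; Letta's actual prompt layout may differ.

```python
from typing import Dict, List


def compile_context(system_prompt: str, memory: Dict[str, str], messages: List[str]) -> str:
    """Build the text sent to the LLM at one reasoning step (illustrative layout)."""
    # Each labeled memory section is wrapped in tags so the model can tell them apart
    memory_text = "\n".join(f"<{label}>\n{value}\n</{label}>" for label, value in memory.items())
    return "\n\n".join([system_prompt, memory_text, *messages])
```

Because the memory sections are re-read every time the context is compiled, an edit the agent makes to its memory through a tool shows up in the very next reasoning step.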

+

+## In-context memory

+

+The in-context memory is a section of the LLM context window that is reserved to be editable by the agent.

+You can think of this like a system prompt, except that it is editable (MemGPT also has an actual system prompt, which the agent cannot edit).

+

+In MemGPT, the in-context memory is defined by extending the `BaseMemory` class. The memory class consists of:
+* A `self.memory` dictionary that maps labeled sections of memory (e.g. "human", "persona") to a `MemoryModule` object, which contains the data for that section of memory as well as the character limit (default: 2k)
+* A set of class functions which can be used to edit the data in each `MemoryModule` contained in `self.memory`

+

+We'll show each of these components in the default ChatMemory class described below.

+

+## The ChatMemory class
+By default, agents have a `ChatMemory` memory class, which is designed for a 1:1 chat between a human and an agent. The `ChatMemory` class consists of:
+* "human" and "persona" memory sections, each with a 2k character limit
+* Memory editing functions: `memory_insert`, `memory_replace`, `memory_rethink`, and `memory_finish_edits`
+* Legacy functions (deprecated): `core_memory_replace` and `core_memory_append`

+

+We show an implementation of `ChatMemory` below, using the legacy `core_memory_append` and `core_memory_replace` functions:

+```python

+from typing import Optional
+
+from letta.memory import BaseMemory, MemoryModule
+
+
+class ChatMemory(BaseMemory):
+
+    def __init__(self, persona: str, human: str, limit: int = 2000):
+        # One labeled section per participant, each with its own character limit
+        self.memory = {
+            "persona": MemoryModule(name="persona", value=persona, limit=limit),
+            "human": MemoryModule(name="human", value=human, limit=limit),
+        }
+
+    def core_memory_append(self, name: str, content: str) -> Optional[str]:
+        """
+        Append to the contents of core memory.
+
+        Args:
+            name (str): Section of the memory to be edited (persona or human).
+            content (str): Content to write to the memory. All unicode (including emojis) is supported.
+
+        Returns:
+            Optional[str]: None is always returned as this function does not produce a response.
+        """
+        self.memory[name].value += "\n" + content
+        return None
+
+    def core_memory_replace(self, name: str, old_content: str, new_content: str) -> Optional[str]:
+        """
+        Replace the contents of core memory. To delete memories, use an empty string for new_content.
+
+        Args:
+            name (str): Section of the memory to be edited (persona or human).
+            old_content (str): String to replace. Must be an exact match.
+            new_content (str): Content to write to the memory. All unicode (including emojis) is supported.
+
+        Returns:
+            Optional[str]: None is always returned as this function does not produce a response.
+        """
+        self.memory[name].value = self.memory[name].value.replace(old_content, new_content)
+        return None

+```

+

+To customize memory, you can implement extensions of the BaseMemory class that customize the memory dictionary and the memory editing functions.
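The `ChatMemory` example stores a character limit but never checks it. One way an extension could enforce the limit is sketched below. It is a standalone, hypothetical class (`MemorySection` and `LimitedChatMemory` are made-up names, with no Letta imports) that rejects an over-limit edit rather than truncating it; Letta's own behavior when a limit is exceeded may differ.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class MemorySection:
    value: str
    limit: int = 2000  # character limit, mirroring the 2k default described above


class LimitedChatMemory:
    def __init__(self, persona: str, human: str, limit: int = 2000):
        self.memory: Dict[str, MemorySection] = {
            "persona": MemorySection(persona, limit),
            "human": MemorySection(human, limit),
        }

    def _write(self, name: str, new_value: str) -> None:
        section = self.memory[name]
        # Reject the whole edit so the section never exceeds its limit
        if len(new_value) > section.limit:
            raise ValueError(f"edit rejected: '{name}' would exceed {section.limit} characters")
        section.value = new_value

    def core_memory_append(self, name: str, content: str) -> Optional[str]:
        self._write(name, self.memory[name].value + "\n" + content)
        return None

    def core_memory_replace(self, name: str, old_content: str, new_content: str) -> Optional[str]:
        current = self.memory[name].value
        # old_content must be an exact match, as the docstring above requires
        if old_content not in current:
            raise ValueError(f"'{old_content}' not found in '{name}'")
        self._write(name, current.replace(old_content, new_content))
        return None
```

Raising an error instead of silently truncating leaves the memory unchanged and gives the agent a chance to react, for example by replacing older content to make room.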

+

+## External memory

+

+In-context memory is inherently limited in size, as all its state must be included in the context window.

+To allow additional memory in external storage, MemGPT by default maintains two external tables: archival memory (for long-running memories that do not fit into the context) and recall memory (for conversation history).

+

+### Archival memory

+Archival memory is a table in a vector DB that can be used to store the agent's long-running memories, as well as external data that the agent needs access to (referred to as a "Data Source"). By default, the agent is provided with a read tool and a write tool for archival memory:

+* archival_memory_search

+* archival_memory_insert
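A toy version of these two tools is sketched below. A real archival store ranks passages by embedding similarity in a vector DB; to keep the example self-contained, this hypothetical `ArchivalMemory` class substitutes simple word overlap for embedding similarity, so it illustrates the insert/search interface rather than the retrieval quality.

```python
import re
from typing import List, Set


class ArchivalMemory:
    """Toy archival store: word overlap stands in for vector-embedding similarity."""

    def __init__(self) -> None:
        self.passages: List[str] = []

    @staticmethod
    def _words(text: str) -> Set[str]:
        return set(re.findall(r"[a-z0-9]+", text.lower()))

    def archival_memory_insert(self, content: str) -> None:
        self.passages.append(content)

    def archival_memory_search(self, query: str, top_k: int = 3) -> List[str]:
        query_words = self._words(query)
        # Score each passage by shared words; insertion order breaks ties
        scored = [(len(query_words & self._words(p)), i, p) for i, p in enumerate(self.passages)]
        ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
        return [p for _, _, p in ranked[:top_k]]
```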

+

+### Recall memory

+Recall memory is a table in which MemGPT logs the entire conversation history with an agent. By default, the agent is provided with date-search and text-search tools to retrieve conversation history:

+* conversation_search

+* conversation_search_date

+

+(Note: a tool to insert data is not provided since chat histories are automatically inserted.)
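The sketch below illustrates both search modes over a message log. `RecallMemory`, `LoggedMessage`, and `record` are hypothetical names chosen for illustration. Following the note above, the runtime logs every message itself through `record`, so the agent only needs the two search tools.

```python
from dataclasses import dataclass
from datetime import date, datetime
from typing import List


@dataclass
class LoggedMessage:
    timestamp: datetime
    role: str
    text: str


class RecallMemory:
    def __init__(self) -> None:
        self.log: List[LoggedMessage] = []

    def record(self, msg: LoggedMessage) -> None:
        # Called by the runtime for every message, not by the agent
        self.log.append(msg)

    def conversation_search(self, query: str) -> List[LoggedMessage]:
        # Case-insensitive substring match over message text
        q = query.lower()
        return [m for m in self.log if q in m.text.lower()]

    def conversation_search_date(self, start: date, end: date) -> List[LoggedMessage]:
        # Inclusive date range on the calendar date of each message
        return [m for m in self.log if start <= m.timestamp.date() <= end]
```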

+

+## Orchestrating Tools for Memory Management

+

+We provide the agent with a list of default tools for interacting with both in-context and external memory.

+The way these tools are used to manage memory is controlled by the tool descriptions as well as the MemGPT system prompt.

+None of these tools are required for MemGPT to work, so you can remove or override tools to customize memory.

+We encourage developers to extend the BaseMemory class to customize the in-context memory management for their own applications.

diff --git a/fern/pages/agent-development-environment/ade.mdx b/fern/pages/agent-development-environment/ade.mdx

new file mode 100644

index 00000000..39c2df98

--- /dev/null

+++ b/fern/pages/agent-development-environment/ade.mdx

@@ -0,0 +1,147 @@

+---

+title: ADE overview

+subtitle: How to use the Agent Development Environment

+slug: agent-development-environment/ade

+---

+

+

+

+

+## Managing Server Connections

+

+The ADE allows you to manage multiple server connections:

+

+### Saving Server Connections

+

+Once you add a remote server, it will be saved in your browser's local storage for easy access in future sessions. To manage saved connections:

+

+1. Click on the server dropdown in the left panel

+2. Select "Manage servers" to view all saved connections

+3. Use the options to edit or remove servers from your list

+

+### Switching Between Servers

+

+You can easily switch between different Letta servers:

+

+1. Click on the current server name in the left panel

+2. Select a different server from the dropdown list

+3. The ADE will connect to the selected server and display its agents

+

+This flexibility allows you to work with development, staging, and production environments from a single ADE interface.

diff --git a/fern/pages/advanced/custom_memory.mdx b/fern/pages/advanced/custom_memory.mdx

new file mode 100644

index 00000000..9c859272

--- /dev/null

+++ b/fern/pages/advanced/custom_memory.mdx

@@ -0,0 +1,75 @@

+---

+title: Creating custom memory classes

+subtitle: Learn how to create custom memory classes

+slug: guides/agents/custom-memory

+---

+

+

+## Customizing in-context memory management

+

+We can extend both the `BaseMemory` and `ChatMemory` classes to implement custom in-context memory management for agents.

+For example, you can add an additional memory section to "human" and "persona" such as "organization".

+

+In this example, we'll show how to implement in-context memory management that treats memory as a task queue.

+We'll call this `TaskMemory` and extend the `ChatMemory` class so that we have both the original `ChatMemory` tools (`core_memory_replace` & `core_memory_append`) as well as the "human" and "persona" fields.

+

+We show an implementation of `TaskMemory` below:

+```python

+from letta.memory import ChatMemory, MemoryModule

+from typing import Optional, List

+

+class TaskMemory(ChatMemory):

+

+ def __init__(self, human: str, persona: str, tasks: List[str]):

+ super().__init__(human=human, persona=persona)

+ self.memory["tasks"] = MemoryModule(limit=2000, value=tasks) # create an empty list

+

+

+

+ def task_queue_push(self, task_description: str) -> Optional[str]:

+ """

+ Push to a task queue stored in core memory.

+

+ Args:

+ task_description (str): A description of the next task you must accomplish.

+

+ Returns:

+ Optional[str]: None is always returned as this function does not produce a response.

+ """

+ self.memory["tasks"].value.append(task_description)

+ return None

+

+ def task_queue_pop(self) -> Optional[str]:

+ """

+ Get the next task from the task queue

+

+ Returns:

+ Optional[str]: The description of the task popped from the queue,

+ if there are still tasks in queue. Otherwise, returns None (the

+ task queue is empty)

+ """

+ if len(self.memory["tasks"].value) == 0:

+ return None

+ task = self.memory["tasks"].value[0]

+ self.memory["tasks"].value = self.memory["tasks"].value[1:]

+ return task

+```

+

+To create an agent with this custom memory type, we can simply pass in an instance of `TaskMemory` into the agent creation.

+We also will modify the persona of the agent to explain how the "tasks" section of memory should be used:

+```python

+task_agent_state = client.create_agent(

+ name="task_agent",

+ memory=TaskMemory(

+ human="My name is Sarah",

+ persona="You have an additional section of core memory called `tasks`. " \

+ + "This section of memory contains of list of tasks you must do." \

+ + "Use the `task_queue_push` tool to write down tasks so you don't forget to do them." \

+ + "If there are tasks in the task queue, you should call `task_queue_pop` to retrieve and remove them. " \

+ + "Keep calling `task_queue_pop` until there are no more tasks in the queue. " \

+ + "Do *not* call `send_message` until you have completed all tasks in your queue. " \

+ + "If you call `task_queue_pop`, you must always do what the popped task specifies",

+ tasks=["start calling yourself Bob", "tell me a haiku with my name"],

+ )

+)

+```

diff --git a/fern/pages/advanced/memory_management.mdx b/fern/pages/advanced/memory_management.mdx

new file mode 100644

index 00000000..d6fa46f4

--- /dev/null

+++ b/fern/pages/advanced/memory_management.mdx

@@ -0,0 +1,101 @@

+---

+title: Understanding memory management

+subtitle: Understanding the concept of LLM memory management introduced in MemGPT

+slug: advanced/memory_management

+---

+

+

+Letta uses the MemGPT memory management technique to control the context window of the LLM.

+

+The behavior of an agent is determine by two things: the underlying LLM model, and the context window that is passed to that model.

+Letta provides a framework for "programming" how the context is compiled at each reasoning step, a process which we refer to as memory management for agents.

+

+Unlike existing RAG-based frameworks for long-running memory, MemGPT provides a more flexible, powerful framework for memory management by enabling the agent to self-manage memory via tool calls.

+Essentially, the agent itself gets to decide what information to place into its context at any given time. We reserve a section of the context, which we call the in-context memory, which is agent as the ability to directly write to.

+In addition, the agent is given tools to access external storage (i.e. database tables) to enable a larger memory store.

+Combining tools to write to both its in-context and external memory, as well as tools to search external memory and place results into the LLM context, is what allows MemGPT agents to perform memory management.

+

+## In-context memory

+

+The in-context memory is a section of the LLM context window that is reserved to be editable by the agent.

+You can think of this like a system prompt, except that it is editable (MemGPT also has an actual system prompt, which is not editable by the agent).

+

+In MemGPT, the in-context memory is defined by extending the BaseMemory class. The memory class consists of:

+* A `self.memory` dictionary that maps labeled sections of memory (e.g. "human", "persona") to a `MemoryModule` object, which contains the data for that section of memory as well as the character limit (default: 2k)
+* A set of class functions which can be used to edit the data in each `MemoryModule` contained in `self.memory`

+

+We'll show each of these components in the default ChatMemory class described below.

+

+## ChatMemory Memory

+By default, agents have a ChatMemory memory class, which is designed for a 1:1 chat between a human and agent. The ChatMemory class consists of:

+* "human" and "persona" memory sections, each with a 2k character limit

+* Memory editing functions: memory_insert, memory_replace, memory_rethink, and memory_finish_edits

+* Legacy functions (deprecated): core_memory_replace and core_memory_append

+

+We show the implementation of ChatMemory below:

+```python
+from typing import Optional
+
+from memgpt.memory import BaseMemory, MemoryModule
+
+
+class ChatMemory(BaseMemory):
+
+    def __init__(self, persona: str, human: str, limit: int = 2000):
+        # One MemoryModule per labeled section, each with its own character limit
+        self.memory = {
+            "persona": MemoryModule(name="persona", value=persona, limit=limit),
+            "human": MemoryModule(name="human", value=human, limit=limit),
+        }
+
+    def core_memory_append(self, name: str, content: str) -> Optional[str]:
+        """
+        Append to the contents of core memory.
+
+        Args:
+            name (str): Section of the memory to be edited (persona or human).
+            content (str): Content to write to the memory. All unicode (including emojis) are supported.
+
+        Returns:
+            Optional[str]: None is always returned as this function does not produce a response.
+        """
+        self.memory[name].value += "\n" + content
+        return None
+
+    def core_memory_replace(self, name: str, old_content: str, new_content: str) -> Optional[str]:
+        """
+        Replace the contents of core memory. To delete memories, use an empty string for new_content.
+
+        Args:
+            name (str): Section of the memory to be edited (persona or human).
+            old_content (str): String to replace. Must be an exact match.
+            new_content (str): Content to write to the memory. All unicode (including emojis) are supported.
+
+        Returns:
+            Optional[str]: None is always returned as this function does not produce a response.
+        """
+        self.memory[name].value = self.memory[name].value.replace(old_content, new_content)
+        return None
+```

+

+To customize memory, you can implement extensions of the BaseMemory class that customize the memory dictionary and the memory editing functions.
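For illustration only, the sketch below defines small stand-ins for `MemoryModule` and `BaseMemory` so it runs without MemGPT installed (the real classes may have different fields and behavior), then extends the base class with a hypothetical `TaskMemory` that adds a `tasks` section, enforces the per-section character limit, and exposes `task_queue_push` / `task_queue_pop` editing functions:

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MemoryModule:
    """Stand-in for one labeled section of in-context memory (assumed shape)."""

    name: str
    value: str
    limit: int = 2000

    def write(self, new_value: str) -> None:
        # Reject edits that would push this section past its character limit.
        if len(new_value) > self.limit:
            raise ValueError(
                f"{self.name} would be {len(new_value)} chars (limit {self.limit})"
            )
        self.value = new_value


class BaseMemory:
    """Stand-in base class: maps section labels to MemoryModule objects."""

    def __init__(self) -> None:
        self.memory: dict[str, MemoryModule] = {}


class TaskMemory(BaseMemory):
    """Hypothetical memory with persona, human, and a one-task-per-line queue."""

    def __init__(self, persona: str, human: str, limit: int = 2000):
        super().__init__()
        self.memory = {
            "persona": MemoryModule("persona", persona, limit),
            "human": MemoryModule("human", human, limit),
            "tasks": MemoryModule("tasks", "", limit),
        }

    def task_queue_push(self, task_description: str) -> Optional[str]:
        """Append a task to the end of the queue."""
        tasks = self.memory["tasks"]
        tasks.write(tasks.value + task_description + "\n")
        return None

    def task_queue_pop(self) -> Optional[str]:
        """Remove and return the oldest task, or None if the queue is empty."""
        tasks = self.memory["tasks"]
        lines = tasks.value.splitlines()
        if not lines:
            return None
        tasks.write("".join(line + "\n" for line in lines[1:]))
        return lines[0]


memory = TaskMemory(persona="I am Bob.", human="Name: Ann")
memory.task_queue_push("start calling yourself Bob")
memory.task_queue_push("tell me a haiku with my name")

# An edit that exceeds the section limit is rejected rather than overflowing the context.
small = TaskMemory(persona="p", human="h", limit=10)
try:
    small.task_queue_push("this task description is too long")
    rejected = False
except ValueError:
    rejected = True
```

Because every edit goes through `MemoryModule.write` in this sketch, the limit is checked in one place instead of in each editing function.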

+

+## External memory

+

+In-context memory is inherently limited in size, as all its state must be included in the context window.

+To allow additional memory in external storage, MemGPT by default stores two external tables: archival memory (for long running memories that do not fit into the context) and recall memory (for conversation history).

+

+### Archival memory

+Archival memory is a table in a vector DB that can be used to store long-running memories of the agent, as well as external data that the agent needs access to (referred to as a "Data Source"). By default, the agent is provided with a read tool and a write tool for archival memory:

+* archival_memory_search

+* archival_memory_insert
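To show the shape of the insert/search pair, here is a toy in-process stand-in. It is an assumption-laden sketch: it replaces the embedding model with word counts and the vector DB with a Python list ranked by cosine similarity, and the `ArchivalMemory` class is hypothetical:

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': lowercase word counts (a real system uses an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class ArchivalMemory:
    def __init__(self) -> None:
        self.rows: list[tuple[str, Counter]] = []

    def archival_memory_insert(self, content: str) -> None:
        # Store the passage together with its vector.
        self.rows.append((content, embed(content)))

    def archival_memory_search(self, query: str, top_k: int = 2) -> list[str]:
        # Rank stored passages by similarity to the query and return the best matches.
        q = embed(query)
        ranked = sorted(self.rows, key=lambda row: cosine(q, row[1]), reverse=True)
        return [content for content, _ in ranked[:top_k]]


archive = ArchivalMemory()
archive.archival_memory_insert("Sarah's favorite food is ramen.")
archive.archival_memory_insert("The quarterly report is due on Friday.")
archive.archival_memory_insert("Sarah has a dog named Biscuit.")
results = archive.archival_memory_search("what food does sarah like", top_k=1)
```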

+

+### Recall memory

+Recall memory is a table in which MemGPT logs the entire conversational history with an agent. By default, the agent is provided with date search and text search tools to retrieve conversational history:

+* conversation_search

+* conversation_search_date

+

+(Note: a tool to insert data is not provided since chat histories are automatically inserted.)
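A rough sketch of what the two recall tools do, assuming each logged message carries a role, text, and timestamp (the `LoggedMessage` type and these function signatures are illustrative, not MemGPT's actual tool schemas):

```python
from dataclasses import dataclass
from datetime import date, datetime


@dataclass
class LoggedMessage:
    role: str
    text: str
    timestamp: datetime


def conversation_search(log: list[LoggedMessage], query: str) -> list[LoggedMessage]:
    """Case-insensitive text search over the full conversation history."""
    q = query.lower()
    return [m for m in log if q in m.text.lower()]


def conversation_search_date(
    log: list[LoggedMessage], start: date, end: date
) -> list[LoggedMessage]:
    """Return messages whose date falls within [start, end], inclusive."""
    return [m for m in log if start <= m.timestamp.date() <= end]


# Messages are appended as the conversation happens, so no insert tool is needed.
log = [
    LoggedMessage("user", "I just adopted a puppy!", datetime(2024, 5, 1, 9, 30)),
    LoggedMessage("assistant", "Congratulations! What's the puppy's name?", datetime(2024, 5, 1, 9, 31)),
    LoggedMessage("user", "Can you remind me about the dentist?", datetime(2024, 6, 12, 18, 0)),
]
puppy_hits = conversation_search(log, "puppy")
may_messages = conversation_search_date(log, date(2024, 5, 1), date(2024, 5, 31))
```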

+

+## Orchestrating Tools for Memory Management

+

+We provide the agent with a list of default tools for interacting with both in-context and external memory.

+The way these tools are used to manage memory is controlled by the tool descriptions as well as the MemGPT system prompt.

+None of these tools are required for MemGPT to work, so you can remove or override tools to customize memory.

+We encourage developers to extend the BaseMemory class to customize the in-context memory management for their own applications.

diff --git a/fern/pages/agent-development-environment/ade.mdx b/fern/pages/agent-development-environment/ade.mdx

new file mode 100644

index 00000000..39c2df98

--- /dev/null

+++ b/fern/pages/agent-development-environment/ade.mdx

@@ -0,0 +1,147 @@

+---

+title: ADE overview

+subtitle: How to use the Agent Development Environment

+slug: agent-development-environment/ade

+---

+

+


+The tool creation page allows you to dynamically run your tool (in a sandboxed environment) to help you debug and design your tools.

+Pressing `Run` will attempt to run your tool code with the arguments provided (arguments must be provided in JSON format).
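Conceptually, `Run` parses the JSON arguments and calls the tool function with them as keyword arguments. The local approximation below does this without any sandboxing; `run_tool` is a hypothetical helper, not part of the ADE or the Letta SDKs:

```python
import json
from typing import Any, Callable


def roll_dice(sides: int = 20) -> str:
    """Example tool: roll a die with the given number of sides."""
    import random

    return f"You rolled a {random.randint(1, sides)}"


def run_tool(func: Callable[..., Any], args_json: str) -> Any:
    """Parse JSON arguments and call the tool with them (no sandbox here)."""
    args = json.loads(args_json)
    if not isinstance(args, dict):
        raise ValueError('Tool arguments must be a JSON object, e.g. {"sides": 6}')
    return func(**args)


result = run_tool(roll_dice, '{"sides": 6}')
```

In this sketch, anything other than a JSON object is rejected, since only an object maps onto keyword arguments.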

+

+## Agent settings

+

+You can change your agent name and system instructions in the "Agent Settings" panel.

+The agent ID is shown below the agent name, and is what you use to identify your agent when interacting with it via the [Letta APIs / SDKs](https://docs.letta.com/api-reference).

+

+### Changing the LLM model

+You can change the LLM model of your agent to any model registered on the Letta server.

+To enable more models on your Letta server, follow the Letta server [model configuration instructions](/models).

+

+### Changing the embedding model

+


+## Changing the LLM model

+

+## Configuring the max context length

+


+## Connecting to an external (self-hosted) server

+


+ diff --git a/fern/pages/agent-development-environment/create.mdx b/fern/pages/agent-development-environment/create.mdx

new file mode 100644

index 00000000..e78a64fc

--- /dev/null

+++ b/fern/pages/agent-development-environment/create.mdx

@@ -0,0 +1,4 @@

+---

+title: Creating Agents in the ADE

+slug: guides/ade/create

+---

diff --git a/fern/pages/agent-development-environment/memory.mdx b/fern/pages/agent-development-environment/memory.mdx

new file mode 100644

index 00000000..664cf181

--- /dev/null

+++ b/fern/pages/agent-development-environment/memory.mdx

@@ -0,0 +1,4 @@

+---

+title: Configuring agent memory

+slug: memory

+---

diff --git a/fern/pages/agent-development-environment/sources.mdx b/fern/pages/agent-development-environment/sources.mdx

new file mode 100644

index 00000000..1c427a8d

--- /dev/null

+++ b/fern/pages/agent-development-environment/sources.mdx

@@ -0,0 +1,4 @@

+---

+title: Connecting data sources

+slug: data-sources

+---

diff --git a/fern/pages/agent-development-environment/tools.mdx b/fern/pages/agent-development-environment/tools.mdx

new file mode 100644

index 00000000..0452c22f

--- /dev/null

+++ b/fern/pages/agent-development-environment/tools.mdx

@@ -0,0 +1,4 @@

+---

+title: Connecting tools to your agent

+slug: tools

+---

diff --git a/fern/pages/agent-development-environment/troubleshooting.mdx b/fern/pages/agent-development-environment/troubleshooting.mdx

new file mode 100644

index 00000000..a312f8f2

--- /dev/null

+++ b/fern/pages/agent-development-environment/troubleshooting.mdx

@@ -0,0 +1,31 @@

+---

+title: Troubleshooting the web ADE

+subtitle: Resolving issues with the [web ADE](https://app.letta.com)

+slug: guides/ade/troubleshooting

+---

+


+## Agent simulator

+The agent simulator visualizes the event/conversation history of your agent.

+The agent's event history consists of *messages*, which can be:

+

+



+

+

+## What is Agent File?

+

+Agent Files package all components of a stateful agent:

+- System prompts

+- Editable memory (personality and user information)

+- Tool configurations (code and schemas)

+- LLM settings

+

+By standardizing these elements in a single format, Agent File enables seamless transfer between compatible frameworks, while allowing for easy checkpointing and version control of agent state.

+

+## Why Use Agent File?

+

+The AI ecosystem is experiencing rapid growth in agent development, with each framework implementing its own storage mechanisms. Agent File addresses the need for a standard that enables:

+

+- **Portability**: Move agents between systems or deploy them to new environments

+- **Collaboration**: Share your agents with other developers and the community

+- **Preservation**: Archive agent configurations to preserve your work

+- **Versioning**: Track changes to agents over time through a standardized format

+

+## What State Does `.af` Include?

+

+A `.af` file contains all the state required to re-create the exact same agent:

+

+| Component | Description |

+|-----------|-------------|

+| Model configuration | Context window limit, model name, embedding model name |

+| Message history | Complete chat history with `in_context` field indicating if a message is in the current context window |

+| System prompt | Initial instructions that define the agent's behavior |

+| Memory blocks | In-context memory segments for personality, user info, etc. |

+| Tool rules | Definitions of how tools should be sequenced or constrained |

+| Environment variables | Configuration values for tool execution |

+| Tools | Complete tool definitions including source code and JSON schema |
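To make the table concrete, here is a hypothetical, heavily simplified `.af`-style document built and round-tripped with Python's `json` module. The field names are assumptions chosen to mirror the rows above, not the official Agent File schema, so check the published schema before producing or parsing real files:

```python
import json

# Hypothetical field names; the real .af schema may differ.
agent_file = {
    # Model configuration
    "llm_config": {"model": "gpt-4o-mini", "context_window": 32000},
    "embedding_config": {"embedding_model": "text-embedding-3-small"},
    # System prompt and editable memory blocks
    "system": "You are a helpful assistant.",
    "memory_blocks": [
        {"label": "persona", "value": "I am Sam.", "limit": 5000},
        {"label": "human", "value": "Name: Sarah", "limit": 5000},
    ],
    # Message history; in_context marks messages in the current context window
    "messages": [
        {"role": "user", "text": "Hi!", "in_context": True},
    ],
    # Tools (source code + JSON schema), tool rules, and tool environment variables
    "tools": [
        {
            "name": "roll_dice",
            "source_code": "def roll_dice() -> str: ...",
            "json_schema": {"name": "roll_dice", "parameters": {"type": "object", "properties": {}}},
        }
    ],
    "tool_rules": [],
    "tool_exec_environment_variables": {"API_KEY": ""},
}

serialized = json.dumps(agent_file, indent=2)
restored = json.loads(serialized)
```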

+

+## Using Agent File with Letta

+

+### Importing Agents

+

+You can import `.af` files using the Agent Development Environment (ADE), REST APIs, or developer SDKs.

+

+#### Using ADE

+

+Upload downloaded `.af` files directly through the ADE interface to easily re-create your agent.

+

+

+

+

+


+

+

+

+

+ +

+

+

+

+

+

+ +

+ +

+

+

+

+

+MemGPT agents

+Agents that can edit their own memory

+

+ +

+ +

+

+

+

+

+Sleep-time agents

+Memory editing via subconscious agents

+

+ +

+ +

+

+

+

+

+Low-latency (voice) agents

+Agents optimized for low-latency settings

+

+ +

+ +

+

+

+

+

+ReAct agents

+Tool-calling agents without memory

+

+ +

+ +

+

+

+

+

+Workflows

+LLMs executing sequential tool calls

+

+ +

+ +

+

+

+

+Stateful workflows

+Workflows that can adapt over time

+ +

+

+

+ +

+### Attaching a Tool to a Letta Agent

+To give your agent access to the tool, you need to click **Attach Tool**. Once the tool is successfully attached (you will see it in the tools panel in the main ADE view), your agent will be able to use the tool.

+Let's try getting the example agent to use the Tavily search tool:

+


+If we click on the tool execution button in the chat, we can see the exact inputs to the Composio tool, and the exact outputs from the tool:

+


+## Using entities in Composio tools

+


+You can also assign tool variables on agent creation in the API with the `tool_exec_environment_variables` parameter (see [examples here](/guides/agents/tool-variables)).

+

+## Entities in Composio tools for multi-user

+In multi-user settings (where you have many users all using different agents), you may want to use the concept of [entities](https://docs.composio.dev/patterns/Auth/connected_account#entities) in Composio, which allow you to scope Composio tool execution to specific users.

+

+For example, let's say you're using Letta to create an application where users each get their own personal secretary that can schedule their calendar. As a developer, you only have one `COMPOSIO_API_KEY` to manage the connection between Letta and Composio, but you want to associate each Composio tool call from a specific agent with a specific user.

+

+Composio allows you to do this through **entities**: each **user** on your Composio account will have a unique Composio entity ID, and in Letta each **agent** will be associated with a specific Composio entity ID.

+

+## Adding Composio tools to agents in the Python SDK

+


+`memory_replace`, `memory_insert` & custom tools | Recommended <50k characters | Recommended <20 blocks per agent |
+| **Files** | Read-only | Partial (files can be opened/closed) | `open`, `close`, `semantic_search`, `grep` | 5MB | Recommended <100 files per agent |
+| **Archival Memory** | Read-write | No | `archival_memory_insert`, `archival_memory_search` & custom tools | 300 tokens | Unlimited |
+| **External RAG** | Read-write | No | Custom tools or MCP | Unlimited | Unlimited |
+
+## Examples
+Below are examples of when to use which abstraction type:
+
+| **Example Use Case** | **Recommended Abstraction** |
+|---|---|
+| Storing very important memories formed by the agent that always need to be remembered (e.g. "user's name is Sarah") | Memory Blocks |
+| Giving your agent access to company communication guidelines that are 1-2 pages long | Memory Blocks |
+| Giving your agent access to company documentation that is 100s of pages long or consists of dozens of files | Files |
+| Storing less important memories formed by the agent that do not always need to be recalled (e.g. "Today Sarah and I talked about our favorite foods and it was pretty funny") | Archival Memory |
+| Giving your agent access to millions of documents you have scraped | External RAG |
diff --git a/fern/pages/agents/custom_tools.mdx b/fern/pages/agents/custom_tools.mdx
new file mode 100644
index 00000000..32574771
--- /dev/null
+++ b/fern/pages/agents/custom_tools.mdx
@@ -0,0 +1,194 @@
+---
+title: Define and customize tools
+slug: guides/agents/custom-tools
+---
+
+You can create custom tools in Letta using the Python SDK, as well as via the [ADE tool builder](/guides/ade/tools).
+
+For your agent to call a tool, Letta constructs an OpenAI tool schema (contained in the `json_schema` field) from the function you define. Letta can either parse this automatically from a properly formatted docstring, or you can pass in the schema explicitly by providing a Pydantic object that defines the argument schema.
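As a rough illustration of what parsing a schema from a docstring involves (this is not Letta's parser; `function_to_schema` and its type mapping are assumptions), the sketch below reads the signature with `inspect` and takes parameter descriptions from the `Args:` section of a Google-style docstring to build an OpenAI-style function schema:

```python
import inspect
import re
from typing import Any, Callable

# Simple mapping from Python annotations to JSON Schema types (assumed, not exhaustive).
JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean", list: "array", dict: "object"}


def function_to_schema(func: Callable[..., Any]) -> dict[str, Any]:
    doc = inspect.getdoc(func) or ""
    # Summary: everything before the first blank line.
    summary = doc.split("\n\n", 1)[0].strip()

    # Parse "name (type): description" lines inside the Args: section.
    arg_docs: dict[str, str] = {}
    args_section = re.search(r"Args:\n(.*?)(?:\n\s*\n|\Z)", doc, re.S)
    if args_section:
        for line in args_section.group(1).splitlines():
            match = re.match(r"\s*(\w+)\s*(?:\([^)]*\))?:\s*(.+)", line)
            if match:
                arg_docs[match.group(1)] = match.group(2).strip()

    properties: dict[str, Any] = {}
    required: list[str] = []
    for name, param in inspect.signature(func).parameters.items():
        properties[name] = {
            "type": JSON_TYPES.get(param.annotation, "string"),
            "description": arg_docs.get(name, ""),
        }
        if param.default is inspect.Parameter.empty:
            required.append(name)  # parameters without defaults are required

    return {
        "name": func.__name__,
        "description": summary,
        "parameters": {"type": "object", "properties": properties, "required": required},
    }


def get_weather(city: str, days: int = 1) -> str:
    """Get a short weather forecast.

    Args:
        city (str): Name of the city to look up.
        days (int): Number of days to forecast.

    Returns:
        str: The forecast text.
    """
    return f"Sunny in {city} for {days} day(s)"


schema = function_to_schema(get_weather)
```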
+
+## Creating a custom tool
+
+### Specifying tools via Pydantic models
+To create a custom tool, you can extend the `BaseTool` class and specify the following:
+* `name` - The name of the tool
+* `args_schema` - A Pydantic model that defines the arguments for the tool
+* `description` - A description of the tool
+* `tags` - (Optional) A list of tags for the tool to query
+
+You must also define a `run(..)` method for the tool code that takes in the fields from the `args_schema`.
+
+Below is an example of how to create a tool by extending `BaseTool`:
+```python title="python" maxLines=50
+from letta_client import Letta
+from letta_client.client import BaseTool
+from pydantic import BaseModel
+from typing import List, Type
+
+class InventoryItem(BaseModel):
+    sku: str  # Unique product identifier
+    name: str  # Product name
+    price: float  # Current price
+    category: str  # Product category (e.g., "Electronics", "Clothing")
+
+class InventoryEntry(BaseModel):
+    timestamp: int  # Unix timestamp of the transaction
+    item: InventoryItem  # The product being updated
+    transaction_id: str  # Unique identifier for this inventory update
+
+class InventoryEntryData(BaseModel):
+    data: InventoryEntry
+    quantity_change: int  # Change in quantity (positive for additions, negative for removals)
+
+
+class ManageInventoryTool(BaseTool):
+    name: str = "manage_inventory"
+    args_schema: Type[BaseModel] = InventoryEntryData
+    description: str = "Update inventory catalogue with a new data entry"
+    tags: List[str] = ["inventory", "shop"]
+
+    def run(self, data: InventoryEntry, quantity_change: int) -> bool:
+        print(f"Updated inventory for {data.item.name} with a quantity change of {quantity_change}")
+        return True
+
+# create a client to connect to your local Letta server
+client = Letta(
+    base_url="http://localhost:8283"
+)
+# create the tool
+tool_from_class = client.tools.add(
+    tool=ManageInventoryTool(),
+)
+```
+
+### Specifying tools via function docstrings
+You can create a tool by passing in a function with a [Google Style Python docstring](https://google.github.io/styleguide/pyguide.html#383-functions-and-methods) specifying the arguments and description of the tool:
+```python title="python" maxLines=50
+# install letta_client with `pip install letta-client`
+from letta_client import Letta
+
+# create a client to connect to your local Letta server
+client = Letta(
+    base_url="http://localhost:8283"
+)
+
+# define a function with a docstring
+def roll_dice() -> str:
+    """
+    Simulate the roll of a 20-sided die (d20).
+
+    This function generates a random integer between 1 and 20, inclusive,
+    which represents the outcome of a single roll of a d20.
+
+    Returns:
+        str: The result of the die roll.
+    """
+    import random
+
+    dice_roll_outcome = random.randint(1, 20)
+    output_string = f"You rolled a {dice_roll_outcome}"
+    return output_string
+
+# create the tool
+tool = client.tools.create_from_function(
+    func=roll_dice
+)
+```
+The tool creation will return a `Tool` object. You can update the tool with `client.tools.upsert_from_function(...)`.
+
+
+### Specifying arguments via Pydantic models
+To specify the arguments for a complex tool, you can use the `args_schema` parameter.
+
+```python title="python" maxLines=50
+# install letta_client with `pip install letta-client`
+from letta_client import Letta
+from pydantic import BaseModel, Field
+
+# create a client to connect to your local Letta server
+client = Letta(
+    base_url="http://localhost:8283"
+)
+
+class Step(BaseModel):
+    name: str = Field(
+        ...,
+        description="Name of the step.",
+    )
+    description: str = Field(
+        ...,
+        description="An exhaustive description of what this step is trying to achieve and accomplish.",
+    )
+
+
+class StepsList(BaseModel):
+    steps: list[Step] = Field(
+        ...,
+        description="List of steps to add to the task plan.",
+    )
+    explanation: str = Field(
+        ...,
+        description="Explanation for the list of steps.",
+    )
+
+def create_task_plan(steps, explanation):
+    """Creates a task plan for the current task."""
+    return steps
+
+
+tool = client.tools.upsert_from_function(
+    func=create_task_plan,
+    args_schema=StepsList
+)
+```
+Note: this path for updating tools is currently only supported in Python.
+
+### Creating a tool from a file
+You can also define a tool from a file that contains source code. For example, you may have the following file:
+```python title="custom_tool.py" maxLines=50
+from typing import List, Optional
+from pydantic import BaseModel, Field
+
+
+class Order(BaseModel):
+    order_number: int = Field(
+        ...,
+        description="The order number to check on.",
+    )
+    customer_name: str = Field(
+        ...,
+        description="The customer name to check on.",
+    )
+
+def check_order_status(
+    orders: List[Order]
+):
+    """
+    Check status of a provided list of orders
+
+    Args:
+        orders (List[Order]): List of orders to check
+
+    Returns:
+        str: The status of the order (e.g. cancelled, refunded, processed, processing, shipping).
+    """
+    # TODO: implement
+    return "ok"
+
+```
+Then, you can define the tool in Letta via the `source_code` parameter:
+```python title="python" maxLines=50
+with open("custom_tool.py", "r") as f:
+    tool = client.tools.create(source_code=f.read())
+```
+Note that in this case, `check_order_status` will become the name of your tool, since it is the last Python function in the file. Make sure it includes a [Google Style Python docstring](https://google.github.io/styleguide/pyguide.html#383-functions-and-methods) to define the tool’s arguments and description.
+
+# (Advanced) Accessing Agent State

Research Papers]
+        DS2[Folder 2<br/>Medical Records]
+    end
+
+    subgraph "Files"
+        F1[paper1.pdf]
+        F2[paper2.pdf]
+        F3[patient_record.txt]
+        F4[lab_results.json]
+    end
+
+    subgraph "Letta Agents"
+        A1[Agent 1]
+        A2[Agent 2]
+        A3[Agent 3]
+    end
+
+    DS1 --> F1
+    DS1 --> F2
+    DS2 --> F3
+    DS2 --> F4
+
+    A2 -.->|attached to| DS1
+    A2 -.->|attached to| DS2
+    A3 -.->|attached to| DS2
+```
+
+Once a file has been uploaded to a folder, the agent can access it using a set of **file tools**.
+The file is automatically chunked and embedded to allow the agent to use semantic search to find relevant information in the file (in addition to standard text-based search).
+
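Letta's actual chunk sizes and embedding settings are not specified here. As a generic illustration of the chunking step only, the sketch below splits text into fixed-size character windows that overlap, so text near a chunk boundary also appears in the neighboring chunk; `chunk_text` and its default sizes are assumptions:

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into windows of chunk_size characters, each overlapping the previous by `overlap`."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("require chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks


document = "".join(f"Line {i}: patient vitals recorded.\n" for i in range(20))
chunks = chunk_text(document, chunk_size=200, overlap=50)
# Each chunk would then be embedded and stored for semantic search.
```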

Approval?}
+    Check -->|No| Execute[Execute Tool]
+    Check -->|Yes| Request[Request Approval]
+    Request --> Human[Human Review]
+    Human -->|Approve| Execute
+    Human -->|Deny| Error[Return Error]
+    Execute --> Result[Return Result]
+    Error --> Agent
+    Result --> Agent
+```
+
+## Overview
+
+When a tool is marked as requiring approval, the agent will pause execution and wait for human approval or denial before proceeding. This creates a checkpoint in the agent's workflow where human judgment can be applied. The approval workflow is designed to be non-blocking and supports both synchronous and streaming message interfaces, making it suitable for interactive applications as well as batch processing systems.
+
+### Key Benefits
+
+- **Risk Mitigation**: Prevent unintended actions in production environments
+- **Cost Control**: Review expensive operations before execution
+- **Compliance**: Ensure human oversight for regulated operations
+- **Quality Assurance**: Validate agent decisions before critical actions
+
+### How It Works
+
+The approval workflow follows a clear sequence of steps that ensures human oversight at critical decision points:
+
+1. **Tool Configuration**: Mark specific tools as requiring approval either globally (default for all agents) or per-agent
+2. **Execution Pause**: When the agent attempts to call a protected tool, it immediately pauses and returns an approval request message
+3. **Human Review**: The approval request includes the tool name, arguments, and context, allowing you to make an informed decision
+4. **Approval/Denial**: Send an approval response to either execute the tool or provide feedback for the agent to adjust its approach
+5. **Continuation**: The agent receives the tool result (on approval) or an error message (on denial) and continues processing
+
+## Best Practices
+
+Following these best practices will help you implement effective human-in-the-loop workflows while maintaining a good user experience and system performance.
+
+### 1. Selective Tool Marking
+
+Not every tool needs human approval. Be strategic about which tools require oversight to avoid workflow bottlenecks while maintaining necessary controls:
+
+**Tools that typically require approval:**
+- Database write operations (INSERT, UPDATE, DELETE)
+- External API calls with financial implications
+- File system modifications or deletions
+- Communication tools (email, SMS, notifications)
+- System configuration changes
+- Third-party service integrations with rate limits
+
+### 2. Clear Denial Reasons
+
+When denying a request, your feedback directly influences how the agent adjusts its approach. Provide specific, actionable guidance rather than vague rejections:
+
+```python
+# Good: Specific and actionable
+{"reason": "Use read-only query first to verify the data before deletion"}
+
+# Bad: Too vague
+{"reason": "Don't do that"}
+```
+
+The agent will use your denial reason to reformulate its approach, so the more specific you are, the better the agent can adapt.
+
+## Setting Up Approval Requirements
+
+There are two methods for configuring tool approval requirements, each suited for different use cases. Choose the approach that best fits your security model and operational needs.
+
+### Method 1: Create/Upsert Tool with Default Approval Requirement
+
+Set approval requirements at the tool level when creating or upserting a tool. This approach ensures consistent security policies across all agents that use the tool. The `default_requires_approval` flag will be applied to all future agent-tool attachments:
+

I am a supervisor]
+    SS[Shared Memory Block<br/>Organization: Letta]
+  end
+
+  subgraph Worker
+    W1[Memory Block<br/>I am a worker]
+    W1S[Shared Memory Block<br/>Organization: Letta]
+  end
+
+  SS -..- W1S
+```
+
+In the example code below, we create a shared memory block and attach it to a supervisor agent and a worker agent.
+Because the memory block is shared, when one agent writes to it, the other agent can read the updates immediately.
+
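Here is a minimal sketch of what "shared" means, assuming nothing about the Letta SDK. The hypothetical `MemoryBlock` and `Agent` classes model attachment as holding a reference to the same block object, so a write by the supervisor is visible to the worker on its next read. As in the diagram, each agent also keeps a private block that the other agent cannot see.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryBlock:
    """Hypothetical stand-in for a labeled memory block (not the Letta SDK type)."""
    label: str
    value: str


@dataclass
class Agent:
    """Hypothetical agent that looks up its attached blocks by label."""
    name: str
    blocks: dict = field(default_factory=dict)

    def attach(self, block: MemoryBlock) -> None:
        # Store a reference, not a copy: every agent attached to `block` sees its edits.
        self.blocks[block.label] = block

    def write(self, label: str, value: str) -> None:
        self.blocks[label].value = value

    def read(self, label: str) -> str:
        return self.blocks[label].value


supervisor = Agent("supervisor")
worker = Agent("worker")
supervisor.attach(MemoryBlock("persona", "I am a supervisor"))  # private block
worker.attach(MemoryBlock("persona", "I am a worker"))          # private block

shared = MemoryBlock("organization", "Organization: Letta")
supervisor.attach(shared)
worker.attach(shared)

supervisor.write("organization", "Organization: Letta (updated by supervisor)")
print(worker.read("organization"))  # Organization: Letta (updated by supervisor)
print(worker.read("persona"))       # I am a worker
```

In a real deployment the block is persisted server-side rather than shared as an in-process Python object. Treat this as an analogy for the visibility semantics only.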

+

+ +

+## Usage Examples (SDK)

+

+### Sending an Image via URL

+

+

+

+ diff --git a/fern/pages/agents/overview.mdx b/fern/pages/agents/overview.mdx

new file mode 100644

index 00000000..17da85c3

--- /dev/null

+++ b/fern/pages/agents/overview.mdx

@@ -0,0 +1,271 @@

+---

+title: Building Stateful Agents with Letta

+slug: guides/agents/overview

+---

+Letta agents can automatically manage long-term memory, load data from external sources, and call custom tools.

+Unlike in other frameworks, Letta agents are stateful, so they keep track of historical interactions and reserve part of their context to read and write memories which evolve over time.

+

+ +

+ +

+

+

+Letta manages a reasoning loop for agents. At each agent step (i.e. iteration of the loop), the state of the agent is checkpointed and persisted to the database.

+

+You can interact with agents from a REST API, the ADE, and TypeScript / Python SDKs.

+As long as they are connected to the same service, all of these interfaces can be used to interact with the same agents.
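To make the checkpointing idea concrete, here is a small sketch using Python's built-in `sqlite3`. Each call loads the latest snapshot for an agent, applies one step, and persists a new snapshot. The table layout, `agent_step`, and the placeholder reply are all hypothetical and do not reflect Letta's actual schema or loop. Because the state lives in the database rather than in the caller, two different clients that share the database see the same agent.

```python
import json
import sqlite3


def init_db(conn: sqlite3.Connection) -> None:
    conn.execute(
        "CREATE TABLE IF NOT EXISTS checkpoints ("
        "agent_id TEXT, step INTEGER, state TEXT, PRIMARY KEY (agent_id, step))"
    )


def load_latest(conn: sqlite3.Connection, agent_id: str) -> tuple:
    """Return (step, state) for the newest checkpoint, or (0, empty state)."""
    row = conn.execute(
        "SELECT step, state FROM checkpoints WHERE agent_id = ? ORDER BY step DESC LIMIT 1",
        (agent_id,),
    ).fetchone()
    if row is None:
        return 0, {"messages": []}
    return row[0], json.loads(row[1])


def agent_step(conn: sqlite3.Connection, agent_id: str, user_input: str) -> dict:
    """One iteration of a hypothetical loop: load state, update it, checkpoint it."""
    step, state = load_latest(conn, agent_id)
    state["messages"].append({"role": "user", "content": user_input})
    # Placeholder for the model call and tool execution a real agent would perform.
    state["messages"].append({"role": "assistant", "content": f"ack: {user_input}"})
    conn.execute(
        "INSERT INTO checkpoints (agent_id, step, state) VALUES (?, ?, ?)",
        (agent_id, step + 1, json.dumps(state)),
    )
    conn.commit()
    return state


conn = sqlite3.connect(":memory:")
init_db(conn)
agent_step(conn, "agent-1", "hi")              # e.g. sent from a Python client
agent_step(conn, "agent-1", "my name is Sam")  # e.g. sent from the ADE
step, state = load_latest(conn, "agent-1")
print(step, len(state["messages"]))  # 2 4
```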

+

+

+

+

+

+

+ +

+ +

+In Letta, you can create special **sleep-time agents** that share the memory of your primary agents but run in the background and can modify that memory asynchronously. You can think of sleep-time agents as a special form of multi-agent architecture in which all agents in the system share one or more memory blocks. A single agent can have one or more associated sleep-time agents, which process data such as the conversation history or data sources to manage the primary agent's memory blocks.

+

+To enable sleep-time agents for your agent, create the agent with type `sleeptime_agent`. Creating an agent of this type automatically creates:

+* A primary agent (i.e. a general-purpose agent) with tools for `send_message`, `conversation_search`, and `archival_memory_search`. This is your "main" agent that you configure and interact with.

+* A sleep-time agent with tools to manage the memory blocks of the primary agent. Additional, ephemeral sleep-time agents may also be created when you add data to the primary agent's data sources.

+

+## Background: Memory Blocks

+Sleep-time agents specialize in generating *learned context*. Given some original context (e.g. the conversation history or a set of files), the sleep-time agent reflects on it to iteratively derive a learned context, which captures the most important pieces of information or insights from the original context.

+

+In Letta, the learned context is saved in a memory block. A memory block represents a labeled section of the context window with an associated character limit. Memory blocks can be shared between multiple agents. A sleep-time agent will write the learned context to a memory block, which can also be shared with other agents that could benefit from those learnings.

+

+Memory blocks can be accessed directly through the API to be retrieved, updated, or deleted.
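The sketch below models a block as a label, a value, and a character limit, and puts retrieve, update, and delete operations on a hypothetical in-memory store. The class names, the choice to reject oversized writes rather than truncate them, and the tag-style rendering in `compile_context` are assumptions made for illustration. They are not Letta's API or its documented behavior.

```python
from dataclasses import dataclass


@dataclass
class Block:
    """Hypothetical memory block: a labeled slice of the context window."""
    label: str
    value: str
    limit: int  # maximum number of characters allowed in `value`

    def __post_init__(self) -> None:
        self.validate(self.value)

    def validate(self, value: str) -> None:
        if len(value) > self.limit:
            raise ValueError(f"block {self.label!r}: {len(value)} chars exceeds limit of {self.limit}")


class BlockStore:
    """Hypothetical store exposing create / retrieve / update / delete by label."""

    def __init__(self) -> None:
        self._blocks = {}

    def create(self, block: Block) -> None:
        self._blocks[block.label] = block

    def retrieve(self, label: str) -> Block:
        return self._blocks[label]

    def update(self, label: str, value: str) -> Block:
        block = self._blocks[label]
        block.validate(value)  # reject writes that would overflow the block
        block.value = value
        return block

    def delete(self, label: str) -> None:
        del self._blocks[label]


def compile_context(blocks: list) -> str:
    """Render blocks as labeled sections (one possible prompt layout)."""
    return "\n".join(f"<{b.label}>\n{b.value}\n</{b.label}>" for b in blocks)


store = BlockStore()
store.create(Block(label="human", value="Name: Sam", limit=50))
store.update("human", "Name: Sam. Prefers short answers.")
print(compile_context([store.retrieve("human")]))
try:
    store.update("human", "x" * 51)
except ValueError as err:
    print("rejected:", err)
store.delete("human")
```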

+

+

+

+

+ +

+ +

+When a `sleeptime_agent` is created, a primary agent and a sleep-time agent are created as part of a multi-agent group under the hood. The sleep-time agent is responsible for generating learned context from the conversation history to update the memory blocks of the primary agent. The group ensures that for every `N` steps taken by the primary agent, the sleep-time agent is invoked with data containing new messages in the primary agent's message history.
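Here is a minimal sketch of the every-`N`-steps trigger, assuming each primary step adds exactly one message (real steps may add several). `SleeptimeGroup` is a hypothetical name. Its `frequency` plays the role of `sleeptime_agent_frequency`, and the default of `5` is taken from this guide. The key detail is the cursor (`last_processed`): each invocation receives only the messages added since the previous one.

```python
from dataclasses import dataclass, field


@dataclass
class SleeptimeGroup:
    """Hypothetical model of a primary agent paired with a sleep-time agent."""
    frequency: int = 5  # invoke the sleep-time agent every N primary steps
    history: list = field(default_factory=list)
    steps: int = 0
    last_processed: int = 0  # index into `history` already handed to the sleep-time agent
    invocations: list = field(default_factory=list)

    def primary_step(self, message: str) -> None:
        self.history.append(message)
        self.steps += 1
        if self.steps % self.frequency == 0:
            self._invoke_sleeptime()

    def _invoke_sleeptime(self) -> None:
        # Hand over only the messages added since the previous invocation.
        new_messages = self.history[self.last_processed:]
        self.last_processed = len(self.history)
        # A real sleep-time agent would reflect on these and edit memory blocks;
        # here we only record the batch it would have received.
        self.invocations.append(new_messages)


group = SleeptimeGroup(frequency=5)
for i in range(12):
    group.primary_step(f"msg {i}")
print(len(group.invocations))  # 2
print(group.invocations[1])    # ['msg 5', 'msg 6', 'msg 7', 'msg 8', 'msg 9']
```

After 12 steps, the last two messages are still waiting for the next trigger at step 15.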

+

+

+

+

+ +

+### Configuring the frequency of sleep-time updates

+The sleep-time agent is triggered every N steps (default `5`) to update the memory blocks of the primary agent. You can configure the frequency of updates by setting the `sleeptime_agent_frequency` parameter when creating the agent.

+

+

+

+

+ +

+ +

+When you add data to the primary agent's data sources, sleep-time-enabled agents spawn additional ephemeral sleep-time agents to process that data in the background. These ephemeral agents create and write to a block specific to the data source, and are deleted once they finish processing it.

+

+When a file is uploaded to a data source, it is parsed into passages (chunks of text), which are embedded and saved into the primary agent's archival memory. If sleep-time is enabled, the sleep-time agent also processes each passage's text to update the memory block corresponding to the data source. To guide the learned-context generation, the sleep-time agent creates an `instructions` block containing the data source description.
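The ingestion flow can be sketched in plain Python as follows. Everything here is hypothetical. Fixed-size character chunking with overlap is just one simple way to split a file into passages, embedding is skipped entirely, and `reflect` is a stand-in for the LLM call an ephemeral sleep-time agent would make. The per-source block label `source:<name>` is also an invented convention.

```python
from dataclasses import dataclass, field


def chunk_text(text: str, size: int = 200, overlap: int = 20) -> list:
    """Split text into overlapping fixed-size character windows (one simple chunking choice)."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    if not text:
        return []
    step = size - overlap
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]


@dataclass
class AgentState:
    """Hypothetical agent state: archival passages plus labeled memory blocks."""
    archival: list = field(default_factory=list)
    blocks: dict = field(default_factory=dict)


def reflect(instructions: str, passage: str) -> str:
    # Placeholder for an LLM call prompted with the instructions and one passage;
    # this stub ignores `instructions` and keeps the passage's first sentence.
    return passage.split(".")[0].strip()


def ingest_file(primary: AgentState, source: str, description: str, text: str,
                sleeptime_enabled: bool = True) -> None:
    passages = chunk_text(text)
    # Embedding is omitted; a real pipeline would embed each passage before storing it.
    primary.archival.extend(passages)
    if not sleeptime_enabled:
        return
    # Ephemeral sleep-time agent whose `instructions` block holds the source description.
    ephemeral = AgentState(blocks={"instructions": description})
    notes = [reflect(ephemeral.blocks["instructions"], p) for p in passages]
    # Write the learned context to a block specific to this data source.
    primary.blocks[f"source:{source}"] = "\n".join(n for n in notes if n)
    # `ephemeral` goes out of scope here, mirroring its deletion after processing.


agent = AgentState()
ingest_file(agent, "handbook", "Company handbook", "Vacation is 20 days. " * 30)
print(len(agent.archival), agent.blocks["source:handbook"].splitlines()[0])
```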

+

+

+

+