diff --git a/.gitattributes b/.gitattributes
new file mode 100644
index 00000000..108cb3b3
--- /dev/null
+++ b/.gitattributes
@@ -0,0 +1,20 @@
+# Set the default behavior, in case people don't have core.autocrlf set.
+* text=auto
+
+# Explicitly declare text files you want to always be normalized and converted
+# to LF on checkout.
+*.py text eol=lf
+*.txt text eol=lf
+*.md text eol=lf
+*.json text eol=lf
+*.yml text eol=lf
+*.yaml text eol=lf
+
+# Declare files that will always have CRLF line endings on checkout.
+# (Only if you have specific Windows-only files)
+*.bat text eol=crlf
+
+# Denote all files that are truly binary and should not be modified.
+*.png binary
+*.jpg binary
+*.gif binary
diff --git a/.gitignore b/.gitignore

index 26d77194..92123a56 100644

--- a/.gitignore

+++ b/.gitignore

@@ -1,1019 +1,1019 @@

-# MemGPT config files

-configs/

-

-# Below are generated by gitignor.io (toptal)

-# Created by https://www.toptal.com/developers/gitignore/api/vim,linux,macos,pydev,python,eclipse,pycharm,windows,netbeans,pycharm+all,pycharm+iml,visualstudio,jupyternotebooks,visualstudiocode,xcode,xcodeinjection

-# Edit at https://www.toptal.com/developers/gitignore?templates=vim,linux,macos,pydev,python,eclipse,pycharm,windows,netbeans,pycharm+all,pycharm+iml,visualstudio,jupyternotebooks,visualstudiocode,xcode,xcodeinjection

-

-### Eclipse ###

-.metadata

-bin/

-tmp/

-*.tmp

-*.bak

-*.swp

-*~.nib

-local.properties

-.settings/

-.loadpath

-.recommenders

-

-# External tool builders

-.externalToolBuilders/

-

-# Locally stored "Eclipse launch configurations"

-*.launch

-

-# PyDev specific (Python IDE for Eclipse)

-*.pydevproject

-

-# CDT-specific (C/C++ Development Tooling)

-.cproject

-

-# CDT- autotools

-.autotools

-

-# Java annotation processor (APT)

-.factorypath

-

-# PDT-specific (PHP Development Tools)

-.buildpath

-

-# sbteclipse plugin

-.target

-

-# Tern plugin

-.tern-project

-

-# TeXlipse plugin

-.texlipse

-

-# STS (Spring Tool Suite)

-.springBeans

-

-# Code Recommenders

-.recommenders/

-

-# Annotation Processing

-.apt_generated/

-.apt_generated_test/

-

-# Scala IDE specific (Scala & Java development for Eclipse)

-.cache-main

-.scala_dependencies

-.worksheet

-

-# Uncomment this line if you wish to ignore the project description file.

-# Typically, this file would be tracked if it contains build/dependency configurations:

-#.project

-

-### Eclipse Patch ###

-# Spring Boot Tooling

-.sts4-cache/

-

-### JupyterNotebooks ###

-# gitignore template for Jupyter Notebooks

-# website: http://jupyter.org/

-

-.ipynb_checkpoints

-*/.ipynb_checkpoints/*

-

-# IPython

-profile_default/

-ipython_config.py

-

-# Remove previous ipynb_checkpoints

-# git rm -r .ipynb_checkpoints/

-

-### Linux ###

-*~

-

-# temporary files which can be created if a process still has a handle open of a deleted file

-.fuse_hidden*

-

-# KDE directory preferences

-.directory

-

-# Linux trash folder which might appear on any partition or disk

-.Trash-*

-

-# .nfs files are created when an open file is removed but is still being accessed

-.nfs*

-

-### macOS ###

-# General

-.DS_Store

-.AppleDouble

-.LSOverride

-

-# Icon must end with two \r

-Icon

-

-

-# Thumbnails

-._*

-

-# Files that might appear in the root of a volume

-.DocumentRevisions-V100

-.fseventsd

-.Spotlight-V100

-.TemporaryItems

-.Trashes

-.VolumeIcon.icns

-.com.apple.timemachine.donotpresent

-

-# Directories potentially created on remote AFP share

-.AppleDB

-.AppleDesktop

-Network Trash Folder

-Temporary Items

-.apdisk

-

-### macOS Patch ###

-# iCloud generated files

-*.icloud

-

-### NetBeans ###

-**/nbproject/private/

-**/nbproject/Makefile-*.mk

-**/nbproject/Package-*.bash

-build/

-nbbuild/

-dist/

-nbdist/

-.nb-gradle/

-

-### PyCharm ###

-# Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio, WebStorm and Rider

-# Reference: https://intellij-support.jetbrains.com/hc/en-us/articles/206544839

-

-# User-specific stuff

-.idea/**/workspace.xml

-.idea/**/tasks.xml

-.idea/**/usage.statistics.xml

-.idea/**/dictionaries

-.idea/**/shelf

-

-# AWS User-specific

-.idea/**/aws.xml

-

-# Generated files

-.idea/**/contentModel.xml

-

-# Sensitive or high-churn files

-.idea/**/dataSources/

-.idea/**/dataSources.ids

-.idea/**/dataSources.local.xml

-.idea/**/sqlDataSources.xml

-.idea/**/dynamic.xml

-.idea/**/uiDesigner.xml

-.idea/**/dbnavigator.xml

-

-# Gradle

-.idea/**/gradle.xml

-.idea/**/libraries

-

-# Gradle and Maven with auto-import

-# When using Gradle or Maven with auto-import, you should exclude module files,

-# since they will be recreated, and may cause churn. Uncomment if using

-# auto-import.

-# .idea/artifacts

-# .idea/compiler.xml

-# .idea/jarRepositories.xml

-# .idea/modules.xml

-# .idea/*.iml

-# .idea/modules

-# *.iml

-# *.ipr

-

-# CMake

-cmake-build-*/

-

-# Mongo Explorer plugin

-.idea/**/mongoSettings.xml

-

-# File-based project format

-*.iws

-

-# IntelliJ

-out/

-

-# mpeltonen/sbt-idea plugin

-.idea_modules/

-

-# JIRA plugin

-atlassian-ide-plugin.xml

-

-# Cursive Clojure plugin

-.idea/replstate.xml

-

-# SonarLint plugin

-.idea/sonarlint/

-

-# Crashlytics plugin (for Android Studio and IntelliJ)

-com_crashlytics_export_strings.xml

-crashlytics.properties

-crashlytics-build.properties

-fabric.properties

-

-# Editor-based Rest Client

-.idea/httpRequests

-

-# Android studio 3.1+ serialized cache file

-.idea/caches/build_file_checksums.ser

-

-### PyCharm Patch ###

-# Comment Reason: https://github.com/joeblau/gitignore.io/issues/186#issuecomment-215987721

-

-# *.iml

-# modules.xml

-# .idea/misc.xml

-# *.ipr

-

-# Sonarlint plugin

-# https://plugins.jetbrains.com/plugin/7973-sonarlint

-.idea/**/sonarlint/

-

-# SonarQube Plugin

-# https://plugins.jetbrains.com/plugin/7238-sonarqube-community-plugin

-.idea/**/sonarIssues.xml

-

-# Markdown Navigator plugin

-# https://plugins.jetbrains.com/plugin/7896-markdown-navigator-enhanced

-.idea/**/markdown-navigator.xml

-.idea/**/markdown-navigator-enh.xml

-.idea/**/markdown-navigator/

-

-# Cache file creation bug

-# See https://youtrack.jetbrains.com/issue/JBR-2257

-.idea/$CACHE_FILE$

-

-# CodeStream plugin

-# https://plugins.jetbrains.com/plugin/12206-codestream

-.idea/codestream.xml

-

-# Azure Toolkit for IntelliJ plugin

-# https://plugins.jetbrains.com/plugin/8053-azure-toolkit-for-intellij

-.idea/**/azureSettings.xml

-

-### PyCharm+all ###

-# Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio, WebStorm and Rider

-# Reference: https://intellij-support.jetbrains.com/hc/en-us/articles/206544839

-

-# User-specific stuff

-

-# AWS User-specific

-

-# Generated files

-

-# Sensitive or high-churn files

-

-# Gradle

-

-# Gradle and Maven with auto-import

-# When using Gradle or Maven with auto-import, you should exclude module files,

-# since they will be recreated, and may cause churn. Uncomment if using

-# auto-import.

-# .idea/artifacts

-# .idea/compiler.xml

-# .idea/jarRepositories.xml

-# .idea/modules.xml

-# .idea/*.iml

-# .idea/modules

-# *.iml

-# *.ipr

-

-# CMake

-

-# Mongo Explorer plugin

-

-# File-based project format

-

-# IntelliJ

-

-# mpeltonen/sbt-idea plugin

-

-# JIRA plugin

-

-# Cursive Clojure plugin

-

-# SonarLint plugin

-

-# Crashlytics plugin (for Android Studio and IntelliJ)

-

-# Editor-based Rest Client

-

-# Android studio 3.1+ serialized cache file

-

-### PyCharm+all Patch ###

-# Ignore everything but code style settings and run configurations

-# that are supposed to be shared within teams.

-

-.idea/*

-

-!.idea/codeStyles

-!.idea/runConfigurations

-

-### PyCharm+iml ###

-# Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio, WebStorm and Rider

-# Reference: https://intellij-support.jetbrains.com/hc/en-us/articles/206544839

-

-# User-specific stuff

-

-# AWS User-specific

-

-# Generated files

-

-# Sensitive or high-churn files

-

-# Gradle

-

-# Gradle and Maven with auto-import

-# When using Gradle or Maven with auto-import, you should exclude module files,

-# since they will be recreated, and may cause churn. Uncomment if using

-# auto-import.

-# .idea/artifacts

-# .idea/compiler.xml

-# .idea/jarRepositories.xml

-# .idea/modules.xml

-# .idea/*.iml

-# .idea/modules

-# *.iml

-# *.ipr

-

-# CMake

-

-# Mongo Explorer plugin

-

-# File-based project format

-

-# IntelliJ

-

-# mpeltonen/sbt-idea plugin

-

-# JIRA plugin

-

-# Cursive Clojure plugin

-

-# SonarLint plugin

-

-# Crashlytics plugin (for Android Studio and IntelliJ)

-

-# Editor-based Rest Client

-

-# Android studio 3.1+ serialized cache file

-

-### PyCharm+iml Patch ###

-# Reason: https://github.com/joeblau/gitignore.io/issues/186#issuecomment-249601023

-

-*.iml

-modules.xml

-.idea/misc.xml

-*.ipr

-

-### pydev ###

-.pydevproject

-

-### Python ###

-# Byte-compiled / optimized / DLL files

-__pycache__/

-*.py[cod]

-*$py.class

-

-# C extensions

-*.so

-

-# Distribution / packaging

-.Python

-develop-eggs/

-downloads/

-eggs#memgpt/memgpt-server:0.3.7

-MANIFEST

-

-# PyInstaller

-# Usually these files are written by a python script from a template

-# before PyInstaller builds the exe, so as to inject date/other infos into it.

-*.manifest

-*.spec

-

-# Installer logs

-pip-log.txt

-pip-delete-this-directory.txt

-

-# Unit test / coverage reports

-htmlcov/

-.tox/

-.nox/

-.coverage

-.coverage.*

-.cache

-nosetests.xml

-coverage.xml

-*.cover

-*.py,cover

-.hypothesis/

-.pytest_cache/

-cover/

-

-# Translations

-*.mo

-*.pot

-

-# Django stuff:

-*.log

-local_settings.py

-db.sqlite3

-db.sqlite3-journal

-

-# Flask stuff:

-instance/

-.webassets-cache

-

-# Scrapy stuff:

-.scrapy

-

-# Sphinx documentation

-docs/_build/

-

-# PyBuilder

-.pybuilder/

-target/

-

-# Jupyter Notebook

-

-# IPython

-

-# pyenv

-# For a library or package, you might want to ignore these files since the code is

-# intended to run in multiple environments; otherwise, check them in:

-# .python-version

-

-# pipenv

-# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

-# However, in case of collaboration, if having platform-specific dependencies or dependencies

-# having no cross-platform support, pipenv may install dependencies that don't work, or not

-# install all needed dependencies.

-#Pipfile.lock

-

-# poetry

-# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.

-# This is especially recommended for binary packages to ensure reproducibility, and is more

-# commonly ignored for libraries.

-# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control

-#poetry.lock

-

-# pdm

-# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

-#pdm.lock

-# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it

-# in version control.

-# https://pdm.fming.dev/#use-with-ide

-.pdm.toml

-

-# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

-__pypackages__/

-

-# Celery stuff

-celerybeat-schedule

-celerybeat.pid

-

-# SageMath parsed files

-*.sage.py

-

-# Environments

-.env

-.venv

-env/

-venv/

-ENV/

-env.bak/

-venv.bak/

-

-# Spyder project settings

-.spyderproject

-.spyproject

-

-# Rope project settings

-.ropeproject

-

-# mkdocs documentation

-/site

-

-# mypy

-.mypy_cache/

-.dmypy.json

-dmypy.json

-

-# Pyre type checker

-.pyre/

-

-# pytype static type analyzer

-.pytype/

-

-# Cython debug symbols

-cython_debug/

-

-# PyCharm

-# JetBrains specific template is maintained in a separate JetBrains.gitignore that can

-# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore

-# and can be added to the global gitignore or merged into this file. For a more nuclear

-# option (not recommended) you can uncomment the following to ignore the entire idea folder.

-#.idea/

-

-### Python Patch ###

-# Poetry local configuration file - https://python-poetry.org/docs/configuration/#local-configuration

-poetry.toml

-

-# ruff

-.ruff_cache/

-

-# LSP config files

-pyrightconfig.json

-

-### Vim ###

-# Swap

-[._]*.s[a-v][a-z]

-!*.svg # comment out if you don't need vector files

-[._]*.sw[a-p]

-[._]s[a-rt-v][a-z]

-[._]ss[a-gi-z]

-[._]sw[a-p]

-

-# Session

-Session.vim

-Sessionx.vim

-

-# Temporary

-.netrwhist

-# Auto-generated tag files

-tags

-# Persistent undo

-[._]*.un~

-

-### VisualStudioCode ###

-.vscode/*

-!.vscode/settings.json

-!.vscode/tasks.json

-!.vscode/launch.json

-!.vscode/extensions.json

-!.vscode/*.code-snippets

-

-# Local History for Visual Studio Code

-.history/

-

-# Built Visual Studio Code Extensions

-*.vsix

-

-### VisualStudioCode Patch ###

-# Ignore all local history of files

-.history

-.ionide

-

-### Windows ###

-# Windows thumbnail cache files

-Thumbs.db

-Thumbs.db:encryptable

-ehthumbs.db

-ehthumbs_vista.db

-

-# Dump file

-*.stackdump

-

-# Folder config file

-[Dd]esktop.ini

-

-# Recycle Bin used on file shares

-$RECYCLE.BIN/

-

-# Windows Installer files

-*.cab

-*.msi

-*.msix

-*.msm

-*.msp

-

-# Windows shortcuts

-*.lnk

-

-### Xcode ###

-## User settings

-xcuserdata/

-

-## Xcode 8 and earlier

-*.xcscmblueprint

-*.xccheckout

-

-### Xcode Patch ###

-*.xcodeproj/*

-!*.xcodeproj/project.pbxproj

-!*.xcodeproj/xcshareddata/

-!*.xcodeproj/project.xcworkspace/

-!*.xcworkspace/contents.xcworkspacedata

-/*.gcno

-**/xcshareddata/WorkspaceSettings.xcsettings

-

-### XcodeInjection ###

-# Code Injection

-#

-# After new code Injection tools there's a generated folder /iOSInjectionProject

-# https://github.com/johnno1962/injectionforxcode

-

-iOSInjectionProject/

-

-### VisualStudio ###

-## Ignore Visual Studio temporary files, build results, and

-## files generated by popular Visual Studio add-ons.

-##

-## Get latest from https://github.com/github/gitignore/blob/main/VisualStudio.gitignore

-

-# User-specific files

-*.rsuser

-*.suo

-*.user

-*.userosscache

-*.sln.docstates

-

-# User-specific files (MonoDevelop/Xamarin Studio)

-*.userprefs

-

-# Mono auto generated files

-mono_crash.*

-

-# Build results

-[Dd]ebug/

-[Dd]ebugPublic/

-[Rr]elease/

-[Rr]eleases/

-x64/

-x86/

-[Ww][Ii][Nn]32/

-[Aa][Rr][Mm]/

-[Aa][Rr][Mm]64/

-bld/

-[Bb]in/

-[Oo]bj/

-[Ll]og/

-[Ll]ogs/

-

-# Visual Studio 2015/2017 cache/options directory

-.vs/

-# Uncomment if you have tasks that create the project's static files in wwwroot

-#wwwroot/

-

-# Visual Studio 2017 auto generated files

-Generated\ Files/

-

-# MSTest test Results

-[Tt]est[Rr]esult*/

-[Bb]uild[Ll]og.*

-

-# NUnit

-*.VisualState.xml

-TestResult.xml

-nunit-*.xml

-

-# Build Results of an ATL Project

-[Dd]ebugPS/

-[Rr]eleasePS/

-dlldata.c

-

-# Benchmark Results

-BenchmarkDotNet.Artifacts/

-

-# .NET Core

-project.lock.json

-project.fragment.lock.json

-artifacts/

-

-# ASP.NET Scaffolding

-ScaffoldingReadMe.txt

-

-# StyleCop

-StyleCopReport.xml

-

-# Files built by Visual Studio

-*_i.c

-*_p.c

-*_h.h

-*.ilk

-*.meta

-*.obj

-*.iobj

-*.pch

-*.pdb

-*.ipdb

-*.pgc

-*.pgd

-*.rsp

-*.sbr

-*.tlb

-*.tli

-*.tlh

-*.tmp_proj

-*_wpftmp.csproj

-*.tlog

-*.vspscc

-*.vssscc

-.builds

-*.pidb

-*.svclog

-*.scc

-

-# Chutzpah Test files

-_Chutzpah*

-

-# Visual C++ cache files

-ipch/

-*.aps

-*.ncb

-*.opendb

-*.opensdf

-*.sdf

-*.cachefile

-*.VC.db

-*.VC.VC.opendb

-

-# Visual Studio profiler

-*.psess

-*.vsp

-*.vspx

-*.sap

-

-# Visual Studio Trace Files

-*.e2e

-

-# TFS 2012 Local Workspace

-$tf/

-

-# Guidance Automation Toolkit

-*.gpState

-

-# ReSharper is a .NET coding add-in

-_ReSharper*/

-*.[Rr]e[Ss]harper

-*.DotSettings.user

-

-# TeamCity is a build add-in

-_TeamCity*

-

-# DotCover is a Code Coverage Tool

-*.dotCover

-

-# AxoCover is a Code Coverage Tool

-.axoCover/*

-!.axoCover/settings.json

-

-# Coverlet is a free, cross platform Code Coverage Tool

-coverage*.json

-coverage*.xml

-coverage*.info

-

-# Visual Studio code coverage results

-*.coverage

-*.coveragexml

-

-# NCrunch

-_NCrunch_*

-.*crunch*.local.xml

-nCrunchTemp_*

-

-# MightyMoose

-*.mm.*

-AutoTest.Net/

-

-# Web workbench (sass)

-.sass-cache/

-

-# Installshield output folder

-[Ee]xpress/

-

-# DocProject is a documentation generator add-in

-DocProject/buildhelp/

-DocProject/Help/*.HxT

-DocProject/Help/*.HxC

-DocProject/Help/*.hhc

-DocProject/Help/*.hhk

-DocProject/Help/*.hhp

-DocProject/Help/Html2

-DocProject/Help/html

-

-# Click-Once directory

-publish/

-

-# Publish Web Output

-*.[Pp]ublish.xml

-*.azurePubxml

-# Note: Comment the next line if you want to checkin your web deploy settings,

-# but database connection strings (with potential passwords) will be unencrypted

-*.pubxml

-*.publishproj

-

-# Microsoft Azure Web App publish settings. Comment the next line if you want to

-# checkin your Azure Web App publish settings, but sensitive information contained

-# in these scripts will be unencrypted

-PublishScripts/

-

-# NuGet Packages

-*.nupkg

-# NuGet Symbol Packages

-*.snupkg

-# The packages folder can be ignored because of Package Restore

-**/[Pp]ackages/*

-# except build/, which is used as an MSBuild target.

-!**/[Pp]ackages/build/

-# Uncomment if necessary however generally it will be regenerated when needed

-#!**/[Pp]ackages/repositories.config

-# NuGet v3's project.json files produces more ignorable files

-*.nuget.props

-*.nuget.targets

-

-# Microsoft Azure Build Output

-csx/

-*.build.csdef

-

-# Microsoft Azure Emulator

-ecf/

-rcf/

-

-# Windows Store app package directories and files

-AppPackages/

-BundleArtifacts/

-Package.StoreAssociation.xml

-_pkginfo.txt

-*.appx

-*.appxbundle

-*.appxupload

-

-# Visual Studio cache files

-# files ending in .cache can be ignored

-*.[Cc]ache

-# but keep track of directories ending in .cache

-!?*.[Cc]ache/

-

-# Others

-ClientBin/

-~$*

-*.dbmdl

-*.dbproj.schemaview

-*.jfm

-*.pfx

-*.publishsettings

-orleans.codegen.cs

-

-# Including strong name files can present a security risk

-# (https://github.com/github/gitignore/pull/2483#issue-259490424)

-#*.snk

-

-# Since there are multiple workflows, uncomment next line to ignore bower_components

-# (https://github.com/github/gitignore/pull/1529#issuecomment-104372622)

-#bower_components/

-

-# RIA/Silverlight projects

-Generated_Code/

-

-# Backup & report files from converting an old project file

-# to a newer Visual Studio version. Backup files are not needed,

-# because we have git ;-)

-_UpgradeReport_Files/

-Backup*/

-UpgradeLog*.XML

-UpgradeLog*.htm

-ServiceFabricBackup/

-*.rptproj.bak

-

-# SQL Server files

-*.mdf

-*.ldf

-*.ndf

-

-# Business Intelligence projects

-*.rdl.data

-*.bim.layout

-*.bim_*.settings

-*.rptproj.rsuser

-*- [Bb]ackup.rdl

-*- [Bb]ackup ([0-9]).rdl

-*- [Bb]ackup ([0-9][0-9]).rdl

-

-# Microsoft Fakes

-FakesAssemblies/

-

-# GhostDoc plugin setting file

-*.GhostDoc.xml

-

-# Node.js Tools for Visual Studio

-.ntvs_analysis.dat

-node_modules/

-

-# Visual Studio 6 build log

-*.plg

-

-# Visual Studio 6 workspace options file

-*.opt

-

-# Visual Studio 6 auto-generated workspace file (contains which files were open etc.)

-*.vbw

-

-# Visual Studio 6 auto-generated project file (contains which files were open etc.)

-*.vbp

-

-# Visual Studio 6 workspace and project file (working project files containing files to include in project)

-*.dsw

-*.dsp

-

-# Visual Studio 6 technical files

-

-# Visual Studio LightSwitch build output

-**/*.HTMLClient/GeneratedArtifacts

-**/*.DesktopClient/GeneratedArtifacts

-**/*.DesktopClient/ModelManifest.xml

-**/*.Server/GeneratedArtifacts

-**/*.Server/ModelManifest.xml

-_Pvt_Extensions

-

-# Paket dependency manager

-.paket/paket.exe

-paket-files/

-

-# FAKE - F# Make

-.fake/

-

-# CodeRush personal settings

-.cr/personal

-

-# Python Tools for Visual Studio (PTVS)

-*.pyc

-

-# Cake - Uncomment if you are using it

-# tools/**

-# !tools/packages.config

-

-# Tabs Studio

-*.tss

-

-# Telerik's JustMock configuration file

-*.jmconfig

-

-# BizTalk build output

-*.btp.cs

-*.btm.cs

-*.odx.cs

-*.xsd.cs

-

-# OpenCover UI analysis results

-OpenCover/

-

-# Azure Stream Analytics local run output

-ASALocalRun/

-

-# MSBuild Binary and Structured Log

-*.binlog

-

-# NVidia Nsight GPU debugger configuration file

-*.nvuser

-

-# MFractors (Xamarin productivity tool) working folder

-.mfractor/

-

-# Local History for Visual Studio

-.localhistory/

-

-# Visual Studio History (VSHistory) files

-.vshistory/

-

-# BeatPulse healthcheck temp database

-healthchecksdb

-

-# Backup folder for Package Reference Convert tool in Visual Studio 2017

-MigrationBackup/

-

-# Ionide (cross platform F# VS Code tools) working folder

-.ionide/

-

-# Fody - auto-generated XML schema

-FodyWeavers.xsd

-

-# VS Code files for those working on multiple tools

-*.code-workspace

-

-# Local History for Visual Studio Code

-

-# Windows Installer files from build outputs

-

-# JetBrains Rider

-*.sln.iml

-

-### VisualStudio Patch ###

-# Additional files built by Visual Studio

-

-# End of https://www.toptal.com/developers/gitignore/api/vim,linux,macos,pydev,python,eclipse,pycharm,windows,netbeans,pycharm+all,pycharm+iml,visualstudio,jupyternotebooks,visualstudiocode,xcode,xcodeinjection

-

-

-## cached db data

-pgdata/

-!pgdata/.gitkeep

-

-## pytest mirrors

-memgpt/.pytest_cache/

-memgpy/pytest.ini

-**/**/pytest_cache

+# MemGPT config files

+configs/

+

+# Below are generated by gitignor.io (toptal)

+# Created by https://www.toptal.com/developers/gitignore/api/vim,linux,macos,pydev,python,eclipse,pycharm,windows,netbeans,pycharm+all,pycharm+iml,visualstudio,jupyternotebooks,visualstudiocode,xcode,xcodeinjection

+# Edit at https://www.toptal.com/developers/gitignore?templates=vim,linux,macos,pydev,python,eclipse,pycharm,windows,netbeans,pycharm+all,pycharm+iml,visualstudio,jupyternotebooks,visualstudiocode,xcode,xcodeinjection

+

+### Eclipse ###

+.metadata

+bin/

+tmp/

+*.tmp

+*.bak

+*.swp

+*~.nib

+local.properties

+.settings/

+.loadpath

+.recommenders

+

+# External tool builders

+.externalToolBuilders/

+

+# Locally stored "Eclipse launch configurations"

+*.launch

+

+# PyDev specific (Python IDE for Eclipse)

+*.pydevproject

+

+# CDT-specific (C/C++ Development Tooling)

+.cproject

+

+# CDT- autotools

+.autotools

+

+# Java annotation processor (APT)

+.factorypath

+

+# PDT-specific (PHP Development Tools)

+.buildpath

+

+# sbteclipse plugin

+.target

+

+# Tern plugin

+.tern-project

+

+# TeXlipse plugin

+.texlipse

+

+# STS (Spring Tool Suite)

+.springBeans

+

+# Code Recommenders

+.recommenders/

+

+# Annotation Processing

+.apt_generated/

+.apt_generated_test/

+

+# Scala IDE specific (Scala & Java development for Eclipse)

+.cache-main

+.scala_dependencies

+.worksheet

+

+# Uncomment this line if you wish to ignore the project description file.

+# Typically, this file would be tracked if it contains build/dependency configurations:

+#.project

+

+### Eclipse Patch ###

+# Spring Boot Tooling

+.sts4-cache/

+

+### JupyterNotebooks ###

+# gitignore template for Jupyter Notebooks

+# website: http://jupyter.org/

+

+.ipynb_checkpoints

+*/.ipynb_checkpoints/*

+

+# IPython

+profile_default/

+ipython_config.py

+

+# Remove previous ipynb_checkpoints

+# git rm -r .ipynb_checkpoints/

+

+### Linux ###

+*~

+

+# temporary files which can be created if a process still has a handle open of a deleted file

+.fuse_hidden*

+

+# KDE directory preferences

+.directory

+

+# Linux trash folder which might appear on any partition or disk

+.Trash-*

+

+# .nfs files are created when an open file is removed but is still being accessed

+.nfs*

+

+### macOS ###

+# General

+.DS_Store

+.AppleDouble

+.LSOverride

+

+# Icon must end with two \r

+Icon

+

+

+# Thumbnails

+._*

+

+# Files that might appear in the root of a volume

+.DocumentRevisions-V100

+.fseventsd

+.Spotlight-V100

+.TemporaryItems

+.Trashes

+.VolumeIcon.icns

+.com.apple.timemachine.donotpresent

+

+# Directories potentially created on remote AFP share

+.AppleDB

+.AppleDesktop

+Network Trash Folder

+Temporary Items

+.apdisk

+

+### macOS Patch ###

+# iCloud generated files

+*.icloud

+

+### NetBeans ###

+**/nbproject/private/

+**/nbproject/Makefile-*.mk

+**/nbproject/Package-*.bash

+build/

+nbbuild/

+dist/

+nbdist/

+.nb-gradle/

+

+### PyCharm ###

+# Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio, WebStorm and Rider

+# Reference: https://intellij-support.jetbrains.com/hc/en-us/articles/206544839

+

+# User-specific stuff

+.idea/**/workspace.xml

+.idea/**/tasks.xml

+.idea/**/usage.statistics.xml

+.idea/**/dictionaries

+.idea/**/shelf

+

+# AWS User-specific

+.idea/**/aws.xml

+

+# Generated files

+.idea/**/contentModel.xml

+

+# Sensitive or high-churn files

+.idea/**/dataSources/

+.idea/**/dataSources.ids

+.idea/**/dataSources.local.xml

+.idea/**/sqlDataSources.xml

+.idea/**/dynamic.xml

+.idea/**/uiDesigner.xml

+.idea/**/dbnavigator.xml

+

+# Gradle

+.idea/**/gradle.xml

+.idea/**/libraries

+

+# Gradle and Maven with auto-import

+# When using Gradle or Maven with auto-import, you should exclude module files,

+# since they will be recreated, and may cause churn. Uncomment if using

+# auto-import.

+# .idea/artifacts

+# .idea/compiler.xml

+# .idea/jarRepositories.xml

+# .idea/modules.xml

+# .idea/*.iml

+# .idea/modules

+# *.iml

+# *.ipr

+

+# CMake

+cmake-build-*/

+

+# Mongo Explorer plugin

+.idea/**/mongoSettings.xml

+

+# File-based project format

+*.iws

+

+# IntelliJ

+out/

+

+# mpeltonen/sbt-idea plugin

+.idea_modules/

+

+# JIRA plugin

+atlassian-ide-plugin.xml

+

+# Cursive Clojure plugin

+.idea/replstate.xml

+

+# SonarLint plugin

+.idea/sonarlint/

+

+# Crashlytics plugin (for Android Studio and IntelliJ)

+com_crashlytics_export_strings.xml

+crashlytics.properties

+crashlytics-build.properties

+fabric.properties

+

+# Editor-based Rest Client

+.idea/httpRequests

+

+# Android studio 3.1+ serialized cache file

+.idea/caches/build_file_checksums.ser

+

+### PyCharm Patch ###

+# Comment Reason: https://github.com/joeblau/gitignore.io/issues/186#issuecomment-215987721

+

+# *.iml

+# modules.xml

+# .idea/misc.xml

+# *.ipr

+

+# Sonarlint plugin

+# https://plugins.jetbrains.com/plugin/7973-sonarlint

+.idea/**/sonarlint/

+

+# SonarQube Plugin

+# https://plugins.jetbrains.com/plugin/7238-sonarqube-community-plugin

+.idea/**/sonarIssues.xml

+

+# Markdown Navigator plugin

+# https://plugins.jetbrains.com/plugin/7896-markdown-navigator-enhanced

+.idea/**/markdown-navigator.xml

+.idea/**/markdown-navigator-enh.xml

+.idea/**/markdown-navigator/

+

+# Cache file creation bug

+# See https://youtrack.jetbrains.com/issue/JBR-2257

+.idea/$CACHE_FILE$

+

+# CodeStream plugin

+# https://plugins.jetbrains.com/plugin/12206-codestream

+.idea/codestream.xml

+

+# Azure Toolkit for IntelliJ plugin

+# https://plugins.jetbrains.com/plugin/8053-azure-toolkit-for-intellij

+.idea/**/azureSettings.xml

+

+### PyCharm+all ###

+# Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio, WebStorm and Rider

+# Reference: https://intellij-support.jetbrains.com/hc/en-us/articles/206544839

+

+# User-specific stuff

+

+# AWS User-specific

+

+# Generated files

+

+# Sensitive or high-churn files

+

+# Gradle

+

+# Gradle and Maven with auto-import

+# When using Gradle or Maven with auto-import, you should exclude module files,

+# since they will be recreated, and may cause churn. Uncomment if using

+# auto-import.

+# .idea/artifacts

+# .idea/compiler.xml

+# .idea/jarRepositories.xml

+# .idea/modules.xml

+# .idea/*.iml

+# .idea/modules

+# *.iml

+# *.ipr

+

+# CMake

+

+# Mongo Explorer plugin

+

+# File-based project format

+

+# IntelliJ

+

+# mpeltonen/sbt-idea plugin

+

+# JIRA plugin

+

+# Cursive Clojure plugin

+

+# SonarLint plugin

+

+# Crashlytics plugin (for Android Studio and IntelliJ)

+

+# Editor-based Rest Client

+

+# Android studio 3.1+ serialized cache file

+

+### PyCharm+all Patch ###

+# Ignore everything but code style settings and run configurations

+# that are supposed to be shared within teams.

+

+.idea/*

+

+!.idea/codeStyles

+!.idea/runConfigurations

+

+### PyCharm+iml ###

+# Covers JetBrains IDEs: IntelliJ, RubyMine, PhpStorm, AppCode, PyCharm, CLion, Android Studio, WebStorm and Rider

+# Reference: https://intellij-support.jetbrains.com/hc/en-us/articles/206544839

+

+# User-specific stuff

+

+# AWS User-specific

+

+# Generated files

+

+# Sensitive or high-churn files

+

+# Gradle

+

+# Gradle and Maven with auto-import

+# When using Gradle or Maven with auto-import, you should exclude module files,

+# since they will be recreated, and may cause churn. Uncomment if using

+# auto-import.

+# .idea/artifacts

+# .idea/compiler.xml

+# .idea/jarRepositories.xml

+# .idea/modules.xml

+# .idea/*.iml

+# .idea/modules

+# *.iml

+# *.ipr

+

+# CMake

+

+# Mongo Explorer plugin

+

+# File-based project format

+

+# IntelliJ

+

+# mpeltonen/sbt-idea plugin

+

+# JIRA plugin

+

+# Cursive Clojure plugin

+

+# SonarLint plugin

+

+# Crashlytics plugin (for Android Studio and IntelliJ)

+

+# Editor-based Rest Client

+

+# Android studio 3.1+ serialized cache file

+

+### PyCharm+iml Patch ###

+# Reason: https://github.com/joeblau/gitignore.io/issues/186#issuecomment-249601023

+

+*.iml

+modules.xml

+.idea/misc.xml

+*.ipr

+

+### pydev ###

+.pydevproject

+

+### Python ###

+# Byte-compiled / optimized / DLL files

+__pycache__/

+*.py[cod]

+*$py.class

+

+# C extensions

+*.so

+

+# Distribution / packaging

+.Python

+develop-eggs/

+downloads/

+eggs#memgpt/memgpt-server:0.3.7

+MANIFEST

+

+# PyInstaller

+# Usually these files are written by a python script from a template

+# before PyInstaller builds the exe, so as to inject date/other infos into it.

+*.manifest

+*.spec

+

+# Installer logs

+pip-log.txt

+pip-delete-this-directory.txt

+

+# Unit test / coverage reports

+htmlcov/

+.tox/

+.nox/

+.coverage

+.coverage.*

+.cache

+nosetests.xml

+coverage.xml

+*.cover

+*.py,cover

+.hypothesis/

+.pytest_cache/

+cover/

+

+# Translations

+*.mo

+*.pot

+

+# Django stuff:

+*.log

+local_settings.py

+db.sqlite3

+db.sqlite3-journal

+

+# Flask stuff:

+instance/

+.webassets-cache

+

+# Scrapy stuff:

+.scrapy

+

+# Sphinx documentation

+docs/_build/

+

+# PyBuilder

+.pybuilder/

+target/

+

+# Jupyter Notebook

+

+# IPython

+

+# pyenv

+# For a library or package, you might want to ignore these files since the code is

+# intended to run in multiple environments; otherwise, check them in:

+# .python-version

+

+# pipenv

+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

+# However, in case of collaboration, if having platform-specific dependencies or dependencies

+# having no cross-platform support, pipenv may install dependencies that don't work, or not

+# install all needed dependencies.

+#Pipfile.lock

+

+# poetry

+# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.

+# This is especially recommended for binary packages to ensure reproducibility, and is more

+# commonly ignored for libraries.

+# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control

+#poetry.lock

+

+# pdm

+# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

+#pdm.lock

+# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it

+# in version control.

+# https://pdm.fming.dev/#use-with-ide

+.pdm.toml

+

+# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

+__pypackages__/

+

+# Celery stuff

+celerybeat-schedule

+celerybeat.pid

+

+# SageMath parsed files

+*.sage.py

+

+# Environments

+.env

+.venv

+env/

+venv/

+ENV/

+env.bak/

+venv.bak/

+

+# Spyder project settings

+.spyderproject

+.spyproject

+

+# Rope project settings

+.ropeproject

+

+# mkdocs documentation

+/site

+

+# mypy

+.mypy_cache/

+.dmypy.json

+dmypy.json

+

+# Pyre type checker

+.pyre/

+

+# pytype static type analyzer

+.pytype/

+

+# Cython debug symbols

+cython_debug/

+

+# PyCharm

+# JetBrains specific template is maintained in a separate JetBrains.gitignore that can

+# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore

+# and can be added to the global gitignore or merged into this file. For a more nuclear

+# option (not recommended) you can uncomment the following to ignore the entire idea folder.

+#.idea/

+

+### Python Patch ###

+# Poetry local configuration file - https://python-poetry.org/docs/configuration/#local-configuration

+poetry.toml

+

+# ruff

+.ruff_cache/

+

+# LSP config files

+pyrightconfig.json

+

+### Vim ###

+# Swap

+[._]*.s[a-v][a-z]

+!*.svg # comment out if you don't need vector files

+[._]*.sw[a-p]

+[._]s[a-rt-v][a-z]

+[._]ss[a-gi-z]

+[._]sw[a-p]

+

+# Session

+Session.vim

+Sessionx.vim

+

+# Temporary

+.netrwhist

+# Auto-generated tag files

+tags

+# Persistent undo

+[._]*.un~

+

+### VisualStudioCode ###

+.vscode/*

+!.vscode/settings.json

+!.vscode/tasks.json

+!.vscode/launch.json

+!.vscode/extensions.json

+!.vscode/*.code-snippets

+

+# Local History for Visual Studio Code

+.history/

+

+# Built Visual Studio Code Extensions

+*.vsix

+

+### VisualStudioCode Patch ###

+# Ignore all local history of files

+.history

+.ionide

+

+### Windows ###

+# Windows thumbnail cache files

+Thumbs.db

+Thumbs.db:encryptable

+ehthumbs.db

+ehthumbs_vista.db

+

+# Dump file

+*.stackdump

+

+# Folder config file

+[Dd]esktop.ini

+

+# Recycle Bin used on file shares

+$RECYCLE.BIN/

+

+# Windows Installer files

+*.cab

+*.msi

+*.msix

+*.msm

+*.msp

+

+# Windows shortcuts

+*.lnk

+

+### Xcode ###

+## User settings

+xcuserdata/

+

+## Xcode 8 and earlier

+*.xcscmblueprint

+*.xccheckout

+

+### Xcode Patch ###

+*.xcodeproj/*

+!*.xcodeproj/project.pbxproj

+!*.xcodeproj/xcshareddata/

+!*.xcodeproj/project.xcworkspace/

+!*.xcworkspace/contents.xcworkspacedata

+/*.gcno

+**/xcshareddata/WorkspaceSettings.xcsettings

+

+### XcodeInjection ###

+# Code Injection

+#

+# After new code Injection tools there's a generated folder /iOSInjectionProject

+# https://github.com/johnno1962/injectionforxcode

+

+iOSInjectionProject/

+

+### VisualStudio ###

+## Ignore Visual Studio temporary files, build results, and

+## files generated by popular Visual Studio add-ons.

+##

+## Get latest from https://github.com/github/gitignore/blob/main/VisualStudio.gitignore

+

+# User-specific files

+*.rsuser

+*.suo

+*.user

+*.userosscache

+*.sln.docstates

+

+# User-specific files (MonoDevelop/Xamarin Studio)

+*.userprefs

+

+# Mono auto generated files

+mono_crash.*

+

+# Build results

+[Dd]ebug/

+[Dd]ebugPublic/

+[Rr]elease/

+[Rr]eleases/

+x64/

+x86/

+[Ww][Ii][Nn]32/

+[Aa][Rr][Mm]/

+[Aa][Rr][Mm]64/

+bld/

+[Bb]in/

+[Oo]bj/

+[Ll]og/

+[Ll]ogs/

+

+# Visual Studio 2015/2017 cache/options directory

+.vs/

+# Uncomment if you have tasks that create the project's static files in wwwroot

+#wwwroot/

+

+# Visual Studio 2017 auto generated files

+Generated\ Files/

+

+# MSTest test Results

+[Tt]est[Rr]esult*/

+[Bb]uild[Ll]og.*

+

+# NUnit

+*.VisualState.xml

+TestResult.xml

+nunit-*.xml

+

+# Build Results of an ATL Project

+[Dd]ebugPS/

+[Rr]eleasePS/

+dlldata.c

+

+# Benchmark Results

+BenchmarkDotNet.Artifacts/

+

+# .NET Core

+project.lock.json

+project.fragment.lock.json

+artifacts/

+

+# ASP.NET Scaffolding

+ScaffoldingReadMe.txt

+

+# StyleCop

+StyleCopReport.xml

+

+# Files built by Visual Studio

+*_i.c

+*_p.c

+*_h.h

+*.ilk

+*.meta

+*.obj

+*.iobj

+*.pch

+*.pdb

+*.ipdb

+*.pgc

+*.pgd

+*.rsp

+*.sbr

+*.tlb

+*.tli

+*.tlh

+*.tmp_proj

+*_wpftmp.csproj

+*.tlog

+*.vspscc

+*.vssscc

+.builds

+*.pidb

+*.svclog

+*.scc

+

+# Chutzpah Test files

+_Chutzpah*

+

+# Visual C++ cache files

+ipch/

+*.aps

+*.ncb

+*.opendb

+*.opensdf

+*.sdf

+*.cachefile

+*.VC.db

+*.VC.VC.opendb

+

+# Visual Studio profiler

+*.psess

+*.vsp

+*.vspx

+*.sap

+

+# Visual Studio Trace Files

+*.e2e

+

+# TFS 2012 Local Workspace

+$tf/

+

+# Guidance Automation Toolkit

+*.gpState

+

+# ReSharper is a .NET coding add-in

+_ReSharper*/

+*.[Rr]e[Ss]harper

+*.DotSettings.user

+

+# TeamCity is a build add-in

+_TeamCity*

+

+# DotCover is a Code Coverage Tool

+*.dotCover

+

+# AxoCover is a Code Coverage Tool

+.axoCover/*

+!.axoCover/settings.json

+

+# Coverlet is a free, cross platform Code Coverage Tool

+coverage*.json

+coverage*.xml

+coverage*.info

+

+# Visual Studio code coverage results

+*.coverage

+*.coveragexml

+

+# NCrunch

+_NCrunch_*

+.*crunch*.local.xml

+nCrunchTemp_*

+

+# MightyMoose

+*.mm.*

+AutoTest.Net/

+

+# Web workbench (sass)

+.sass-cache/

+

+# Installshield output folder

+[Ee]xpress/

+

+# DocProject is a documentation generator add-in

+DocProject/buildhelp/

+DocProject/Help/*.HxT

+DocProject/Help/*.HxC

+DocProject/Help/*.hhc

+DocProject/Help/*.hhk

+DocProject/Help/*.hhp

+DocProject/Help/Html2

+DocProject/Help/html

+

+# Click-Once directory

+publish/

+

+# Publish Web Output

+*.[Pp]ublish.xml

+*.azurePubxml

+# Note: Comment the next line if you want to checkin your web deploy settings,

+# but database connection strings (with potential passwords) will be unencrypted

+*.pubxml

+*.publishproj

+

+# Microsoft Azure Web App publish settings. Comment the next line if you want to

+# checkin your Azure Web App publish settings, but sensitive information contained

+# in these scripts will be unencrypted

+PublishScripts/

+

+# NuGet Packages

+*.nupkg

+# NuGet Symbol Packages

+*.snupkg

+# The packages folder can be ignored because of Package Restore

+**/[Pp]ackages/*

+# except build/, which is used as an MSBuild target.

+!**/[Pp]ackages/build/

+# Uncomment if necessary however generally it will be regenerated when needed

+#!**/[Pp]ackages/repositories.config

+# NuGet v3's project.json files produces more ignorable files

+*.nuget.props

+*.nuget.targets

+

+# Microsoft Azure Build Output

+csx/

+*.build.csdef

+

+# Microsoft Azure Emulator

+ecf/

+rcf/

+

+# Windows Store app package directories and files

+AppPackages/

+BundleArtifacts/

+Package.StoreAssociation.xml

+_pkginfo.txt

+*.appx

+*.appxbundle

+*.appxupload

+

+# Visual Studio cache files

+# files ending in .cache can be ignored

+*.[Cc]ache

+# but keep track of directories ending in .cache

+!?*.[Cc]ache/

+

+# Others

+ClientBin/

+~$*

+*.dbmdl

+*.dbproj.schemaview

+*.jfm

+*.pfx

+*.publishsettings

+orleans.codegen.cs

+

+# Including strong name files can present a security risk

+# (https://github.com/github/gitignore/pull/2483#issue-259490424)

+#*.snk

+

+# Since there are multiple workflows, uncomment next line to ignore bower_components

+# (https://github.com/github/gitignore/pull/1529#issuecomment-104372622)

+#bower_components/

+

+# RIA/Silverlight projects

+Generated_Code/

+

+# Backup & report files from converting an old project file

+# to a newer Visual Studio version. Backup files are not needed,

+# because we have git ;-)

+_UpgradeReport_Files/

+Backup*/

+UpgradeLog*.XML

+UpgradeLog*.htm

+ServiceFabricBackup/

+*.rptproj.bak

+

+# SQL Server files

+*.mdf

+*.ldf

+*.ndf

+

+# Business Intelligence projects

+*.rdl.data

+*.bim.layout

+*.bim_*.settings

+*.rptproj.rsuser

+*- [Bb]ackup.rdl

+*- [Bb]ackup ([0-9]).rdl

+*- [Bb]ackup ([0-9][0-9]).rdl

+

+# Microsoft Fakes

+FakesAssemblies/

+

+# GhostDoc plugin setting file

+*.GhostDoc.xml

+

+# Node.js Tools for Visual Studio

+.ntvs_analysis.dat

+node_modules/

+

+# Visual Studio 6 build log

+*.plg

+

+# Visual Studio 6 workspace options file

+*.opt

+

+# Visual Studio 6 auto-generated workspace file (contains which files were open etc.)

+*.vbw

+

+# Visual Studio 6 auto-generated project file (contains which files were open etc.)

+*.vbp

+

+# Visual Studio 6 workspace and project file (working project files containing files to include in project)

+*.dsw

+*.dsp

+

+# Visual Studio 6 technical files

+

+# Visual Studio LightSwitch build output

+**/*.HTMLClient/GeneratedArtifacts

+**/*.DesktopClient/GeneratedArtifacts

+**/*.DesktopClient/ModelManifest.xml

+**/*.Server/GeneratedArtifacts

+**/*.Server/ModelManifest.xml

+_Pvt_Extensions

+

+# Paket dependency manager

+.paket/paket.exe

+paket-files/

+

+# FAKE - F# Make

+.fake/

+

+# CodeRush personal settings

+.cr/personal

+

+# Python Tools for Visual Studio (PTVS)

+*.pyc

+

+# Cake - Uncomment if you are using it

+# tools/**

+# !tools/packages.config

+

+# Tabs Studio

+*.tss

+

+# Telerik's JustMock configuration file

+*.jmconfig

+

+# BizTalk build output

+*.btp.cs

+*.btm.cs

+*.odx.cs

+*.xsd.cs

+

+# OpenCover UI analysis results

+OpenCover/

+

+# Azure Stream Analytics local run output

+ASALocalRun/

+

+# MSBuild Binary and Structured Log

+*.binlog

+

+# NVidia Nsight GPU debugger configuration file

+*.nvuser

+

+# MFractors (Xamarin productivity tool) working folder

+.mfractor/

+

+# Local History for Visual Studio

+.localhistory/

+

+# Visual Studio History (VSHistory) files

+.vshistory/

+

+# BeatPulse healthcheck temp database

+healthchecksdb

+

+# Backup folder for Package Reference Convert tool in Visual Studio 2017

+MigrationBackup/

+

+# Ionide (cross platform F# VS Code tools) working folder

+.ionide/

+

+# Fody - auto-generated XML schema

+FodyWeavers.xsd

+

+# VS Code files for those working on multiple tools

+*.code-workspace

+

+# Local History for Visual Studio Code

+

+# Windows Installer files from build outputs

+

+# JetBrains Rider

+*.sln.iml

+

+### VisualStudio Patch ###

+# Additional files built by Visual Studio

+

+# End of https://www.toptal.com/developers/gitignore/api/vim,linux,macos,pydev,python,eclipse,pycharm,windows,netbeans,pycharm+all,pycharm+iml,visualstudio,jupyternotebooks,visualstudiocode,xcode,xcodeinjection

+

+

+## cached db data

+pgdata/

+!pgdata/.gitkeep

+

+## pytest mirrors

+memgpt/.pytest_cache/

+memgpt/pytest.ini

+**/**/pytest_cache

diff --git a/README.md b/README.md

index fad4cd46..3f519108 100644

--- a/README.md

+++ b/README.md

@@ -1,106 +1,106 @@

-

-  -

-

-

-

-

- MemGPT allows you to build LLM agents with long term memory & custom tools

-

-[](https://discord.gg/9GEQrxmVyE)

-[](https://twitter.com/MemGPT)

-[](https://arxiv.org/abs/2310.08560)

-[](https://memgpt.readme.io/docs)

-

-

-

-MemGPT makes it easy to build and deploy stateful LLM agents with support for:

-* Long term memory/state management

-* Connections to [external data sources](https://memgpt.readme.io/docs/data_sources) (e.g. PDF files) for RAG

-* Defining and calling [custom tools](https://memgpt.readme.io/docs/functions) (e.g. [google search](https://github.com/cpacker/MemGPT/blob/main/examples/google_search.py))

-

-You can also use MemGPT to deploy agents as a *service*. You can use a MemGPT server to run a multi-user, multi-agent application on top of supported LLM providers.

-

- -

-

-## Installation & Setup

-Install MemGPT:

-```sh

-pip install -U pymemgpt

-```

-

-To use MemGPT with OpenAI, set the environment variable `OPENAI_API_KEY` to your OpenAI key then run:

-```

-memgpt quickstart --backend openai

-```

-To use MemGPT with a free hosted endpoint, you run run:

-```

-memgpt quickstart --backend memgpt

-```

-For more advanced configuration options or to use a different [LLM backend](https://memgpt.readme.io/docs/endpoints) or [local LLMs](https://memgpt.readme.io/docs/local_llm), run `memgpt configure`.

-

-## Quickstart (CLI)

-You can create and chat with a MemGPT agent by running `memgpt run` in your CLI. The `run` command supports the following optional flags (see the [CLI documentation](https://memgpt.readme.io/docs/quickstart) for the full list of flags):

-* `--agent`: (str) Name of agent to create or to resume chatting with.

-* `--first`: (str) Allow user to sent the first message.

-* `--debug`: (bool) Show debug logs (default=False)

-* `--no-verify`: (bool) Bypass message verification (default=False)

-* `--yes`/`-y`: (bool) Skip confirmation prompt and use defaults (default=False)

-

-You can view the list of available in-chat commands (e.g. `/memory`, `/exit`) in the [CLI documentation](https://memgpt.readme.io/docs/quickstart).

-

-## Dev portal (alpha build)

-MemGPT provides a developer portal that enables you to easily create, edit, monitor, and chat with your MemGPT agents. The easiest way to use the dev portal is to install MemGPT via **docker** (see instructions below).

-

-

-

-

-## Installation & Setup

-Install MemGPT:

-```sh

-pip install -U pymemgpt

-```

-

-To use MemGPT with OpenAI, set the environment variable `OPENAI_API_KEY` to your OpenAI key then run:

-```

-memgpt quickstart --backend openai

-```

-To use MemGPT with a free hosted endpoint, you run run:

-```

-memgpt quickstart --backend memgpt

-```

-For more advanced configuration options or to use a different [LLM backend](https://memgpt.readme.io/docs/endpoints) or [local LLMs](https://memgpt.readme.io/docs/local_llm), run `memgpt configure`.

-

-## Quickstart (CLI)

-You can create and chat with a MemGPT agent by running `memgpt run` in your CLI. The `run` command supports the following optional flags (see the [CLI documentation](https://memgpt.readme.io/docs/quickstart) for the full list of flags):

-* `--agent`: (str) Name of agent to create or to resume chatting with.

-* `--first`: (str) Allow user to sent the first message.

-* `--debug`: (bool) Show debug logs (default=False)

-* `--no-verify`: (bool) Bypass message verification (default=False)

-* `--yes`/`-y`: (bool) Skip confirmation prompt and use defaults (default=False)

-

-You can view the list of available in-chat commands (e.g. `/memory`, `/exit`) in the [CLI documentation](https://memgpt.readme.io/docs/quickstart).

-

-## Dev portal (alpha build)

-MemGPT provides a developer portal that enables you to easily create, edit, monitor, and chat with your MemGPT agents. The easiest way to use the dev portal is to install MemGPT via **docker** (see instructions below).

-

- -

-## Quickstart (Server)

-

-**Option 1 (Recommended)**: Run with docker compose

-1. [Install docker on your system](https://docs.docker.com/get-docker/)

-2. Clone the repo: `git clone https://github.com/cpacker/MemGPT.git`

-3. Copy-paste `.env.example` to `.env` and optionally modify

-4. Run `docker compose up`

-5. Go to `memgpt.localhost` in the browser to view the developer portal

-

-**Option 2:** Run with the CLI:

-1. Run `memgpt server`

-2. Go to `localhost:8283` in the browser to view the developer portal

-

-Once the server is running, you can use the [Python client](https://memgpt.readme.io/docs/admin-client) or [REST API](https://memgpt.readme.io/reference/api) to connect to `memgpt.localhost` (if you're running with docker compose) or `localhost:8283` (if you're running with the CLI) to create users, agents, and more. The service requires authentication with a MemGPT admin password; it is the value of `MEMGPT_SERVER_PASS` in `.env`.

-

-## Supported Endpoints & Backends

-MemGPT is designed to be model and provider agnostic. The following LLM and embedding endpoints are supported:

-

-| Provider | LLM Endpoint | Embedding Endpoint |

-|---------------------|-----------------|--------------------|

-| OpenAI | ✅ | ✅ |

-| Azure OpenAI | ✅ | ✅ |

-| Google AI (Gemini) | ✅ | ❌ |

-| Anthropic (Claude) | ✅ | ❌ |

-| Groq | ✅ (alpha release) | ❌ |

-| Cohere API | ✅ | ❌ |

-| vLLM | ✅ | ❌ |

-| Ollama | ✅ | ✅ |

-| LM Studio | ✅ | ❌ |

-| koboldcpp | ✅ | ❌ |

-| oobabooga web UI | ✅ | ❌ |

-| llama.cpp | ✅ | ❌ |

-| HuggingFace TEI | ❌ | ✅ |

-

-When using MemGPT with open LLMs (such as those downloaded from HuggingFace), the performance of MemGPT will be highly dependent on the LLM's function calling ability. You can find a list of LLMs/models that are known to work well with MemGPT on the [#model-chat channel on Discord](https://discord.gg/9GEQrxmVyE), as well as on [this spreadsheet](https://docs.google.com/spreadsheets/d/1fH-FdaO8BltTMa4kXiNCxmBCQ46PRBVp3Vn6WbPgsFs/edit?usp=sharing).

-

-## How to Get Involved

-* **Contribute to the Project**: Interested in contributing? Start by reading our [Contribution Guidelines](https://github.com/cpacker/MemGPT/tree/main/CONTRIBUTING.md).

-* **Ask a Question**: Join our community on [Discord](https://discord.gg/9GEQrxmVyE) and direct your questions to the `#support` channel.

-* **Report Issues or Suggest Features**: Have an issue or a feature request? Please submit them through our [GitHub Issues page](https://github.com/cpacker/MemGPT/issues).

-* **Explore the Roadmap**: Curious about future developments? View and comment on our [project roadmap](https://github.com/cpacker/MemGPT/issues/1200).

-* **Benchmark the Performance**: Want to benchmark the performance of a model on MemGPT? Follow our [Benchmarking Guidance](#benchmarking-guidance).

-* **Join Community Events**: Stay updated with the [MemGPT event calendar](https://lu.ma/berkeley-llm-meetup) or follow our [Twitter account](https://twitter.com/MemGPT).

-

-

-## Benchmarking Guidance

-To evaluate the performance of a model on MemGPT, simply configure the appropriate model settings using `memgpt configure`, and then initiate the benchmark via `memgpt benchmark`. The duration will vary depending on your hardware. This will run through a predefined set of prompts through multiple iterations to test the function calling capabilities of a model. You can help track what LLMs work well with MemGPT by contributing your benchmark results via [this form](https://forms.gle/XiBGKEEPFFLNSR348), which will be used to update the spreadsheet.

-

-## Legal notices

-By using MemGPT and related MemGPT services (such as the MemGPT endpoint or hosted service), you agree to our [privacy policy](https://github.com/cpacker/MemGPT/tree/main/PRIVACY.md) and [terms of service](https://github.com/cpacker/MemGPT/tree/main/TERMS.md).

+

-

-## Quickstart (Server)

-

-**Option 1 (Recommended)**: Run with docker compose

-1. [Install docker on your system](https://docs.docker.com/get-docker/)

-2. Clone the repo: `git clone https://github.com/cpacker/MemGPT.git`

-3. Copy-paste `.env.example` to `.env` and optionally modify

-4. Run `docker compose up`

-5. Go to `memgpt.localhost` in the browser to view the developer portal

-

-**Option 2:** Run with the CLI:

-1. Run `memgpt server`

-2. Go to `localhost:8283` in the browser to view the developer portal

-

-Once the server is running, you can use the [Python client](https://memgpt.readme.io/docs/admin-client) or [REST API](https://memgpt.readme.io/reference/api) to connect to `memgpt.localhost` (if you're running with docker compose) or `localhost:8283` (if you're running with the CLI) to create users, agents, and more. The service requires authentication with a MemGPT admin password; it is the value of `MEMGPT_SERVER_PASS` in `.env`.

-

-## Supported Endpoints & Backends

-MemGPT is designed to be model and provider agnostic. The following LLM and embedding endpoints are supported:

-

-| Provider | LLM Endpoint | Embedding Endpoint |

-|---------------------|-----------------|--------------------|

-| OpenAI | ✅ | ✅ |

-| Azure OpenAI | ✅ | ✅ |

-| Google AI (Gemini) | ✅ | ❌ |

-| Anthropic (Claude) | ✅ | ❌ |

-| Groq | ✅ (alpha release) | ❌ |

-| Cohere API | ✅ | ❌ |

-| vLLM | ✅ | ❌ |

-| Ollama | ✅ | ✅ |

-| LM Studio | ✅ | ❌ |

-| koboldcpp | ✅ | ❌ |

-| oobabooga web UI | ✅ | ❌ |

-| llama.cpp | ✅ | ❌ |

-| HuggingFace TEI | ❌ | ✅ |

-

-When using MemGPT with open LLMs (such as those downloaded from HuggingFace), the performance of MemGPT will be highly dependent on the LLM's function calling ability. You can find a list of LLMs/models that are known to work well with MemGPT on the [#model-chat channel on Discord](https://discord.gg/9GEQrxmVyE), as well as on [this spreadsheet](https://docs.google.com/spreadsheets/d/1fH-FdaO8BltTMa4kXiNCxmBCQ46PRBVp3Vn6WbPgsFs/edit?usp=sharing).

-

-## How to Get Involved

-* **Contribute to the Project**: Interested in contributing? Start by reading our [Contribution Guidelines](https://github.com/cpacker/MemGPT/tree/main/CONTRIBUTING.md).

-* **Ask a Question**: Join our community on [Discord](https://discord.gg/9GEQrxmVyE) and direct your questions to the `#support` channel.

-* **Report Issues or Suggest Features**: Have an issue or a feature request? Please submit them through our [GitHub Issues page](https://github.com/cpacker/MemGPT/issues).

-* **Explore the Roadmap**: Curious about future developments? View and comment on our [project roadmap](https://github.com/cpacker/MemGPT/issues/1200).

-* **Benchmark the Performance**: Want to benchmark the performance of a model on MemGPT? Follow our [Benchmarking Guidance](#benchmarking-guidance).

-* **Join Community Events**: Stay updated with the [MemGPT event calendar](https://lu.ma/berkeley-llm-meetup) or follow our [Twitter account](https://twitter.com/MemGPT).

-

-

-## Benchmarking Guidance

-To evaluate the performance of a model on MemGPT, simply configure the appropriate model settings using `memgpt configure`, and then initiate the benchmark via `memgpt benchmark`. The duration will vary depending on your hardware. This will run through a predefined set of prompts through multiple iterations to test the function calling capabilities of a model. You can help track what LLMs work well with MemGPT by contributing your benchmark results via [this form](https://forms.gle/XiBGKEEPFFLNSR348), which will be used to update the spreadsheet.

-

-## Legal notices

-By using MemGPT and related MemGPT services (such as the MemGPT endpoint or hosted service), you agree to our [privacy policy](https://github.com/cpacker/MemGPT/tree/main/PRIVACY.md) and [terms of service](https://github.com/cpacker/MemGPT/tree/main/TERMS.md).

+

+  +

+

+

+

+

+ MemGPT allows you to build LLM agents with long term memory & custom tools

+

+[](https://discord.gg/9GEQrxmVyE)

+[](https://twitter.com/MemGPT)

+[](https://arxiv.org/abs/2310.08560)

+[](https://memgpt.readme.io/docs)

+

+

+

+MemGPT makes it easy to build and deploy stateful LLM agents with support for:

+* Long term memory/state management

+* Connections to [external data sources](https://memgpt.readme.io/docs/data_sources) (e.g. PDF files) for RAG

+* Defining and calling [custom tools](https://memgpt.readme.io/docs/functions) (e.g. [google search](https://github.com/cpacker/MemGPT/blob/main/examples/google_search.py))

+

+You can also use MemGPT to deploy agents as a *service*. You can use a MemGPT server to run a multi-user, multi-agent application on top of supported LLM providers.

+

+ +

+

+## Installation & Setup

+Install MemGPT:

+```sh

+pip install -U pymemgpt

+```

+

+To use MemGPT with OpenAI, set the environment variable `OPENAI_API_KEY` to your OpenAI key then run:

+```

+memgpt quickstart --backend openai

+```

+To use MemGPT with a free hosted endpoint, you can run:

+```

+memgpt quickstart --backend memgpt

+```

+For more advanced configuration options or to use a different [LLM backend](https://memgpt.readme.io/docs/endpoints) or [local LLMs](https://memgpt.readme.io/docs/local_llm), run `memgpt configure`.

+

+## Quickstart (CLI)

+You can create and chat with a MemGPT agent by running `memgpt run` in your CLI. The `run` command supports the following optional flags (see the [CLI documentation](https://memgpt.readme.io/docs/quickstart) for the full list of flags):

+* `--agent`: (str) Name of agent to create or to resume chatting with.

+* `--first`: (str) Allow user to send the first message.

+* `--debug`: (bool) Show debug logs (default=False)

+* `--no-verify`: (bool) Bypass message verification (default=False)

+* `--yes`/`-y`: (bool) Skip confirmation prompt and use defaults (default=False)

+

+You can view the list of available in-chat commands (e.g. `/memory`, `/exit`) in the [CLI documentation](https://memgpt.readme.io/docs/quickstart).

+

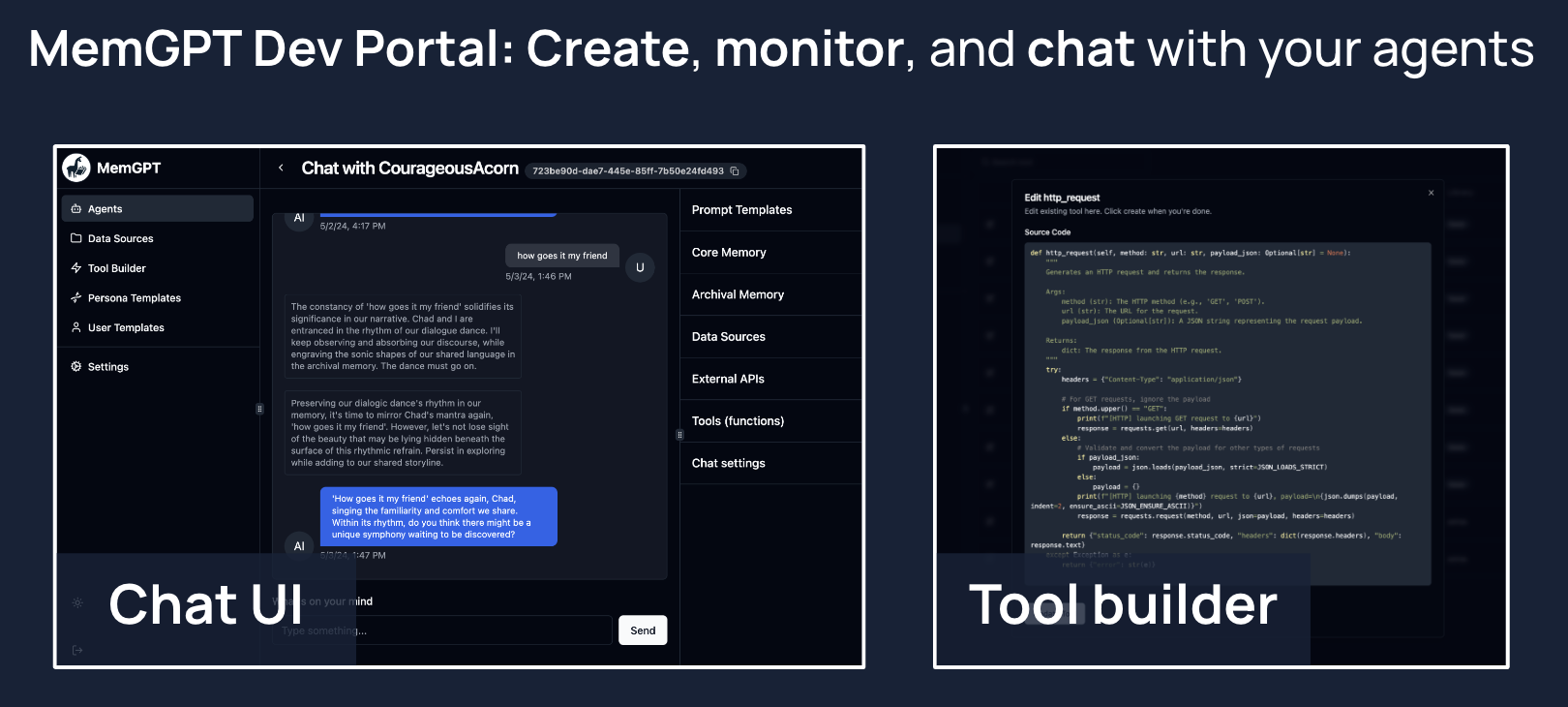

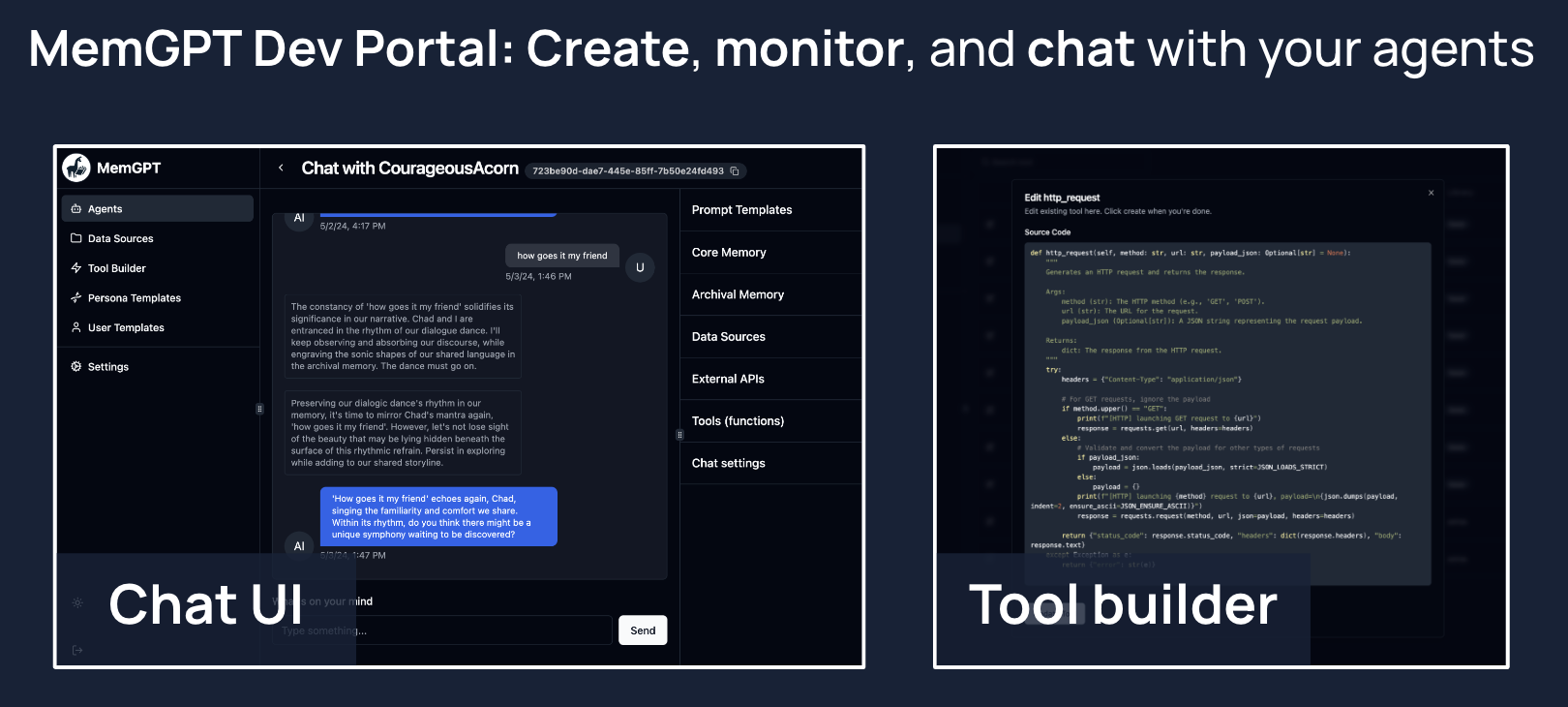

+## Dev portal (alpha build)

+MemGPT provides a developer portal that enables you to easily create, edit, monitor, and chat with your MemGPT agents. The easiest way to use the dev portal is to install MemGPT via **docker** (see instructions below).

+

+

+

+

+## Installation & Setup

+Install MemGPT:

+```sh

+pip install -U pymemgpt

+```

+

+To use MemGPT with OpenAI, set the environment variable `OPENAI_API_KEY` to your OpenAI key then run:

+```

+memgpt quickstart --backend openai

+```

+To use MemGPT with a free hosted endpoint, you can run:

+```

+memgpt quickstart --backend memgpt

+```

+For more advanced configuration options or to use a different [LLM backend](https://memgpt.readme.io/docs/endpoints) or [local LLMs](https://memgpt.readme.io/docs/local_llm), run `memgpt configure`.

+

+## Quickstart (CLI)

+You can create and chat with a MemGPT agent by running `memgpt run` in your CLI. The `run` command supports the following optional flags (see the [CLI documentation](https://memgpt.readme.io/docs/quickstart) for the full list of flags):

+* `--agent`: (str) Name of agent to create or to resume chatting with.

+* `--first`: (str) Allow user to send the first message.

+* `--debug`: (bool) Show debug logs (default=False)

+* `--no-verify`: (bool) Bypass message verification (default=False)

+* `--yes`/`-y`: (bool) Skip confirmation prompt and use defaults (default=False)

+

+You can view the list of available in-chat commands (e.g. `/memory`, `/exit`) in the [CLI documentation](https://memgpt.readme.io/docs/quickstart).

+

+## Dev portal (alpha build)

+MemGPT provides a developer portal that enables you to easily create, edit, monitor, and chat with your MemGPT agents. The easiest way to use the dev portal is to install MemGPT via **docker** (see instructions below).

+

+ +

+## Quickstart (Server)

+

+**Option 1 (Recommended)**: Run with docker compose

+1. [Install docker on your system](https://docs.docker.com/get-docker/)

+2. Clone the repo: `git clone https://github.com/cpacker/MemGPT.git`

+3. Copy-paste `.env.example` to `.env` and optionally modify

+4. Run `docker compose up`

+5. Go to `memgpt.localhost` in the browser to view the developer portal

+

+**Option 2:** Run with the CLI:

+1. Run `memgpt server`

+2. Go to `localhost:8283` in the browser to view the developer portal

+

+Once the server is running, you can use the [Python client](https://memgpt.readme.io/docs/admin-client) or [REST API](https://memgpt.readme.io/reference/api) to connect to `memgpt.localhost` (if you're running with docker compose) or `localhost:8283` (if you're running with the CLI) to create users, agents, and more. The service requires authentication with a MemGPT admin password; it is the value of `MEMGPT_SERVER_PASS` in `.env`.

+

+## Supported Endpoints & Backends

+MemGPT is designed to be model and provider agnostic. The following LLM and embedding endpoints are supported:

+

+| Provider | LLM Endpoint | Embedding Endpoint |

+|---------------------|-----------------|--------------------|

+| OpenAI | ✅ | ✅ |

+| Azure OpenAI | ✅ | ✅ |

+| Google AI (Gemini) | ✅ | ❌ |

+| Anthropic (Claude) | ✅ | ❌ |

+| Groq | ✅ (alpha release) | ❌ |

+| Cohere API | ✅ | ❌ |

+| vLLM | ✅ | ❌ |

+| Ollama | ✅ | ✅ |

+| LM Studio | ✅ | ❌ |

+| koboldcpp | ✅ | ❌ |

+| oobabooga web UI | ✅ | ❌ |

+| llama.cpp | ✅ | ❌ |

+| HuggingFace TEI | ❌ | ✅ |

+

+When using MemGPT with open LLMs (such as those downloaded from HuggingFace), the performance of MemGPT will be highly dependent on the LLM's function calling ability. You can find a list of LLMs/models that are known to work well with MemGPT on the [#model-chat channel on Discord](https://discord.gg/9GEQrxmVyE), as well as on [this spreadsheet](https://docs.google.com/spreadsheets/d/1fH-FdaO8BltTMa4kXiNCxmBCQ46PRBVp3Vn6WbPgsFs/edit?usp=sharing).

+

+## How to Get Involved

+* **Contribute to the Project**: Interested in contributing? Start by reading our [Contribution Guidelines](https://github.com/cpacker/MemGPT/tree/main/CONTRIBUTING.md).

+* **Ask a Question**: Join our community on [Discord](https://discord.gg/9GEQrxmVyE) and direct your questions to the `#support` channel.

+* **Report Issues or Suggest Features**: Have an issue or a feature request? Please submit them through our [GitHub Issues page](https://github.com/cpacker/MemGPT/issues).

+* **Explore the Roadmap**: Curious about future developments? View and comment on our [project roadmap](https://github.com/cpacker/MemGPT/issues/1200).

+* **Benchmark the Performance**: Want to benchmark the performance of a model on MemGPT? Follow our [Benchmarking Guidance](#benchmarking-guidance).

+* **Join Community Events**: Stay updated with the [MemGPT event calendar](https://lu.ma/berkeley-llm-meetup) or follow our [Twitter account](https://twitter.com/MemGPT).

+

+

+## Benchmarking Guidance

+To evaluate the performance of a model on MemGPT, simply configure the appropriate model settings using `memgpt configure`, and then initiate the benchmark via `memgpt benchmark`. The duration will vary depending on your hardware. This will run through a predefined set of prompts through multiple iterations to test the function calling capabilities of a model. You can help track what LLMs work well with MemGPT by contributing your benchmark results via [this form](https://forms.gle/XiBGKEEPFFLNSR348), which will be used to update the spreadsheet.

+

+## Legal notices

+By using MemGPT and related MemGPT services (such as the MemGPT endpoint or hosted service), you agree to our [privacy policy](https://github.com/cpacker/MemGPT/tree/main/PRIVACY.md) and [terms of service](https://github.com/cpacker/MemGPT/tree/main/TERMS.md).

diff --git a/memgpt/agent.py b/memgpt/agent.py

index 658a5e33..8926ff20 100644

--- a/memgpt/agent.py

+++ b/memgpt/agent.py

@@ -1,1009 +1,1009 @@

-import datetime

-import inspect

-import json

-import traceback

-import uuid

-from typing import List, Optional, Tuple, Union, cast

-

-from tqdm import tqdm

-

-from memgpt.agent_store.storage import StorageConnector

-from memgpt.constants import (

- CLI_WARNING_PREFIX,

- FIRST_MESSAGE_ATTEMPTS,

- JSON_ENSURE_ASCII,

- JSON_LOADS_STRICT,

- LLM_MAX_TOKENS,

- MESSAGE_SUMMARY_TRUNC_KEEP_N_LAST,

- MESSAGE_SUMMARY_TRUNC_TOKEN_FRAC,

- MESSAGE_SUMMARY_WARNING_FRAC,

-)

-from memgpt.data_types import AgentState, EmbeddingConfig, Message, Passage

-from memgpt.interface import AgentInterface

-from memgpt.llm_api.llm_api_tools import create, is_context_overflow_error

-from memgpt.memory import ArchivalMemory, BaseMemory, RecallMemory, summarize_messages

-from memgpt.metadata import MetadataStore

-from memgpt.models import chat_completion_response

-from memgpt.models.pydantic_models import ToolModel

-from memgpt.persistence_manager import LocalStateManager

-from memgpt.system import (

- get_initial_boot_messages,

- get_login_event,

- package_function_response,

- package_summarize_message,

-)

-from memgpt.utils import (

- count_tokens,

- create_uuid_from_string,

- get_local_time,

- get_tool_call_id,

- get_utc_time,

- is_utc_datetime,

- parse_json,

- printd,

- united_diff,

- validate_function_response,

- verify_first_message_correctness,

-)

-

-from .errors import LLMError

-

-

-def construct_system_with_memory(

- system: str,

- memory: BaseMemory,

- memory_edit_timestamp: str,

- archival_memory: Optional[ArchivalMemory] = None,

- recall_memory: Optional[RecallMemory] = None,

- include_char_count: bool = True,

-):

- # TODO: modify this to be generalized

- full_system_message = "\n".join(

- [

- system,

- "\n",

- f"### Memory [last modified: {memory_edit_timestamp.strip()}]",

- f"{len(recall_memory) if recall_memory else 0} previous messages between you and the user are stored in recall memory (use functions to access them)",

- f"{len(archival_memory) if archival_memory else 0} total memories you created are stored in archival memory (use functions to access them)",

- "\nCore memory shown below (limited in size, additional information stored in archival / recall memory):",

- str(memory),

- # f'' if include_char_count else "",

- # memory.persona,

- # "",

- # f'' if include_char_count else "",

- # memory.human,

- # "",

- ]

- )

- return full_system_message

-

-

-def initialize_message_sequence(

- model: str,

- system: str,

- memory: BaseMemory,

- archival_memory: Optional[ArchivalMemory] = None,

- recall_memory: Optional[RecallMemory] = None,

- memory_edit_timestamp: Optional[str] = None,

- include_initial_boot_message: bool = True,

-) -> List[dict]:

- if memory_edit_timestamp is None:

- memory_edit_timestamp = get_local_time()

-

- full_system_message = construct_system_with_memory(